
The following are the report’s key findings:

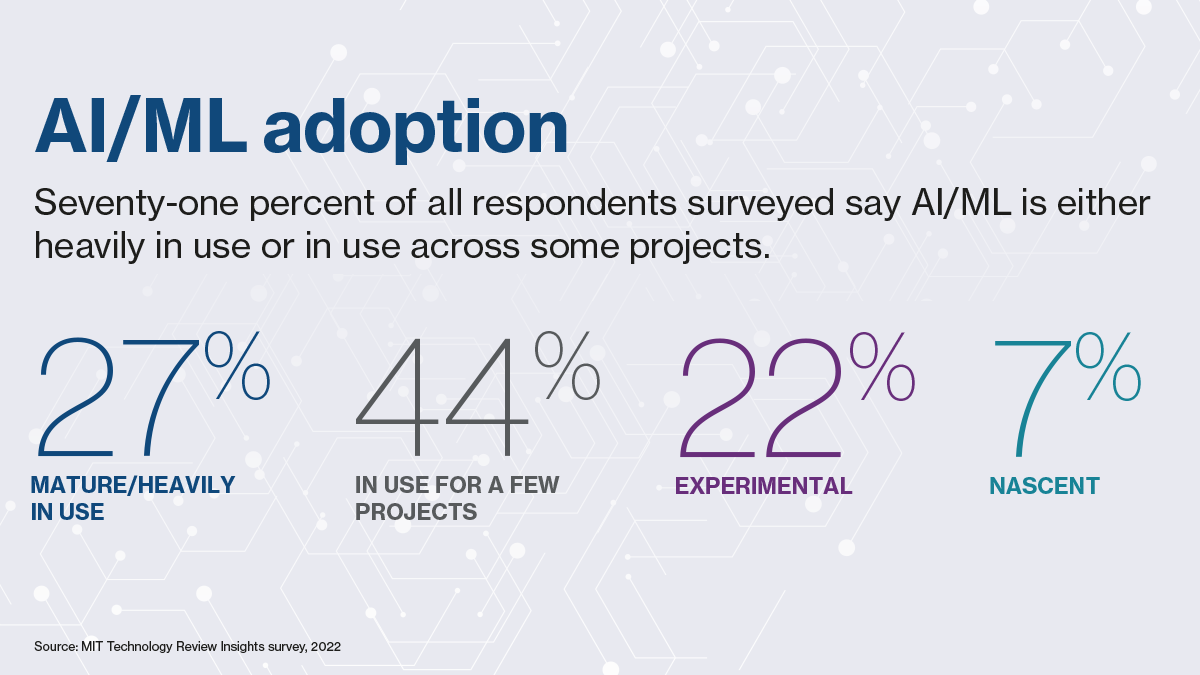

Businesses buy into AI/ML, but struggle to scale across the organization. The vast majority (93%) of respondents have several experimental or in-use AI/ML projects, with larger companies likely to have greater deployment. A majority (82%) say ML investment will increase during the next 18 months, and closely tie AI and ML to revenue goals. Yet scaling is a major challenge, as are hiring skilled workers, finding appropriate use cases, and showing value.

Deployment success requires a talent and skills strategy. The challenge goes further than attracting core data scientists. Firms need hybrid and translator talent to guide AI/ML design, testing, and governance, and a workforce strategy to ensure all users play a role in technology development. Competitive companies should offer workers clear opportunities, progression, and impact that set them apart. For the broader workforce, upskilling and engagement are key to supporting AI/ML innovations.

Centers of excellence (CoE) provide a foundation for broad deployment, balancing technology-sharing with tailored solutions. Companies with mature capabilities, usually larger companies, tend to develop systems in-house. A CoE provides a hub-and-spoke model, with core ML consulting across divisions to develop widely deployable solutions alongside bespoke tools. ML teams should be incentivized to stay abreast of rapidly evolving AI/ML data science developments.

AI/ML governance requires robust model operations, including data transparency and provenance, regulatory foresight, and responsible AI. The intersection of multiple automated systems can bring increased risk, such as cybersecurity issues, unlawful discrimination, and macro volatility, to advanced data science tools. Regulators and civil society groups are scrutinizing AI that affects citizens and governments, with special attention to systemically important sectors. Companies need a responsible AI strategy based on full data provenance, risk assessment, and checks and controls. This requires technical interventions, such as automated flagging for AI/ML model faults or risks, as well as social, cultural, and other business reforms.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff.
