
Introduction

Managing streaming data from a source system, like PostgreSQL, MongoDB or DynamoDB, into a downstream system for real-time analytics is a challenge for many teams. The flow of data often involves complex ETL tooling as well as self-managed integrations to ensure that high-volume writes, including updates and deletes, do not rack up CPU or impact the performance of the end application.

For a system like Elasticsearch, engineers need to have in-depth knowledge of the underlying architecture in order to efficiently ingest streaming data. Elasticsearch was designed for log analytics where data is not frequently changing, posing additional challenges when dealing with transactional data.

Rockset, on the other hand, is a cloud-native database, removing a lot of the tooling and overhead required to get data into the system. As Rockset is purpose-built for real-time analytics, it has also been designed for field-level mutability, decreasing the CPU required to process inserts, updates and deletes.

In this blog, we’ll compare and contrast how Elasticsearch and Rockset handle data ingestion as well as provide practical techniques for using these systems for real-time analytics.

Elasticsearch

Data Ingestion in Elasticsearch

While there are many ways to ingest data into Elasticsearch, we cover three common methods for real-time analytics:

- Ingest data from a relational database into Elasticsearch using the Logstash JDBC input plugin

- Ingest data from Kafka into Elasticsearch using the Kafka Elasticsearch Service Sink Connector

- Ingest data directly from the application into Elasticsearch using the REST API and client libraries

Ingest data from a relational database into Elasticsearch using the Logstash JDBC input plugin

The Logstash JDBC input plugin can be used to offload data from a relational database like PostgreSQL or MySQL to Elasticsearch for search and analytics.

Logstash is an event processing pipeline that ingests and transforms data before sending it to Elasticsearch. Logstash offers a JDBC input plugin that periodically polls a relational database, like PostgreSQL or MySQL, for inserts and updates. To use this service, your relational database needs to provide timestamped records that Logstash can read to determine which changes have occurred.

This ingestion approach works well for inserts and updates, but additional considerations are needed for deletions. That’s because it’s not possible for Logstash to determine what’s been deleted in your OLTP database. Users can get around this limitation by implementing soft deletes, where a flag is applied to the deleted record and used to filter out data at query time. Or, they can periodically scan their relational database to get access to the most up-to-date records and reindex the data in Elasticsearch.
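To make the polling and soft-delete behavior concrete, here is a minimal sketch in Python. It does not use Logstash itself; it only imitates the idea of a timestamp-based poll against an in-memory SQLite table. The `products` table, the `updated_at` and `is_deleted` columns and the `fetch_changes` helper are all hypothetical names chosen for illustration.

```python
import sqlite3

def fetch_changes(conn, last_seen):
    """Return rows modified after last_seen, oldest first (a timestamp-based poll)."""
    cur = conn.execute(
        "SELECT id, name, is_deleted, updated_at FROM products "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    )
    return cur.fetchall()

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE products ("
    "id INTEGER PRIMARY KEY, name TEXT, "
    "is_deleted INTEGER DEFAULT 0, updated_at INTEGER)"
)
conn.executemany(
    "INSERT INTO products (id, name, updated_at) VALUES (?, ?, ?)",
    [(1, "lamp", 100), (2, "desk", 105)],
)

# The first poll picks up both inserts and records a checkpoint.
first_poll = fetch_changes(conn, last_seen=0)
checkpoint = max(row[3] for row in first_poll)

# A soft delete is an ordinary update, so the next poll can see it.
conn.execute("UPDATE products SET is_deleted = 1, updated_at = 110 WHERE id = 2")
# A hard delete leaves no row behind for a timestamp query to find.
conn.execute("DELETE FROM products WHERE id = 1")

second_poll = fetch_changes(conn, last_seen=checkpoint)
```

In this sketch the second poll returns only the soft-deleted row for `id = 2`. The hard delete of `id = 1` is invisible to the poll, which is the limitation described above.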

Ingest data from Kafka into Elasticsearch using the Kafka Elasticsearch Service Sink Connector

It’s also common to use an event streaming platform like Kafka to send data from source systems into Elasticsearch for real-time analytics.

Confluent and Elastic partnered in the release of the Kafka Elasticsearch Service Sink Connector, available to companies using both the managed Confluent Kafka and Elastic Elasticsearch offerings. The connector does require installing and managing additional tooling, Kafka Connect.

Using the connector, you can map each topic in Kafka to a single index type in Elasticsearch. If dynamic typing is used as the index type, then Elasticsearch does support some schema changes such as adding fields, removing fields and changing types.

One of the challenges that does arise in using Kafka is needing to reindex the data in Elasticsearch when you want to modify the analyzer, tokenizer or indexed fields. This is because the mapping cannot be changed once it is already defined. To perform a reindex of the data, you will need to double write to the original index and the new index, move the data from the original index to the new index and then stop the original connector job.

If you do not use managed services from Confluent or Elastic, you can use the open-source Kafka plugin for Logstash to send data to Elasticsearch.

Ingest data directly from the application into Elasticsearch using the REST API and client libraries

Elasticsearch offers the ability to use supported client libraries including Java, JavaScript, Ruby, Go, Python and more to ingest data via the REST API directly from your application. One of the challenges in using a client library is that it has to be configured to work with queueing and back-pressure for the case when Elasticsearch is unable to handle the ingest load. Without a queueing system in place, data can be lost on its way into Elasticsearch.
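One way to picture queueing with back-pressure is a bounded in-memory buffer that refuses new documents when it is full, so the application can slow down or retry rather than drop writes silently. The sketch below uses only the Python standard library. `BufferedIndexer` and its `send_batch` callback are hypothetical names, not part of any Elasticsearch client, and a production setup would more likely use a durable queue.

```python
import queue

class BufferedIndexer:
    """Hypothetical helper: buffers documents and ships them in batches."""

    def __init__(self, send_batch, max_pending=1000, batch_size=100):
        self._send_batch = send_batch                    # callable that ships a list of docs
        self._pending = queue.Queue(maxsize=max_pending) # bounded buffer
        self._batch_size = batch_size

    def submit(self, doc, timeout=1.0):
        """Queue a document; return False if the buffer stays full (back-pressure)."""
        try:
            self._pending.put(doc, timeout=timeout)
            return True
        except queue.Full:
            return False

    def flush(self):
        """Drain up to batch_size documents and hand them to send_batch."""
        batch = []
        while len(batch) < self._batch_size:
            try:
                batch.append(self._pending.get_nowait())
            except queue.Empty:
                break
        if batch:
            self._send_batch(batch)
        return len(batch)


# Usage: a buffer of two slots rejects the third document until a flush frees space.
sent = []
indexer = BufferedIndexer(sent.append, max_pending=2, batch_size=2)
accepted = [indexer.submit({"id": i}, timeout=0.01) for i in range(3)]
flushed = indexer.flush()
```

The caller decides what a `False` return means: block, retry later or surface an error. The point of the design is that the overload becomes visible to the application instead of turning into lost documents.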

Updates, Inserts and Deletes in Elasticsearch

Elasticsearch has an Update API that can be used to process updates and deletes. The Update API reduces the number of network trips and the potential for version conflicts. The Update API retrieves the existing document from the index, processes the change and then indexes the data again. That said, Elasticsearch does not offer in-place updates or deletes. So, the entire document still must be reindexed, a CPU-intensive operation.

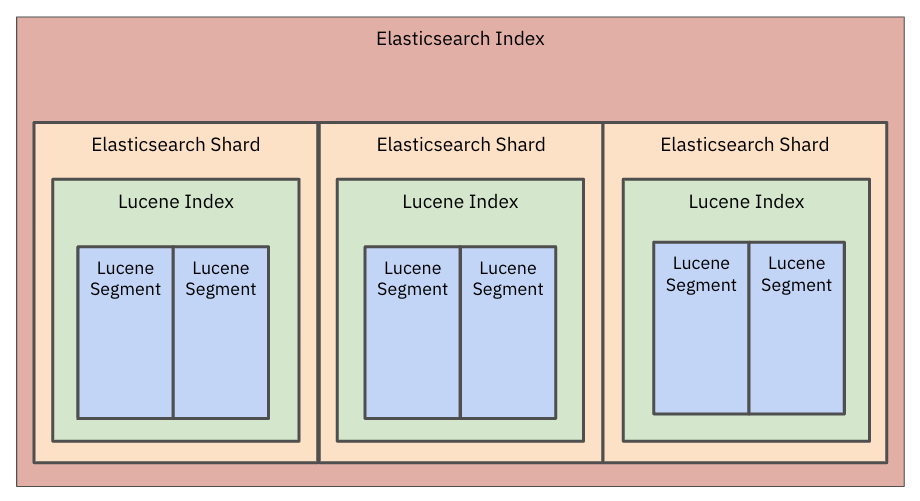

Under the hood, Elasticsearch data is stored in a Lucene index and that index is broken down into smaller segments. Each segment is immutable, so documents cannot be changed. When an update is made, the old document is marked for deletion and a new document is merged to form a new segment. In order to use the updated document, all of the analyzers need to be run, which can also increase CPU usage. It’s common for customers with constantly changing data to see index merges eat up a considerable amount of their overall Elasticsearch compute bill.

Image 1: Elasticsearch data is stored in a Lucene index and that index is broken down into smaller segments.
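The update path described above can be sketched with a toy model. This is not Lucene code: `Segment` and `ToyIndex` are hypothetical classes, and for simplicity every write creates its own segment, whereas a real index buffers many documents per segment. The sketch only illustrates that changing one field means marking the old copy as deleted and writing the whole document again.

```python
class Segment:
    """Toy stand-in for an immutable segment."""

    def __init__(self, docs):
        self._docs = dict(docs)  # doc_id -> document, never modified after creation
        self.deleted = set()     # ids that have been marked as deleted

    def contains(self, doc_id):
        return doc_id in self._docs and doc_id not in self.deleted

    def get(self, doc_id):
        return self._docs[doc_id]


class ToyIndex:
    def __init__(self):
        self.segments = []

    def index(self, doc_id, doc):
        # An update marks the old copy as deleted...
        for seg in self.segments:
            if seg.contains(doc_id):
                seg.deleted.add(doc_id)
        # ...and writes the entire document again into a new segment.
        self.segments.append(Segment({doc_id: doc}))

    def get(self, doc_id):
        for seg in self.segments:
            if seg.contains(doc_id):
                return seg.get(doc_id)
        return None


idx = ToyIndex()
idx.index("1", {"name": "lamp", "price": 20})
idx.index("1", {"name": "lamp", "price": 18})  # only the price changed
```

After the second call the index holds two segments: the first still stores the stale document (flagged as deleted) and the second stores the full new copy. Reclaiming the stale copy is left to a later merge.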

Given the amount of resources required, Elastic recommends limiting the number of updates into Elasticsearch. A reference customer of Elasticsearch, Bol.com, used Elasticsearch for site search as part of their e-commerce platform. Bol.com had roughly 700K updates per day made to their offerings, including content, pricing and availability changes. They originally wanted a solution that stayed in sync with any changes as they occurred. But, given the impact of updates on Elasticsearch system performance, they opted to allow for 15-20 minute delays. The batching of documents into Elasticsearch ensured consistent query performance.
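To get a feel for these numbers, the short calculation below converts 700K updates per day into an average rate and an average batch size. It assumes updates are spread evenly over the day, which is a simplification made here and not a claim from Bol.com.

```python
UPDATES_PER_DAY = 700_000
SECONDS_PER_DAY = 24 * 60 * 60

def updates_per_window(window_minutes):
    """Average number of updates collected in one batching window."""
    windows_per_day = (24 * 60) / window_minutes
    return UPDATES_PER_DAY / windows_per_day

avg_per_second = UPDATES_PER_DAY / SECONDS_PER_DAY
batch_15 = updates_per_window(15)
batch_20 = updates_per_window(20)
```

Under that assumption the average is about 8 updates per second, and a 15 to 20 minute delay turns that stream into batches of roughly 7,300 to 9,700 documents.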

Deletions and Segment Merge Challenges in Elasticsearch

In Elasticsearch, there can be challenges related to the deletion of old documents and the reclaiming of space.

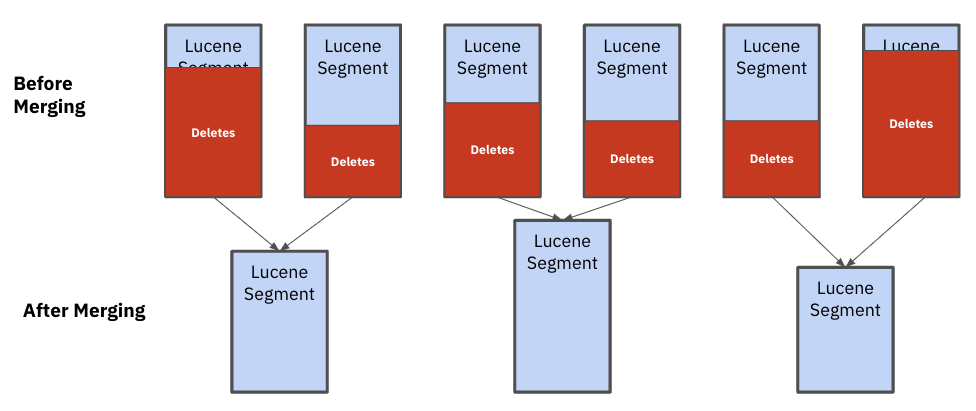

Elasticsearch completes a segment merge in the background when there are a large number of segments in an index or there are a lot of documents in a segment that are marked for deletion. A segment merge is when documents are copied from existing segments into a newly formed segment and the remaining segments are deleted. Unfortunately, Lucene is not good at sizing the segments that need to be merged, potentially creating uneven segments that impact performance and stability.

Image 2: After merging, you can see that the Lucene segments are all different sizes. These uneven segments impact performance and stability.

That’s because Elasticsearch assumes all documents are uniformly sized and makes merge decisions based on the number of documents deleted. When dealing with heterogeneous document sizes, as is often the case in multi-tenant applications, some segments will grow faster in size than others, slowing down performance for the largest customers on the application. In these cases, the only remedy is to reindex a large amount of data.

Replica Challenges in Elasticsearch

Elasticsearch uses a primary-backup model for replication. The primary replica processes an incoming write operation and then forwards the operation to its replicas. Each replica receives this operation and re-indexes the data locally again. This means that every replica independently spends costly compute resources to re-index the same document over and over again. If there are n replicas, Elastic would spend n times the CPU to index the same document. This can exacerbate the amount of data that needs to be reindexed when an update or insert occurs.

Bulk API and Queue Challenges in Elasticsearch

While you can use the Update API in Elasticsearch, it’s generally recommended to batch frequent changes using the Bulk API. When using the Bulk API, engineering teams will often need to create and manage a queue to streamline updates into the system.

A queue is independent of Elasticsearch and will need to be configured and managed. The queue will consolidate the inserts, updates and deletes to the system within a specific time interval, say 15 minutes, to limit the impact on Elasticsearch. The queuing system will also apply a throttle when the rate of insertion is high to ensure application stability. While queues are helpful for updates, they are not good at determining when there are a lot of data changes that require a full reindex of the data. This can occur at any time if there are a lot of updates to the system. It is common for teams running Elastic at scale to have dedicated operations members managing and tuning their queues on a daily basis.
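The consolidation step can be sketched as follows: collect the changes from one time window, keep only the latest change per document and render the result in the newline-delimited JSON format that the Bulk API accepts. The `coalesce` and `to_bulk_body` helpers and the shape of the change records are hypothetical. The sketch also assumes each change carries the full document, since "latest change wins" would not be safe for partial updates.

```python
import json

def coalesce(changes):
    """Keep only the latest change per document id (changes arrive in order)."""
    latest = {}
    for change in changes:
        latest[change["id"]] = change
    return list(latest.values())

def to_bulk_body(index, changes):
    """Render changes as newline-delimited JSON for the Bulk API."""
    lines = []
    for change in changes:
        meta = {"_index": index, "_id": change["id"]}
        if change["op"] == "delete":
            # A delete action has no source line.
            lines.append(json.dumps({"delete": meta}))
        else:
            # An index action is followed by the full document source.
            lines.append(json.dumps({"index": meta}))
            lines.append(json.dumps(change["doc"]))
    return "\n".join(lines) + "\n"  # the bulk body must end with a newline

window = [
    {"op": "index", "id": "1", "doc": {"price": 20}},
    {"op": "index", "id": "2", "doc": {"price": 5}},
    {"op": "index", "id": "1", "doc": {"price": 18}},
    {"op": "delete", "id": "2"},
]
body = to_bulk_body("products", coalesce(window))
```

Four incoming changes collapse into two bulk actions here: one index action for document 1 with the latest price and one delete action for document 2. That reduction is what limits the write load on the cluster, at the cost of the batching delay.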

Reindexing in Elasticsearch

As mentioned in the previous section, when there are a slew of updates or you need to change the index mappings, a reindex of data occurs. Reindexing is error prone and does have the potential to take down a cluster. What’s even more worrying is that reindexing can happen at any time.

If you do want to change your mappings, you have more control over the time that reindexing occurs. Elasticsearch has a Reindex API to create a new index and an Aliases API to ensure that there is no downtime when a new index is being created. With the Aliases API, queries are routed to the alias, which points at the old index while the new index is being created. When the new index is ready, the alias is switched to read data from the new index.

With the Aliases API, it is still tricky to keep the new index in sync with the latest data. That’s because Elasticsearch can only write data to one index. So, you will need to configure the data pipeline upstream to double write into the new and the old index.
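The sketch below shows the three moving parts of this procedure without contacting a cluster: the request body for `POST /_reindex`, the request body for `POST /_aliases` that repoints an alias in a single call, and a double-write helper for the upstream pipeline. The index and alias names are placeholders, and `double_write` with its dictionary-backed writers is a hypothetical stand-in for whatever the pipeline uses to write to each index.

```python
def reindex_body(source_index, dest_index):
    """Request body for POST /_reindex: copy documents from one index to another."""
    return {"source": {"index": source_index}, "dest": {"index": dest_index}}

def alias_swap_body(alias, old_index, new_index):
    """Request body for POST /_aliases: repoint the alias in one request."""
    return {
        "actions": [
            {"remove": {"index": old_index, "alias": alias}},
            {"add": {"index": new_index, "alias": alias}},
        ]
    }

def double_write(doc_id, doc, writers):
    """Send the same document to every index while the reindex is running."""
    for write in writers:
        write(doc_id, doc)


# Usage: two dictionaries stand in for the old and new index.
old_index, new_index = {}, {}
writers = [old_index.__setitem__, new_index.__setitem__]
double_write("1", {"price": 18}, writers)

copy_request = reindex_body("products_v1", "products_v2")
swap_request = alias_swap_body("products", "products_v1", "products_v2")
```

Because both alias actions travel in one request, readers querying the alias never see a moment where it points at no index. The double write is what keeps the new index from falling behind during the copy.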

Rockset

Data Ingestion in Rockset

Rockset uses built-in connectors to keep your data in sync with source systems. Rockset’s managed connectors are tuned for each type of data source so that data can be ingested and made queryable within 2 seconds. This avoids manual pipelines that add latency or can only ingest data in micro-batches, say every 15 minutes.

At a high level, Rockset offers built-in connectors to OLTP databases, data streams and data lakes and warehouses. Here’s how they work:

Built-In Connectors to OLTP Databases

Rockset does an initial scan of your tables in your OLTP database and then uses CDC streams to stay in sync with the latest data, with data being made available for querying within 2 seconds of when it was generated by the source system.

Built-In Connectors to Data Streams

With data streams like Kafka or Kinesis, Rockset continuously ingests any new topics using a pull-based integration that requires no tuning in Kafka or Kinesis.

Built-In Connectors to Data Lakes and Warehouses

Rockset constantly monitors for updates and ingests any new objects from data lakes like S3 buckets. We generally find that teams want to join real-time streams with data from their data lakes for real-time analytics.

Updates, Inserts and Deletes in Rockset

Rockset has a distributed architecture optimized to efficiently index data in parallel across multiple machines.

Rockset is a document-sharded database, so it writes entire documents to a single machine, rather than splitting them apart and sending the different fields to different machines. Because of this, it’s quick to add new documents for inserts or to locate existing documents, based on the primary key _id, for updates and deletes.
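A minimal sketch of document sharding follows. The text above does not say how Rockset maps a document to a machine, so the stable hash of `_id` used here is an assumption for illustration, as are the shard count and the `shard_for`, `upsert` and `delete` helpers.

```python
import hashlib

NUM_SHARDS = 4  # assumed value, for illustration only

def shard_for(doc_id, num_shards=NUM_SHARDS):
    """Map a document id to a shard with a stable hash (assumed scheme)."""
    digest = hashlib.sha256(doc_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

# Each dictionary stands in for the documents held by one machine.
shards = [dict() for _ in range(NUM_SHARDS)]

def upsert(doc):
    # The whole document lands on one shard, chosen by its _id.
    shards[shard_for(doc["_id"])][doc["_id"]] = doc

def delete(doc_id):
    # The same hash locates the document again without asking other shards.
    shards[shard_for(doc_id)].pop(doc_id, None)

upsert({"_id": "user-42", "name": "Ada", "city": "London"})
upsert({"_id": "user-42", "name": "Ada", "city": "Paris"})  # update hits the same shard
```

Since the shard is a pure function of `_id`, an insert, update or delete touches exactly one shard in this model, which is the property the paragraph above relies on.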

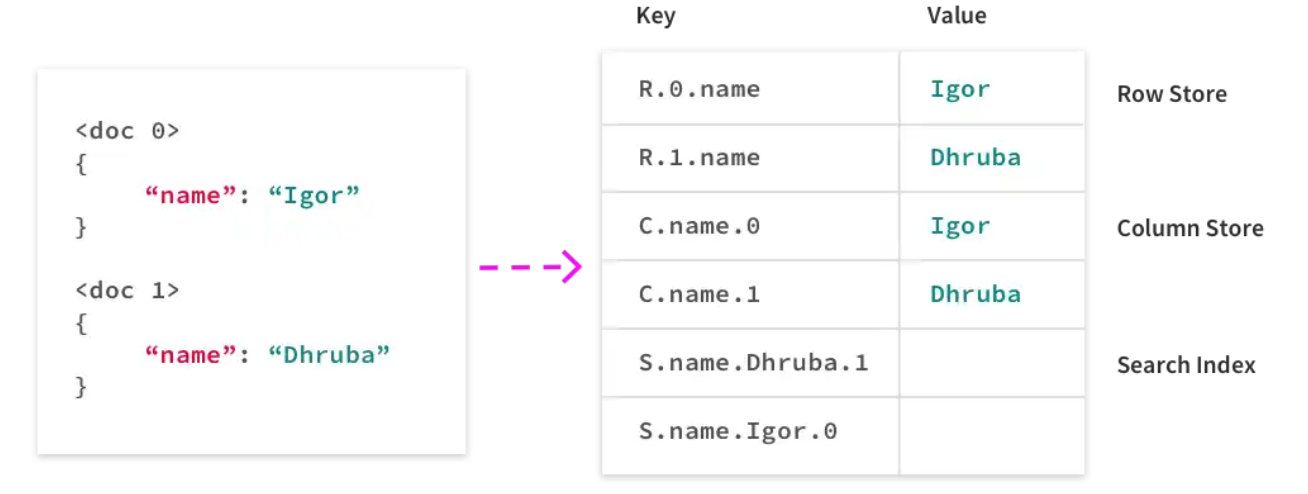

Similar to Elasticsearch, Rockset uses indexes to quickly and efficiently retrieve data when it is queried. Unlike other databases or search engines though, Rockset indexes data at ingest time in a Converged Index, an index that combines a column store, search index and row store. The Converged Index stores all of the values in the fields as a series of key-value pairs. In the example below you can see a document and then how it is stored in Rockset.

Image 3: Rockset’s Converged Index stores all of the values in the fields as a series of key-value pairs in a search index, column store and row store.

Under the hood, Rockset uses RocksDB, a high-performance key-value store that makes mutations trivial. RocksDB supports atomic writes and deletes across different keys. If an update comes in for the name field of a document, exactly 3 keys need to be updated, one per index. Indexes for other fields in the document are unaffected, meaning Rockset can efficiently process updates instead of wasting cycles updating indexes for entire documents every time.
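The field-level update can be illustrated with a toy key-value model. The key layout below (an `R`, `C` or `S` prefix followed by the document id, field and value in different orders) is an assumption made for this sketch and is not taken from Rockset’s implementation. A plain dictionary also lacks the atomic multi-key writes that RocksDB provides.

```python
def keys_for(doc_id, field, value):
    """One key per index for a single field value (illustrative layout)."""
    return {
        ("R", doc_id, field): value,        # row store: document -> field -> value
        ("C", field, doc_id): value,        # column store: field -> document -> value
        ("S", field, value, doc_id): None,  # search index: field + value -> document
    }

def insert(store, doc_id, doc):
    for field, value in doc.items():
        store.update(keys_for(doc_id, field, value))

def update_field(store, doc_id, field, new_value):
    """Rewrite only the keys belonging to one field; return how many were written."""
    old_value = store[("R", doc_id, field)]
    del store[("S", field, old_value, doc_id)]  # drop the stale search entry
    new_keys = keys_for(doc_id, field, new_value)
    store.update(new_keys)
    return len(new_keys)

store = {}
insert(store, 0, {"name": "Igor", "age": 40})
age_keys_before = {k: v for k, v in store.items() if "age" in k}
written = update_field(store, 0, "name", "Ivan")
age_keys_after = {k: v for k, v in store.items() if "age" in k}
```

In this model the update writes three entries, one per index, and the entries for the `age` field are identical before and after. That is the contrast with reindexing a whole document for a one-field change.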

Nested documents and arrays are also first-class data types in Rockset, meaning the same update process applies to them as well, making Rockset well suited for updates on data stored in modern formats like JSON and Avro.

The team at Rockset has also built several custom extensions for RocksDB to handle high writes and heavy reads, a common pattern in real-time analytics workloads. One of those extensions is remote compactions, which introduces a clean separation of query compute and indexing compute to RocksDB Cloud. This enables Rockset to avoid writes interfering with reads. Due to these enhancements, Rockset can scale its writes according to customers’ needs and make fresh data available for querying even as mutations occur in the background.

Updates, Inserts and Deletes Using the Rockset API

Users of Rockset can use the default _id field or specify a specific field to be the primary key. This field enables a document or a part of a document to be overwritten. The difference between Rockset and Elasticsearch is that Rockset can update the value of an individual field without requiring an entire document to be reindexed.

To update existing documents in a collection using the Rockset API, you can make requests to the Patch Documents endpoint. For each existing document you wish to update, you just specify the _id field and a list of patch operations to be applied to the document.

The Rockset API also exposes an Add Documents endpoint so that you can insert data directly into your collections from your application code. To delete existing documents, simply specify the _id fields of the documents you wish to remove and make a request to the Delete Documents endpoint of the Rockset API.
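As a rough sketch of what these three requests carry, the helpers below build the payloads in Python without sending anything. The exact body shapes (a top-level `data` list, a `patch` list of operations with `op`, `path` and `value`) are assumptions made for illustration and should be checked against the Rockset API reference. The function names are hypothetical.

```python
def patch_request(doc_id, operations):
    """Assumed payload for Patch Documents: an _id plus a list of patch operations."""
    return {"data": [{"_id": doc_id, "patch": list(operations)}]}

def add_request(docs):
    """Assumed payload for Add Documents: the documents to insert."""
    return {"data": list(docs)}

def delete_request(doc_ids):
    """Assumed payload for Delete Documents: only the _id of each document."""
    return {"data": [{"_id": doc_id} for doc_id in doc_ids]}

# Change a single field of one document; the other fields are not resent.
patch = patch_request(
    "user-42",
    [{"op": "replace", "path": "/city", "value": "Paris"}],
)
added = add_request([{"_id": "user-43", "name": "Grace", "city": "Boston"}])
removed = delete_request(["user-42", "user-43"])
```

Note how the patch payload names only the `_id` and the field being changed, which matches the field-level update behavior described above.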

Handling Replicas in Rockset

Unlike in Elasticsearch, only one replica in Rockset does the indexing and compaction using RocksDB remote compactions. This reduces the amount of CPU required for indexing, especially when multiple replicas are being used for durability.

Reindexing in Rockset

At ingest time in Rockset, you can use an ingest transformation to specify the desired data transformations to apply to your raw source data. If you wish to change the ingest transformation at a later date, you will need to reindex your data.

That said, Rockset enables schemaless ingest and dynamically types the values of every field of data. If the size and shape of the data or queries change, Rockset will continue to be performant and will not require data to be reindexed.

Rockset can scale to hundreds of terabytes of data without ever needing to be reindexed. This goes back to the sharding strategy of Rockset. When the compute that a customer allocates in their Virtual Instance increases, a subset of shards is shuffled to achieve a better distribution across the cluster, allowing for more parallelized, faster indexing and query execution. As a result, reindexing does not need to occur in these scenarios.

Conclusion

Elasticsearch was designed for log analytics where data is not being frequently updated, inserted or deleted. Over time, teams have expanded their use of Elasticsearch, often using it as a secondary data store and indexing engine for real-time analytics on constantly changing transactional data. This can be a costly endeavor, especially for teams optimizing for real-time ingestion of data, and it can involve a considerable amount of management overhead.

Rockset, on the other hand, was designed for real-time analytics and to make new data available for querying within 2 seconds of when it was generated. To solve for this use case, Rockset supports in-place inserts, updates and deletes, saving on compute and limiting the use of reindexing of documents. Rockset also recognizes the management overhead of connectors and ingestion and takes a platform approach, incorporating real-time connectors into its cloud offering.

Overall, we’ve seen companies that migrate from Elasticsearch to Rockset for real-time analytics save 44% just on their compute bill. Join the wave of engineering teams switching from Elasticsearch to Rockset in days. Start your free trial today.
