
The challenges

Customer expectations and the corresponding demands on applications have never been higher. Users expect applications to be fast, reliable, and available. Further, data is king, and users want to be able to slice and dice aggregated data as needed to find insights. Users don't want to wait for data engineers to provision new indexes or build new ETL chains. They want unfettered access to the freshest data available.

But handling all of your application needs is a tall task for any single database. For the database, optimizing for frequent, low-latency operations on individual records is different from optimizing for less-frequent aggregations or heavy filtering across many records. Many times, we try to handle both patterns with the same database and deal with the inconsistent performance as our application scales. We think we are optimizing for minimal effort or cost, when in fact we are doing the opposite. Running analytics on an OLTP database usually requires that we overprovision the database to account for peaks in traffic. This ends up costing a lot of money and usually fails to provide a pleasing end user experience.

In this walkthrough, we'll see how to handle the high demands of users with both of these access patterns. We'll be building a financial application in which users are recording transactions and viewing recent transactions while also wanting complex filtering or aggregations on their past transactions.

A hybrid approach

To handle our application needs, we'll be using Amazon DynamoDB with Rockset. DynamoDB will handle our core transaction access patterns: recording transactions plus providing a feed of recent transactions for users to browse. Rockset will supplement DynamoDB to handle our data-heavy, "delightful" access patterns. We'll let our users filter by time, merchant, category, or other fields to find the relevant transactions, or perform powerful aggregations to view trends in spending over time.

As we work through these patterns, we'll see how each of these systems is suited to the job at hand. DynamoDB excels at core OLTP operations: reading or writing an individual item, or fetching a range of sequential items based on known filters. Because of the way it partitions data based on the primary key, DynamoDB is able to provide consistent performance for these types of queries at any scale.

Conversely, Rockset excels at continuous ingestion of large amounts of data and at employing multiple indexing strategies on that data to provide highly selective filtering, real-time or query-time aggregations, and other patterns that DynamoDB can't handle easily.

As we work through this example, we'll learn both the fundamental concepts underlying the two systems and the practical steps to accomplish our goals. You can follow along with the application using the GitHub repo.

Implementing core features with DynamoDB

We will start this walkthrough by implementing the core features of our application. This is a common starting point for any application, as you build the standard "CRUDL" operations to provide the ability to manipulate individual records and list a set of related records.

For an e-commerce application, this would be the functionality to place an order and view previous orders. For a social media application, this would be creating posts, adding friends, or viewing the people you follow. This functionality is typically implemented by databases that specialize in online transactional processing (OLTP) workflows that emphasize many concurrent operations against a small number of rows.

For this example, we are building a business finance application where a user can make and receive payments, as well as view the history of their transactions.

The example will be intentionally simplified for this walkthrough, but you can think of three core access patterns for our application:

- Record transaction, which will store a record of a payment made or received by the business;

- View transactions by date range, which will allow users to see the most recent payments made and received by a business; and

- View individual transaction, which will allow a user to drill into the specifics of a single transaction.

Each of these access patterns is a critical, high-volume access pattern. We will constantly be recording transactions for users, and the transaction feed will be the first view when they open the application. Further, each of these access patterns will use known, consistent parameters to fetch the relevant record(s).

We'll use DynamoDB to handle these access patterns. DynamoDB is a NoSQL database provided by AWS. It's a fully managed database, and it has growing popularity in both high-scale applications and serverless applications.

One of DynamoDB's most distinctive features is how it provides consistent performance at any scale. Whether your table is 1 megabyte or 1 petabyte, you should see the same response time for your operations. This is a desirable quality for core OLTP use cases like the ones we're implementing here. It is a great and valuable engineering achievement, but it is important to understand that it was achieved by being selective about the kinds of queries that will perform well.

DynamoDB is able to provide this consistent performance through two core design decisions. First, each record in your DynamoDB table must include a primary key. This primary key is made up of a partition key as well as an optional sort key. The second key design decision for DynamoDB is that the API heavily enforces the use of the primary key (more on this later).

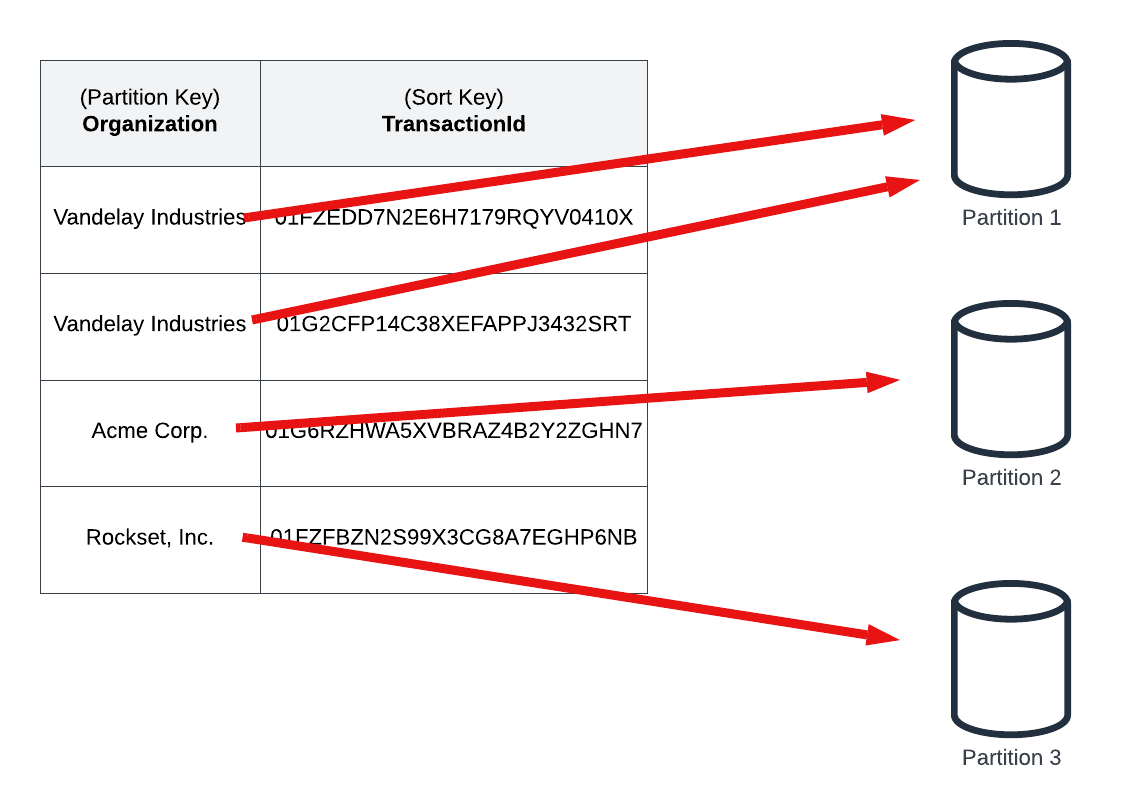

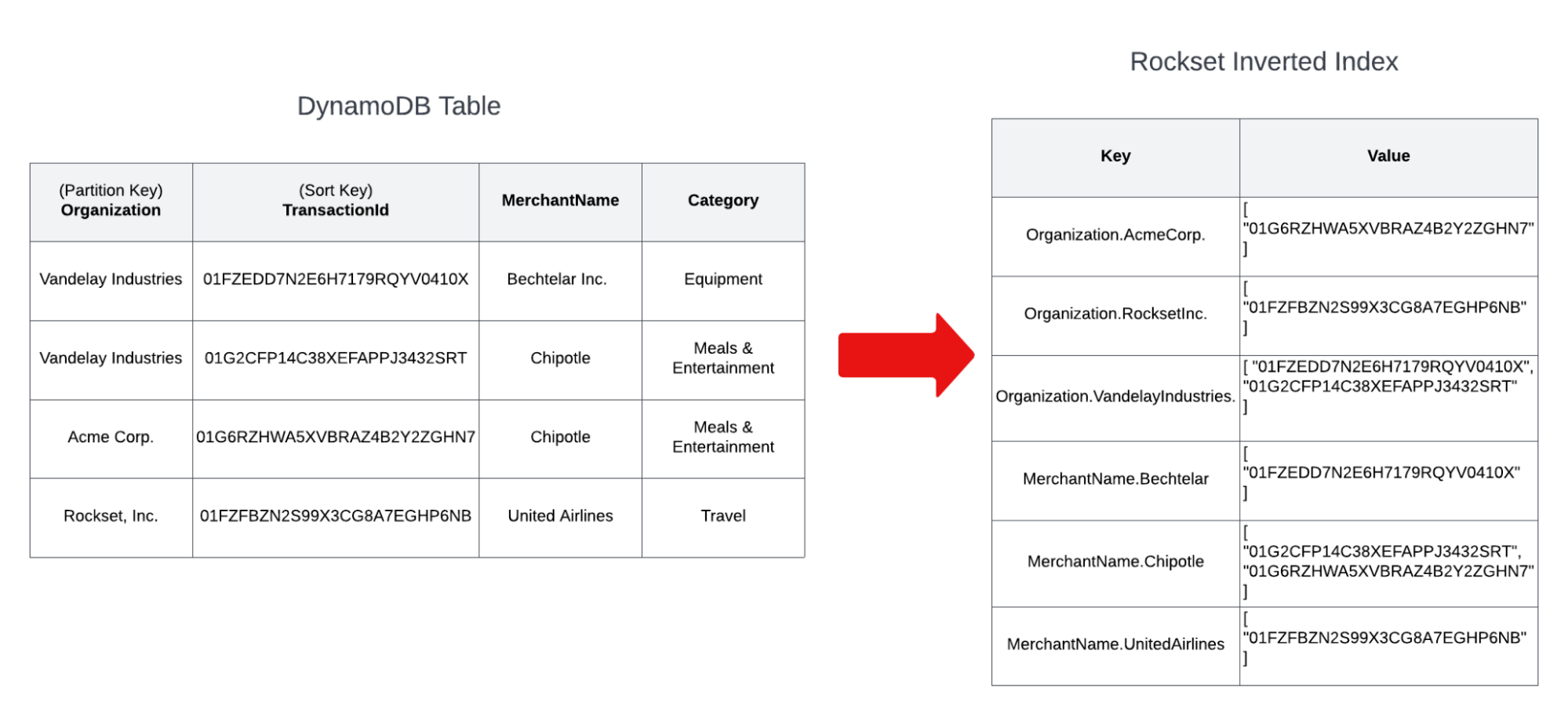

In the accompanying image, we have some sample transaction data in our FinTech application. Our table uses a partition key of the organization name in our application, plus a ULID-based sort key that provides the uniqueness characteristics of a UUID plus sortability by creation time, which allows us to make time-based queries.
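To make the sort key idea concrete, here is a minimal sketch of a ULID-style identifier: a 10-character timestamp prefix followed by 16 random characters, both in Crockford base32. The function names are hypothetical and this is not the sample application's code; a real application would normally rely on an existing ULID library.

```javascript
// Crockford base32 alphabet used by ULIDs (no I, L, O, or U).
const ALPHABET = "0123456789ABCDEFGHJKMNPQRSTVWXYZ";

// Encode a millisecond timestamp as 10 base32 characters, most significant first.
function encodeTime(ms) {
  let out = "";
  for (let i = 0; i < 10; i++) {
    out = ALPHABET[ms % 32] + out;
    ms = Math.floor(ms / 32);
  }
  return out;
}

// 16 random base32 characters. Math.random keeps this sketch dependency-free;
// a real generator should use a cryptographically secure source.
function encodeRandom() {
  let out = "";
  for (let i = 0; i < 16; i++) {
    out += ALPHABET[Math.floor(Math.random() * 32)];
  }
  return out;
}

// Hypothetical helper: timestamp prefix plus random suffix, 26 characters total.
function ulidLike(ms = Date.now()) {
  return encodeTime(ms) + encodeRandom();
}

const earlier = ulidLike(Date.UTC(2021, 0, 1));
const later = ulidLike(Date.UTC(2021, 5, 1));
console.log(earlier < later); // the later transaction sorts after the earlier one
```

Because the timestamp is a fixed-width prefix, plain string comparison orders the keys by creation time, which is what lets a sort key double as a time index.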

The records in our table include other attributes, like merchant name, category, and amount, that are useful in our application but aren't as important to DynamoDB's underlying architecture. The important part is in the primary key, and specifically the partition key.

Under the hood, DynamoDB will split your data into multiple storage partitions, each containing a subset of the data in your table. DynamoDB uses the partition key element of the primary key to assign a given record to a particular storage partition.

As the amount of data in your table or traffic against your table increases, DynamoDB will add partitions as a way to horizontally scale your database.

As mentioned above, the second key design decision for DynamoDB is that the API heavily enforces the use of the primary key. Almost all API actions in DynamoDB require at least the partition key of your primary key. Because of this, DynamoDB is able to quickly route any request to the proper storage partition, no matter the number of partitions and total size of the table.
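The routing idea can be sketched with a toy model. DynamoDB's actual hash function and partition management are internal to the service, so the FNV-1a hash, the fixed partition count, and the function names below are all assumptions made for illustration.

```javascript
// Illustrative stand-in for DynamoDB's internal hash (32-bit FNV-1a).
function hashKey(key) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash;
}

// Map a partition key to one of `partitionCount` storage partitions.
function partitionFor(partitionKey, partitionCount) {
  return hashKey(partitionKey) % partitionCount;
}

// Toy storage: four partitions, each holding a subset of the items.
const partitions = Array.from({ length: 4 }, () => []);

function putItem(item) {
  partitions[partitionFor(item.organization, partitions.length)].push(item);
}

function queryByOrganization(organization) {
  // Only one partition is consulted, however many partitions exist.
  const partition = partitions[partitionFor(organization, partitions.length)];
  return partition.filter((item) => item.organization === organization);
}

putItem({ organization: "Vandelay Industries", amount: 12.5 });
putItem({ organization: "Vandelay Industries", amount: 300 });
putItem({ organization: "Acme Corp", amount: 8 });
console.log(queryByOrganization("Vandelay Industries").length); // 2
```

The point of the sketch is that the lookup cost depends on the size of one partition, not on the size of the whole table, which is why requiring the partition key keeps performance flat as data grows.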

With these two tradeoffs, there are necessarily limitations in how you use DynamoDB. You must carefully plan and design for your access patterns upfront, as your primary key must be involved in your access patterns. Changing your access patterns later can be difficult and may require some manual migration steps.

When your use cases fall within DynamoDB's core competencies, you will reap the benefits. You'll receive consistent, predictable performance no matter the scale, and you won't see long-term degradation of your application over time. Further, you'll get a fully managed experience with low operational burden, allowing you to focus on what matters to the business.

The core operations in our example fit perfectly with this model. When retrieving a feed of transactions for an organization, we will have the organization ID available in our application, which allows us to use the DynamoDB Query operation to fetch a contiguous set of records with the same partition key. To retrieve more details on a specific transaction, we will have both the organization ID and the transaction ID available to make a DynamoDB GetItem request to fetch the desired item.
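As a rough sketch of what those two requests might look like, the helpers below build the parameter objects for a Query and a GetItem call in the plain-JavaScript shape accepted by the AWS SDK document client. The table name and the attribute names `organization` and `transactionId` are assumptions for illustration; the sample repository may name them differently.

```javascript
const TABLE_NAME = "Transactions"; // hypothetical table name

// Parameters for a Query that returns the newest transactions for one organization.
function buildFeedQuery(organization, limit = 20) {
  return {
    TableName: TABLE_NAME,
    KeyConditionExpression: "#org = :org",
    ExpressionAttributeNames: { "#org": "organization" },
    ExpressionAttributeValues: { ":org": organization },
    ScanIndexForward: false, // descending sort key order, so newest first
    Limit: limit,
  };
}

// Parameters for a GetItem that fetches a single transaction by its full primary key.
function buildGetTransaction(organization, transactionId) {
  return {
    TableName: TABLE_NAME,
    Key: { organization, transactionId },
  };
}

console.log(buildFeedQuery("Vandelay Industries"));
console.log(buildGetTransaction("Vandelay Industries", "example-transaction-id"));
```

Both requests name the partition key, so each can be routed to a single storage partition. With a time-sortable sort key, `ScanIndexForward: false` is what turns the Query into a "most recent first" feed.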

You can see these operations in action with the sample application. Follow the instructions to deploy the application and seed it with sample data. Then, make HTTP requests to the deployed service to fetch the transaction feed for individual users. These operations will be fast and efficient regardless of the number of concurrent requests or the size of your DynamoDB table.

Supplementing DynamoDB with Rockset

So far, we've used DynamoDB to handle our core access patterns. DynamoDB is great for these patterns, as its key-based partitioning will provide consistent performance at any scale.

However, DynamoDB is not great at handling other access patterns. DynamoDB does not allow you to efficiently query by attributes other than the primary key. You can use DynamoDB's secondary indexes to reindex your data by additional attributes, but this can still be problematic if you have many different attributes that may be used to index your data.

Additionally, DynamoDB does not provide any aggregation functionality out of the box. You can calculate your own aggregates using DynamoDB, but it may be with reduced flexibility or with unoptimized read consumption as compared to a solution that designs for aggregation up front.

To handle these patterns, we will supplement DynamoDB with Rockset.

Rockset is best thought of as a secondary set of indexes on your data. Rockset uses only these indexes at query time and does not project any load back into DynamoDB during a read. Rather than individual, transactional updates from your application clients, Rockset is designed for continuous, streaming ingestion from your primary data store. It has direct connectors for a number of primary data stores, including DynamoDB, MongoDB, Kafka, and many relational databases.

As Rockset ingests data from your primary database, it indexes your data in a Converged Index, which borrows concepts from a row index, an inverted index, and a columnar index. Additional indexes, such as range, type, and geospatial, are automatically created based on the data types ingested. We'll discuss the specifics of these indexes below, but this Converged Index allows for more flexible access patterns on your data.

This is the core concept behind Rockset: it is a secondary index on your data using a fully managed, near-real-time ingestion pipeline from your primary datastore.

Teams have long been extracting data from DynamoDB to insert into another system to handle additional use cases. Before we move into the specifics of how Rockset ingests data from your table, let's briefly discuss how Rockset differs from other options in this space. There are a few core differences between Rockset and other approaches.

Firstly, Rockset is fully managed. Not only are you not required to manage the database infrastructure, but you also don't need to maintain the pipeline to extract, transform, and load data into Rockset. With many other solutions, you're in charge of the "glue" code between your systems. These systems are critical yet failure-prone, as you must defensively guard against any changes in the data structure. Upstream changes can result in downstream pain for those maintaining these systems.

Secondly, Rockset can handle real-time data in a mutable way. With many other systems, you get one or the other. You can choose to perform periodic exports and bulk loads of your data, but this results in stale data between loads. Alternatively, you can stream data into your data warehouse in an append-only fashion, but you can't perform in-place updates on changing data. Rockset is able to handle updates on existing items as quickly and efficiently as it inserts new data and thus can give you a real-time look at your changing data.

Thirdly, Rockset generates its indexes automatically. Other "fully managed" solutions still require you to configure indexes as you need them to support new queries. Rockset's query engine is designed to use one set of indexes to support any and all queries. As you add more and more queries to your system, you do not need to add additional indexes that take up more and more space and computational resources. This also means that ad hoc queries can fully leverage the indexes as well, making them fast without waiting for an administrator to add a bespoke index to support them.

How Rockset ingests data from DynamoDB

Now that we know the basics of what Rockset is and how it helps us, let's connect our DynamoDB table to Rockset. In doing so, we will learn how the Rockset ingestion process works and how it differs from other options.

Rockset has purpose-built connectors for a number of data sources, and the specific connector implementation depends on the specifics of the upstream data source.

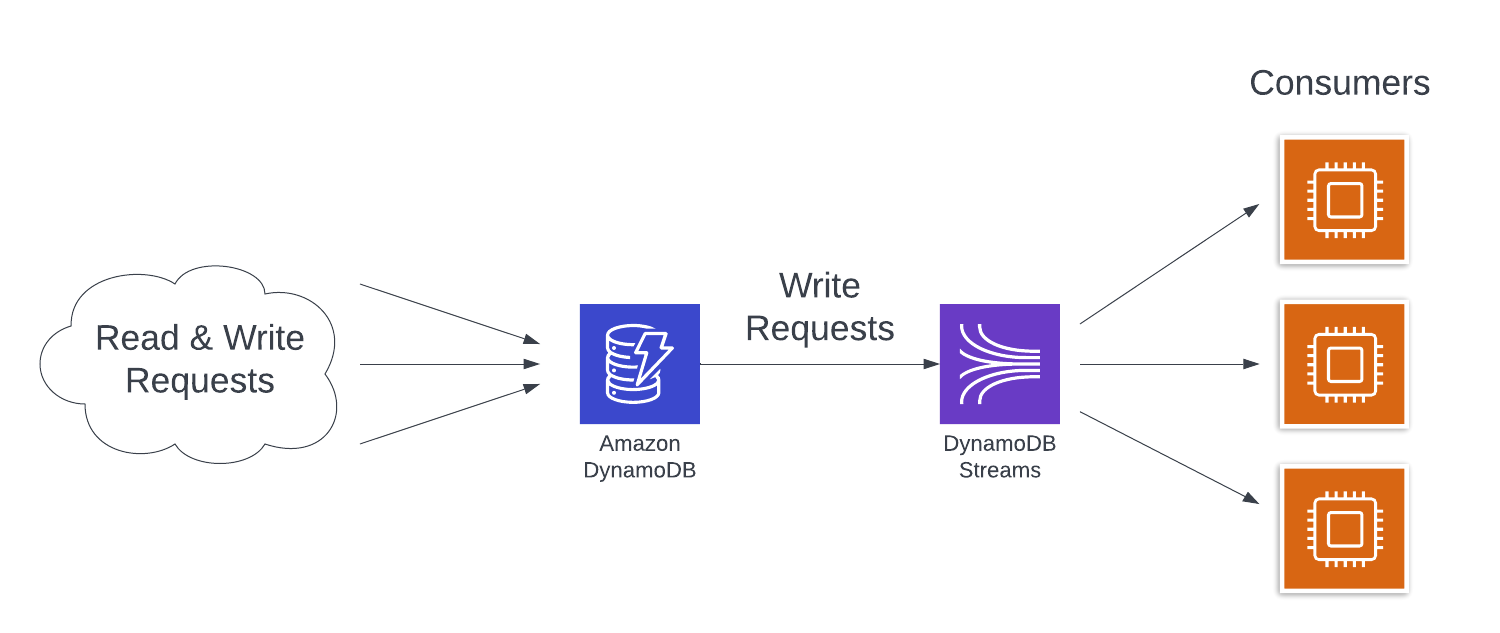

For connecting with DynamoDB, Rockset relies on DynamoDB Streams. DynamoDB Streams is a change data capture feature from DynamoDB in which details of each write operation against a DynamoDB table are recorded in the stream. Consumers of the stream can process these changes in the same order they happened against the table to update downstream systems.

A DynamoDB Stream is great for keeping Rockset up to date with a DynamoDB table in near real time, but it's not the full story. A DynamoDB Stream only contains records of write operations that occurred after the Stream was enabled on the table. Further, a DynamoDB Stream retains records for only 24 hours. Operations that occurred before the stream was enabled or more than 24 hours ago will not be present in the stream.

But Rockset needs not only the most recent data, but all of the data in your database in order to answer your queries correctly. To handle this, it does an initial bulk export (using either a DynamoDB Scan or an export to S3, depending on your table size) to grab the initial state of your table.

Thus, Rockset's DynamoDB connection process has two parts:

- An initial, bootstrapping process to export your full table for ingestion into Rockset;

- A subsequent, continuous process to consume updates from your DynamoDB Stream and update the data in Rockset.
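The two-part process can be sketched as a toy replica that is first loaded from a snapshot and then kept current by applying change events in order. This is a conceptual illustration, not Rockset's implementation. The `INSERT`, `MODIFY`, and `REMOVE` event names match DynamoDB Streams, but the flattened record shape (`id`, `newImage`) is a simplification assumed here; real stream records nest keys and images under a `dynamodb` field.

```javascript
// Part 1: bootstrap the replica from a full export of the table.
function bootstrap(snapshotItems) {
  const replica = new Map();
  for (const item of snapshotItems) {
    replica.set(item.id, item);
  }
  return replica;
}

// Part 2: apply one change record; callers must preserve stream order.
function applyChange(replica, change) {
  switch (change.eventName) {
    case "INSERT":
    case "MODIFY":
      replica.set(change.id, change.newImage);
      break;
    case "REMOVE":
      replica.delete(change.id);
      break;
    default:
      throw new Error(`Unknown event: ${change.eventName}`);
  }
}

const replica = bootstrap([
  { id: "t1", amount: 10 },
  { id: "t2", amount: 20 },
]);

const changes = [
  { eventName: "MODIFY", id: "t1", newImage: { id: "t1", amount: 15 } },
  { eventName: "INSERT", id: "t3", newImage: { id: "t3", amount: 30 } },
  { eventName: "REMOVE", id: "t2" },
];
for (const change of changes) {
  applyChange(replica, change);
}
console.log([...replica.keys()]); // t1 and t3 remain; t2 was removed
```

Updates and deletes are applied in place, which is the "mutable" behavior described earlier: the replica reflects the current state of each item rather than an append-only history.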

Notice that both of these processes are fully managed by Rockset and transparent to you as a user. You won't be in charge of maintaining these pipelines and responding to alerts if there's an error.

Further, if you choose the S3 export method for the initial ingestion process, neither of the Rockset ingestion processes will consume read capacity units from your main table. Thus, Rockset won't take consumption away from your application use cases or affect production availability.

Application: Connecting DynamoDB to Rockset

Before moving on to using Rockset in our application, let's connect Rockset to our DynamoDB table.

First, we need to create a new integration between Rockset and our table. We'll walk through the high-level steps below, but you can find more detailed step-by-step instructions in the application repository if needed.

In the Rockset console, navigate to the new integration wizard to start this process.

In the integration wizard, choose Amazon DynamoDB as your integration type. Then, click Start to move to the next step.

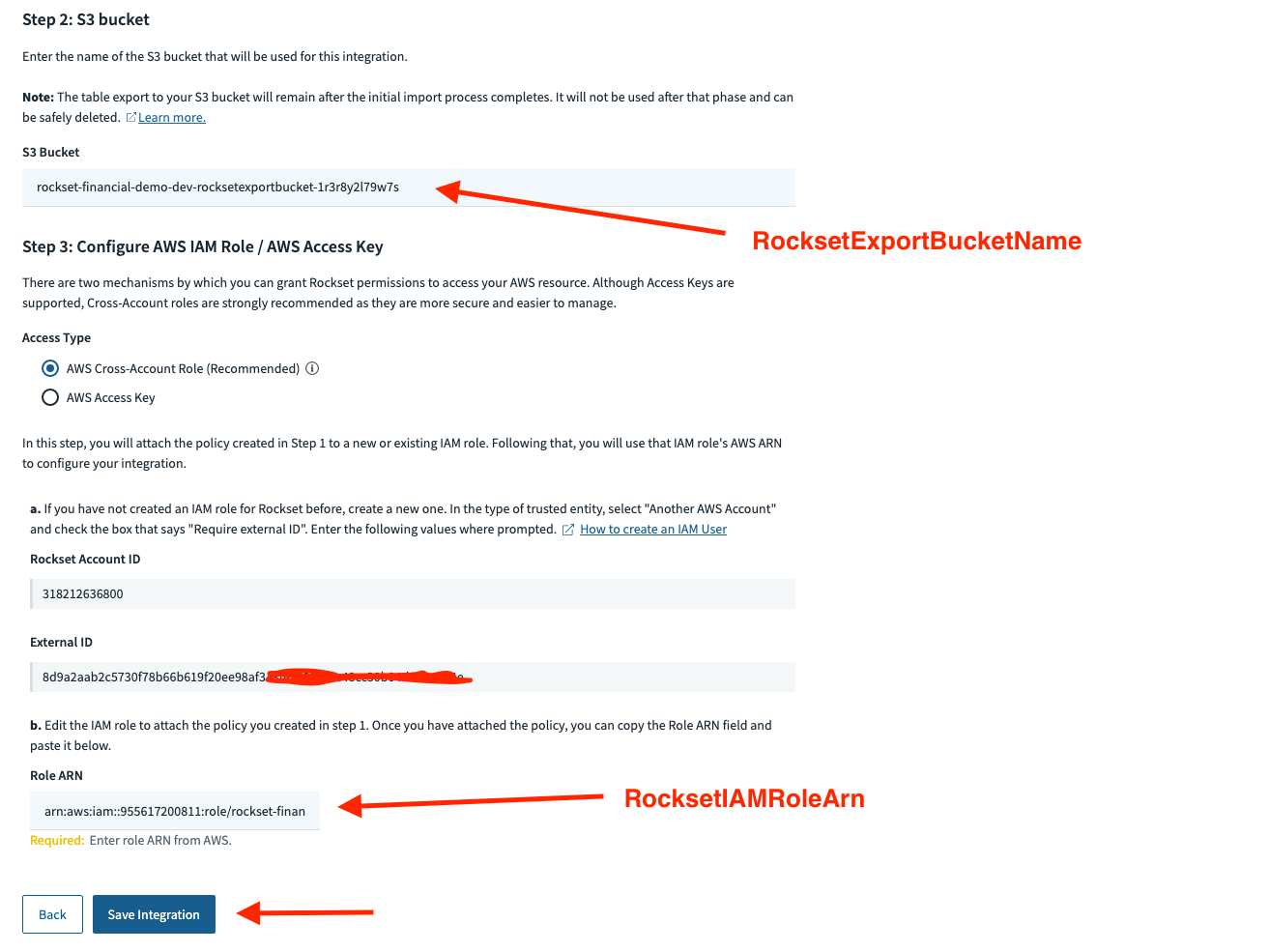

The DynamoDB integration wizard has step-by-step instructions for authorizing Rockset to access your DynamoDB table. This requires creating an IAM policy, an IAM role, and an S3 bucket for your table export.

You can follow those instructions to create the resources manually if you prefer. In the serverless world, we prefer to create things via infrastructure-as-code as much as possible, and that includes these supporting resources.

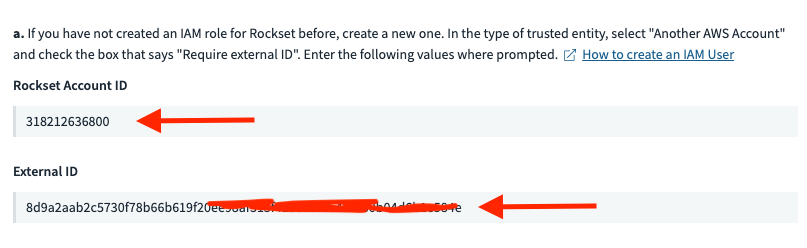

The example repository includes the infrastructure-as-code necessary to create the Rockset integration resources. To use these, first find the Rockset Account ID and External ID values at the bottom of the Rockset integration wizard.

Copy and paste these values into the relevant sections of the custom block of the serverless.yml file. Then, uncomment the resources on lines 71 to 122 of the serverless.yml to create these resources.

Redeploy your application to create these new resources. In the outputs from the deploy, copy and paste the S3 bucket name and the IAM role ARN into the appropriate places in the Rockset console.

Then, click the Save Integration button to save your integration.

After you have created your integration, you will need to create a Rockset collection from the integration. Navigate to the collection creation wizard in the Rockset console and follow the steps to use your integration to create a collection. You can also find step-by-step instructions to create a collection in the application repository.

Once you have completed this connection, inserts, updates, or deletes to data in DynamoDB will generally be reflected in Rockset's index and available for querying in less than 2 seconds, given a properly sized set of instances.

Using Rockset for complex filtering

Now that we have connected Rockset to our DynamoDB table, let's see how Rockset can enable new access patterns on our existing data.

Recall from our core features section that DynamoDB is heavily focused on your primary keys. You must use your primary key to efficiently access your data. Accordingly, we structured our table to use the organization name and the transaction time in our primary keys.

This structure works for our core access patterns, but we may want to provide a more flexible way for users to browse their transactions. There are a number of attributes (category, merchant name, amount, etc.) that would be useful in filtering.

We could use DynamoDB's secondary indexes to enable filtering on more attributes, but that's still not a great fit here. DynamoDB's primary key structure does not easily allow for flexible querying that involves combinations of many optional attributes. You could have a secondary index for filtering by merchant name and date, but you would need another secondary index if you wanted to allow filtering by merchant name, date, and amount. An access pattern that filters on category would require a third secondary index.

Rather than deal with that complexity, we'll lean on Rockset here.

We saw before that Rockset uses a Converged Index to index your data in multiple ways. One of those ways is an inverted index. With an inverted index, Rockset indexes each attribute directly.

Notice how this index is organized. Each attribute name and value is used as the key of the index, and the value is a list of document IDs that include the corresponding attribute name and value. The keys are constructed so that their natural sort order can support range queries efficiently.

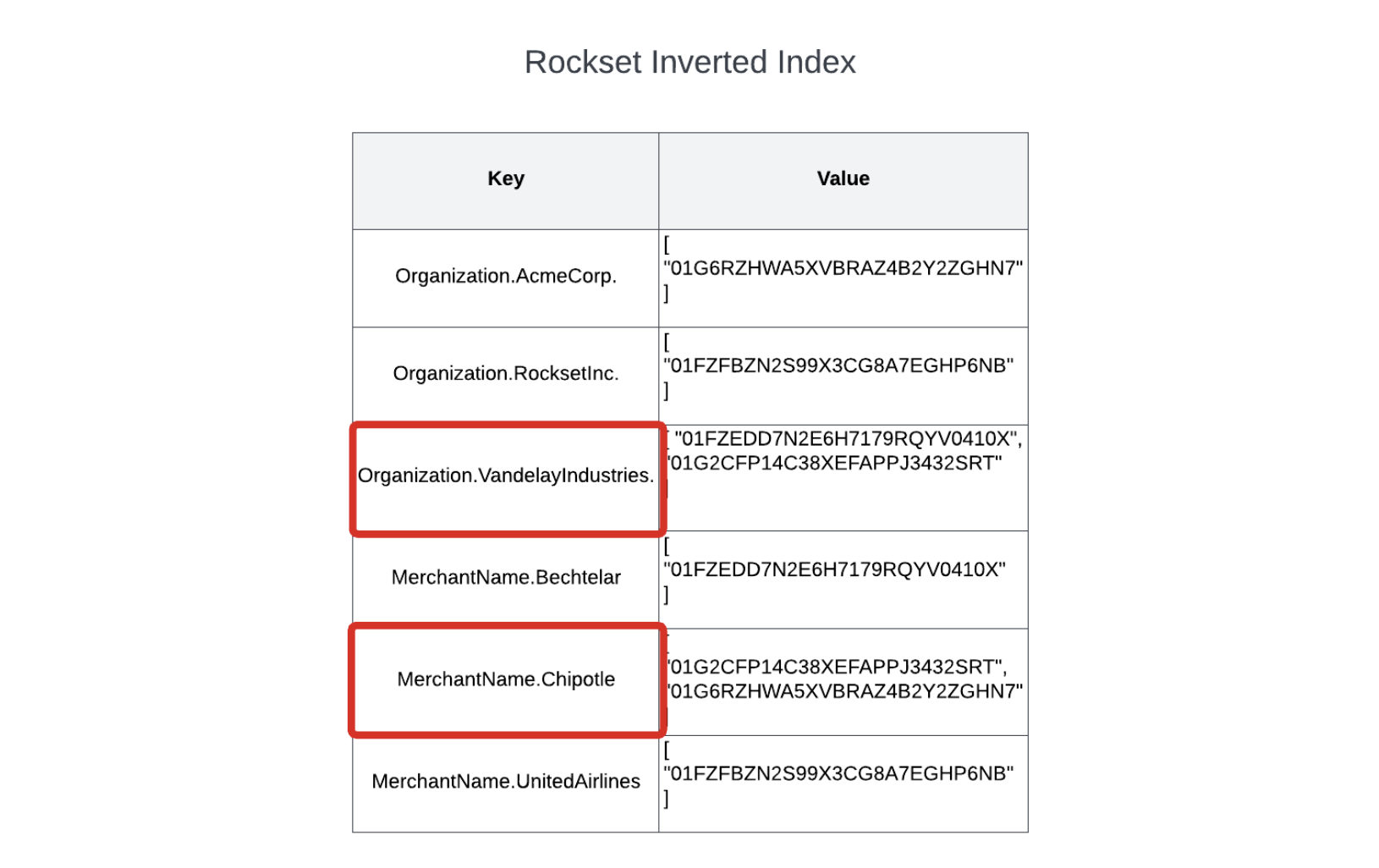

An inverted index is great for queries that have selective filter conditions. Imagine we want to allow our users to filter their transactions to find those that match certain criteria. Someone in the Vandelay Industries organization is interested in how many times they've ordered Chipotle recently.

You could find this with a query as follows:

    SELECT *
    FROM transactions
    WHERE organization = 'Vandelay Industries'
    AND merchant_name = 'Chipotle'

As a result of we’re doing selective filters on the client and service provider identify, we are able to use the inverted index to rapidly discover the matching paperwork.

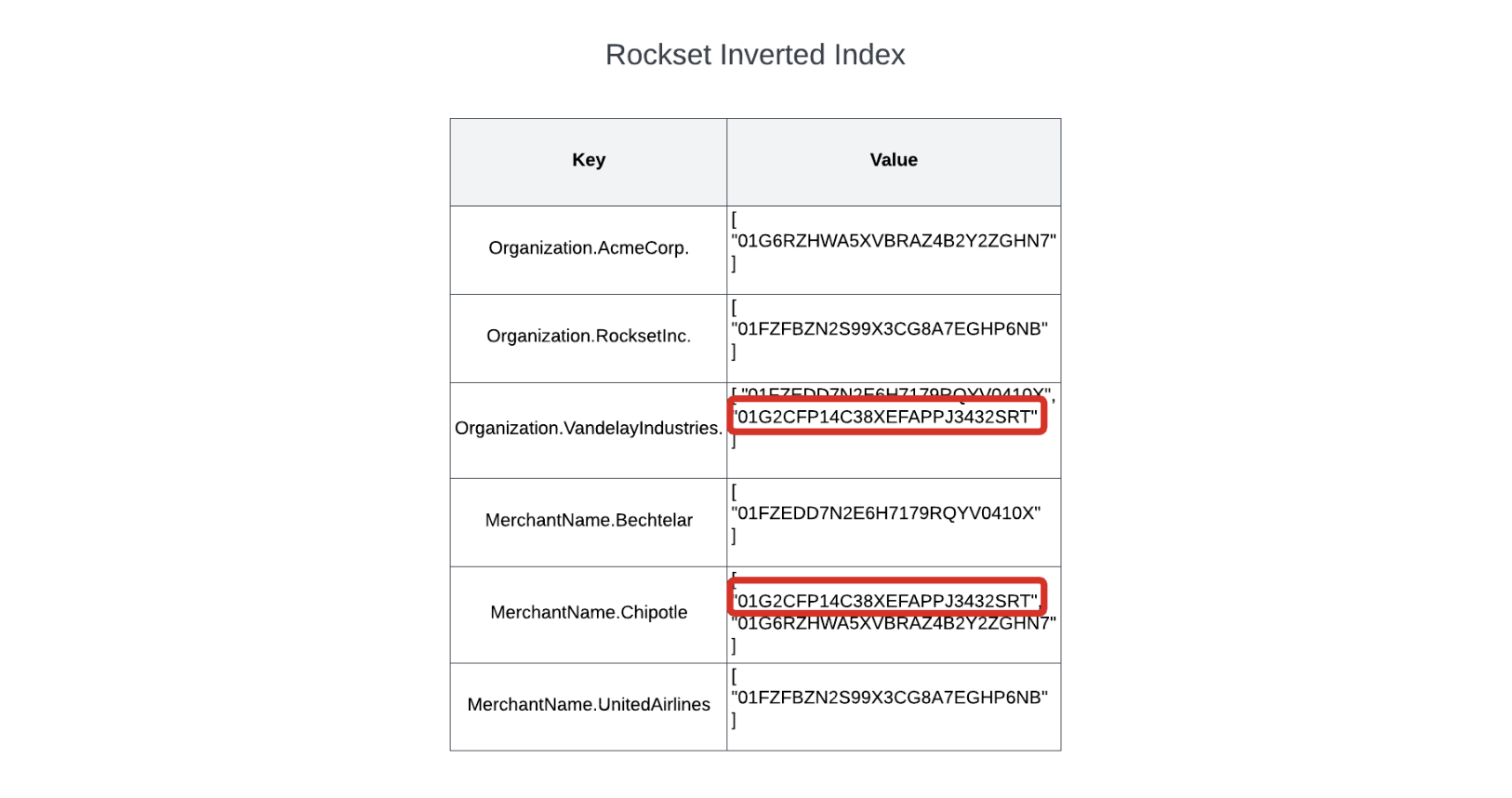

Rockset will lookup each attribute identify and worth pairs within the inverted index to search out the lists of matching paperwork.

As soon as it has these two lists, it may possibly merge them to search out the set of information that match each units of situations, and return the outcomes again to the consumer.
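The lookup-and-merge steps can be sketched with a small in-memory inverted index. This is a conceptual toy, not Rockset's storage format: the key encoding (`field=value`), the sample documents, and the function names are all assumptions made for illustration.

```javascript
// Build a map from "field=value" to the list of document IDs containing that pair.
function buildInvertedIndex(docs) {
  const index = new Map();
  for (const doc of docs) {
    for (const [field, value] of Object.entries(doc)) {
      if (field === "id") continue;
      const key = `${field}=${value}`;
      if (!index.has(key)) index.set(key, []);
      index.get(key).push(doc.id);
    }
  }
  return index;
}

// Keep only the IDs that appear in both posting lists.
function intersect(a, b) {
  const inB = new Set(b);
  return a.filter((id) => inB.has(id));
}

// Look up one posting list per condition, then merge them.
function find(index, conditions) {
  const lists = Object.entries(conditions).map(
    ([field, value]) => index.get(`${field}=${value}`) || []
  );
  if (lists.length === 0) return [];
  return lists.reduce((acc, list) => intersect(acc, list));
}

const docs = [
  { id: 1, organization: "Vandelay Industries", merchant_name: "Chipotle" },
  { id: 2, organization: "Vandelay Industries", merchant_name: "Staples" },
  { id: 3, organization: "Acme Corp", merchant_name: "Chipotle" },
  { id: 4, organization: "Vandelay Industries", merchant_name: "Chipotle" },
];
const index = buildInvertedIndex(docs);
const matches = find(index, {
  organization: "Vandelay Industries",
  merchant_name: "Chipotle",
});
console.log(matches); // [ 1, 4 ]
```

Neither condition needs a purpose-built index: every field is indexed the same way, so adding a third filter only adds one more posting list to intersect.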

Just as DynamoDB's partition-based indexing is efficient for operations that use the partition key, Rockset's inverted index gives you efficient lookups on any field in your data set, even on attributes of embedded objects or on values inside embedded arrays.

Application: Using the Rockset API in your application

Now that we know how Rockset can efficiently execute selective queries against our dataset, let's walk through the practical aspects of integrating Rockset queries into our application.

Rockset exposes RESTful services that are protected by an authorization token. SDKs are also available for popular programming languages. This makes it a great fit for integrating with serverless applications because you don't need to set up complicated private networking configuration to access your database.

In order to interact with the Rockset API in our application, we will need a Rockset API key. You can create one in the API keys section of the Rockset console. Once you've done so, copy its value into your serverless.yml file and redeploy to make it available to your application.

Side note: For simplicity, we're using this API key as an environment variable. In a real application, you should use something like Parameter Store or AWS Secrets Manager to store your secret and avoid environment variables.

Look at our TransactionService class to see how we interact with the Rockset API. The class initialization takes in a Rockset client object that will be used to make calls to Rockset.

In the filterTransactions method in our service class, we have the following query to interact with Rockset:

    const response = await this._rocksetClient.queries.query({
      sql: {
        // Named parameters (:organization, :category, ...) are bound below.
        query: `
          SELECT *
          FROM Transactions
          WHERE organization = :organization
          AND category = :category
          AND amount BETWEEN :minAmount AND :maxAmount
          ORDER BY transactionTime DESC
          LIMIT 20`,
        parameters: [
          {
            name: "organization",
            type: "string",
            value: organization,
          },
          {
            name: "category",
            type: "string",
            value: category,
          },
          {
            name: "minAmount",
            type: "float",
            value: minAmount,
          },
          {
            name: "maxAmount",
            type: "float",
            value: maxAmount,
          },
        ],
      },
    });

There are two things to note about this interaction. First, we are using named parameters in our query when handling input from users. This is a common practice with SQL databases to avoid SQL injection attacks.
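One way to keep parameter handling consistent is a small helper that turns a plain object of user input into the `name`/`type`/`value` entries used in the query above. The helper name is hypothetical and it only covers the two types that appear in this walkthrough (`string` and `float`); it is a sketch, not part of the Rockset SDK.

```javascript
// Hypothetical helper: convert { name: value } pairs into query parameter entries.
function toRocksetParameters(values) {
  return Object.entries(values).map(([name, value]) => {
    if (typeof value === "string") {
      return { name, type: "string", value };
    }
    if (typeof value === "number" && Number.isFinite(value)) {
      return { name, type: "float", value };
    }
    // Reject anything else rather than guessing how it should be bound.
    throw new TypeError(`Unsupported parameter type for "${name}"`);
  });
}

const parameters = toRocksetParameters({
  organization: "Vandelay Industries",
  category: "Food",
  minAmount: 10,
  maxAmount: 50,
});
console.log(parameters);
```

The user input never becomes part of the SQL text. Even a value such as `'; DROP TABLE ...` is passed as an ordinary string parameter and compared against the column, not executed.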

Second, the SQL code is intermingled with our application code, and it can be difficult to track over time. While this can work, there is a better way. As we apply our next use case, we'll look at how to use Rockset Query Lambdas in our application.

Using Rockset for aggregation

To this point, we've reviewed the indexing strategies of DynamoDB and Rockset in discussing how the database can find an individual record or set of records that match a particular filter predicate. For example, we saw that DynamoDB pushes you towards using a primary key to find a record, whereas Rockset's inverted index can efficiently find records using highly selective filter conditions.

In this final section, we'll switch gears a bit to focus on data layout rather than indexing directly. In thinking about data layout, we'll contrast two approaches: row-based vs. column-based.

Row-based databases, as the name implies, arrange their data on disk in rows. Most relational databases, like PostgreSQL and MySQL, are row-based databases. So are many NoSQL databases, like DynamoDB, even if their records aren't technically "rows" in the relational database sense.

Row-based databases are great for the access patterns we've looked at so far. When fetching an individual transaction by its ID or a set of transactions according to some filter conditions, we generally want all of the fields to come back for each of the transactions. Because all the fields of the record are stored together, it generally takes a single read to return the record. (Note: some nuance on this coming in a bit.)

Aggregation is a different story altogether. With aggregation queries, we want to calculate an aggregate: a count of all transactions, a sum of the transaction totals, or an average spend by month for a set of transactions.

Returning to the user from the Vandelay Industries organization, imagine they want to look at the last three months and find the total spend by category for each month. A simplified version of that query would look as follows:

    SELECT
      category,
      EXTRACT(month FROM transactionTime) AS month,
      sum(amount) AS amount
    FROM transactions
    WHERE organization = 'Vandelay Industries'
    AND transactionTime > CURRENT_TIMESTAMP() - INTERVAL 3 MONTH
    GROUP BY category, month
    ORDER BY category, month DESC

For this query, there could be a large number of records that need to be read to calculate the result. However, notice that we don't need many of the fields for each of our records. We need only four (category, transactionTime, organization, and amount) to determine this result.

Thus, not only do we need to read a lot more records to satisfy this query, but our row-based layout will also read a bunch of fields that are unnecessary to our result.

Conversely, a column-based layout stores data on disk in columns. Rockset's Converged Index uses a columnar index to store data in a column-based layout. In a column-based layout, data is stored together by columns. An individual record is shredded into its constituent columns for indexing.

If my query needs to do an aggregation to sum the "amount" attribute for a large number of records, Rockset can do so by simply scanning the "amount" portion of the columnar index. This vastly reduces the amount of data read and processed as compared to row-based layouts.

Note that, by default, Rockset's columnar index is not going to order the attributes within a column. Because we have user-facing use cases that will operate on a particular customer's data, we would prefer to organize our columnar index by customer to reduce the amount of data to scan while using the columnar index.

Rockset provides data clustering on your columnar index to help with this. With clustering, we can indicate that we want our columnar index to be clustered by the "organization" attribute. This will group all column values by the organization within the columnar indexes. Thus, when Vandelay Industries is doing an aggregation on their data, Rockset's query processor can skip the portions of the columnar index for other customers.
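The effect of a clustered columnar layout can be sketched in a few lines. This toy model shreds rows into per-column arrays sorted by the clustering key, then sums one column over just the contiguous range for one organization. It illustrates the idea only; the sample data and function names are assumptions, and a real engine would locate the range from index metadata, not by scanning the cluster column.

```javascript
const rows = [
  { organization: "Acme Corp", category: "Travel", amount: 120 },
  { organization: "Vandelay Industries", category: "Food", amount: 12.5 },
  { organization: "Vandelay Industries", category: "Travel", amount: 300 },
  { organization: "Acme Corp", category: "Food", amount: 8 },
];

// Shred rows into one array per column, ordered (clustered) by clusterKey.
function toClusteredColumns(rows, clusterKey) {
  const sorted = [...rows].sort((a, b) =>
    a[clusterKey] < b[clusterKey] ? -1 : a[clusterKey] > b[clusterKey] ? 1 : 0
  );
  const columns = {};
  for (const row of sorted) {
    for (const [field, value] of Object.entries(row)) {
      if (!columns[field]) columns[field] = [];
      columns[field].push(value);
    }
  }
  return columns;
}

// Sum the "amount" column for one organization, touching only its range.
function sumAmountFor(columns, organization) {
  const start = columns.organization.indexOf(organization);
  if (start === -1) return { total: 0, valuesRead: 0 };
  const end = columns.organization.lastIndexOf(organization) + 1;
  let total = 0;
  for (let i = start; i < end; i++) {
    total += columns.amount[i];
  }
  return { total, valuesRead: end - start };
}

const columns = toClusteredColumns(rows, "organization");
console.log(sumAmountFor(columns, "Vandelay Industries"));
// { total: 312.5, valuesRead: 2 }
```

Only two values from the `amount` column are read for the aggregation. The `category` column and the other organization's amounts are never touched, which is the saving that columnar layout and clustering each contribute.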

How Rockset's row-based index helps processing

Before we move on to using the columnar index in our application, I want to talk about another aspect of Rockset's Converged Index.

Earlier, I mentioned that row-based layouts were used when retrieving full records and indicated that both DynamoDB and our Rockset inverted-index queries were using these layouts.

That's only partially true. The inverted index has some similarities with a column-based index, as it stores column names and values together for efficient lookups by any attribute. Each index entry includes a pointer to the IDs of the records that include the given column name and value combination. Once the relevant ID or IDs are discovered from the inverted index, Rockset can retrieve the full record using the row index. Rockset uses dictionary encoding and other advanced compression techniques to minimize the data storage size.

Thus, we've now seen how Rockset's Converged Index fits together:

- The column-based index is used for quickly scanning large numbers of values in a particular column for aggregations;

- The inverted index is used for selective filters on any column name and value;

- The row-based index is used to fetch any additional attributes that may be referenced in the projection clause.

Under the hood, Rockset's powerful indexing and querying engine is tracking statistics on your data and generating optimal plans to execute your query efficiently.

Application: Using Rockset Query Lambdas in your application

Let's implement our Rockset aggregation query that uses the columnar index.

For our previous query, we wrote our SQL query directly to the Rockset API. While this is the right thing to do from some highly customizable user interfaces, there is a better option when the SQL code is more static. We would like to avoid maintaining our messy SQL code in the middle of our application logic.

To help with this, Rockset has a feature called Query Lambdas. Query Lambdas are named, versioned, parameterized queries that are registered in the Rockset console. After you have configured a Query Lambda in Rockset, you will receive a fully managed, scalable endpoint for the Query Lambda that you can call with your parameters to be executed by Rockset. Further, you'll even get monitoring statistics for each Query Lambda, so you can track how your Query Lambda is performing as you make changes.

You can learn more about Query Lambdas in the Rockset documentation, but let's set up our first Query Lambda to handle our aggregation query. A full walkthrough can be found in the application repository.

Navigate to the Query Editor section of the Rockset console. Paste the following query into the editor:

    SELECT
        category,
        EXTRACT(
            month
            FROM
                transactionTime
        ) as month,
        EXTRACT(
            year
            FROM
                transactionTime
        ) as year,
        TRUNCATE(sum(amount), 2) AS amount
    FROM
        Transactions
    WHERE
        organization = :organization
        AND transactionTime > CURRENT_TIMESTAMP() - INTERVAL 3 MONTH
    GROUP BY
        category,
        month,
        year
    ORDER BY
        category,
        month,
        year DESC

This query will group transactions over the last three months for a given organization into buckets based on the given category and the month of the transaction. Then, it will sum the values for a category by month to find the total amount spent during each month.
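To see what the grouping produces, here is a small worked example of the same bucketing logic in plain JavaScript. It covers only the GROUP BY, SUM, and two-decimal truncation steps (not the organization or time-window filters), uses made-up sample transactions, and assumes category names never contain the `|` character used to build the bucket key.

```javascript
// Group transactions by category, year, and month, and total the amounts.
function totalsByCategoryAndMonth(transactions) {
  const totals = new Map();
  for (const t of transactions) {
    const date = new Date(t.transactionTime);
    const key = `${t.category}|${date.getUTCFullYear()}|${date.getUTCMonth() + 1}`;
    totals.set(key, (totals.get(key) || 0) + t.amount);
  }
  return [...totals].map(([key, amount]) => {
    const [category, year, month] = key.split("|");
    return {
      category,
      month: Number(month),
      year: Number(year),
      // Mirrors TRUNCATE(sum(amount), 2): drop digits past two decimals.
      amount: Math.trunc(amount * 100) / 100,
    };
  });
}

const sample = [
  { category: "Food", transactionTime: "2021-03-05T12:00:00Z", amount: 10.25 },
  { category: "Food", transactionTime: "2021-03-20T18:30:00Z", amount: 5.5 },
  { category: "Travel", transactionTime: "2021-03-11T09:00:00Z", amount: 100 },
  { category: "Food", transactionTime: "2021-04-02T12:00:00Z", amount: 7 },
];
console.log(totalsByCategoryAndMonth(sample));
// Food/March totals 15.75, Travel/March totals 100, Food/April totals 7
```

Each output row corresponds to one (category, month, year) bucket, which is the shape the application receives back from the aggregation query.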

Notice that it includes a parameter for the "organization" attribute, as indicated by the ":organization" syntax in the query. This indicates that an organization value must be passed in to execute the query.

Save the query as a Query Lambda in the Rockset console. Then, look at the fetchTransactionsByCategoryAndMonth code in our TransactionService class. It calls the Query Lambda by name and passes in the "organization" property that was given by a user.

This is much simpler code to handle in our application. Further, Rockset provides version control and query-specific monitoring for each Query Lambda. This makes it easier to maintain your queries over time and understand how changes in the query syntax affect performance.

Conclusion

In this post, we saw how to use DynamoDB and Rockset together to build a fast, delightful application experience for our users. In doing so, we learned both the conceptual foundations and the practical steps to implement our application.

First, we used DynamoDB to handle the core functionality of our application. This includes access patterns like retrieving a transaction feed for a particular customer or viewing an individual transaction. Because of DynamoDB's primary-key-based partitioning strategy, it is able to provide consistent performance at any scale.

But DynamoDB's design also limits its flexibility. It can't handle selective queries on arbitrary fields or aggregations across a large number of records.

To handle these patterns, we used Rockset. Rockset provides a fully managed secondary index to power data-heavy applications. We saw how Rockset maintains a continuous ingestion pipeline from your primary data store that indexes your data in a Converged Index, which combines inverted, columnar, and row indexing. As we walked through our patterns, we saw how Rockset's indexing techniques work together to support delightful user experiences. Finally, we went through the practical steps to connect Rockset to our DynamoDB table and interact with Rockset in our application.

Alex DeBrie is an AWS Data Hero and the author of The DynamoDB Book, a comprehensive guide to data modeling with DynamoDB. He works with teams to provide data modeling, architectural, and performance advice on cloud-based architectures on AWS.
