
Aggregator Leaf Tailer (ALT) is the data architecture favored by web-scale companies, like Facebook, LinkedIn, and Google, for its efficiency and scalability. In this blog post, I will describe the Aggregator Leaf Tailer architecture and its advantages for low-latency data processing and analytics.

When we started Rockset, we set out to implement a real-time analytics engine that made the developer's job as simple as possible. That meant a system that was nimble and powerful enough to execute fast SQL queries on raw data, essentially performing any needed transformations as part of the query step, and not as part of a complex data pipeline. It also meant a system that took full advantage of cloud efficiencies (responsive resource scheduling and disaggregation of compute and storage) while abstracting away all infrastructure-related details from users. We chose ALT for Rockset.

Traditional Data Processing: Batch and Streaming

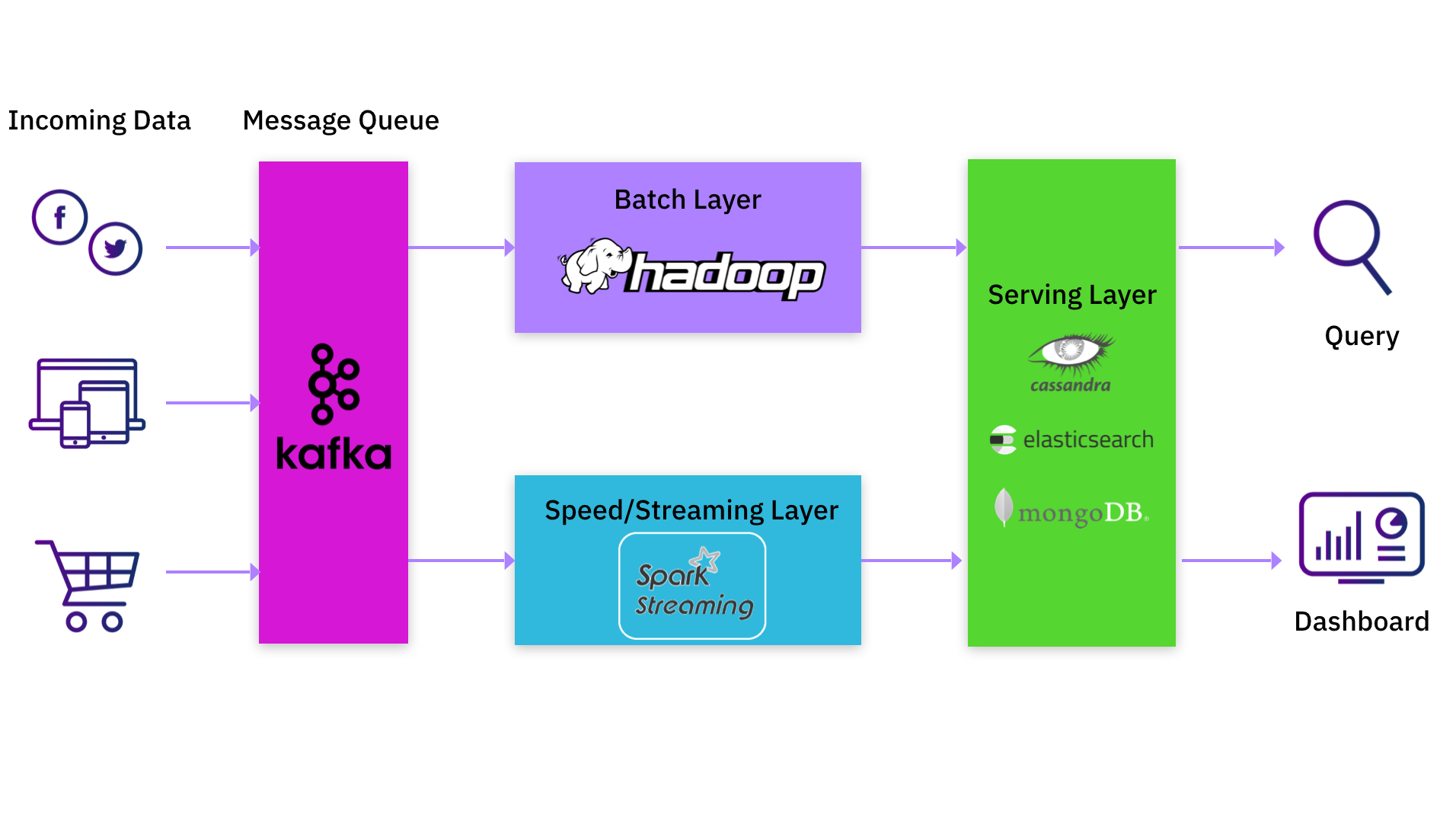

MapReduce, most commonly associated with Apache Hadoop, is a pure batch system that often introduces significant time lag in turning new data into processed results. To mitigate the delays inherent in MapReduce, the Lambda architecture was conceived to supplement batch results from a MapReduce system with a real-time stream of updates. A serving layer unifies the outputs of the batch and streaming layers, and responds to queries.
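As a rough illustration of how a Lambda serving layer combines the two paths, here is a minimal sketch assuming a page-view count use case. The function names `batch_view`, `realtime_view`, and `serve` are hypothetical, and in-memory counters stand in for the batch output and the streaming output:

```python
from collections import Counter


def batch_view(historical_events):
    """Batch layer (hypothetical): full recomputation over all historical events."""
    return Counter(e["page"] for e in historical_events)


def realtime_view(recent_events):
    """Speed layer (hypothetical): counts for events that arrived after the last batch run."""
    return Counter(e["page"] for e in recent_events)


def serve(page, batch, realtime):
    """Serving layer: answer a query by merging the batch and real-time views."""
    return batch.get(page, 0) + realtime.get(page, 0)


history = [{"page": "home"}, {"page": "home"}, {"page": "about"}]
recent = [{"page": "home"}, {"page": "pricing"}]
print(serve("home", batch_view(history), realtime_view(recent)))  # 3
```

Note that the counting logic lives in two places; keeping them equivalent is exactly the maintenance burden discussed below.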

The real-time stream is typically a set of pipelines that process new data as and when it is deposited into the system. These pipelines implement windowing queries on new data and then update the serving layer. This architecture has become popular in the last decade because it addresses the stale-output problem of MapReduce systems.
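A windowing query groups new events by time interval before results are pushed to the serving layer. The sketch below assumes tumbling (fixed, non-overlapping) windows and `(timestamp_seconds, key)` event tuples; both are illustrative choices, since the text does not specify a window type, and `tumbling_window_counts` is a hypothetical name:

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count (timestamp, key) events per key within fixed, non-overlapping time windows."""
    windows = defaultdict(lambda: defaultdict(int))
    for ts, key in events:
        window_start = ts - (ts % window_seconds)  # align each event to its window's start
        windows[window_start][key] += 1
    # Return plain dicts ordered by window start, ready to write to a serving layer.
    return {start: dict(counts) for start, counts in sorted(windows.items())}


print(tumbling_window_counts([(0, "a"), (30, "a"), (65, "b"), (70, "a")], 60))
```

Each window's result would then be written to the serving layer, which is the step that makes stream output queryable.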

Common Lambda Architectures: Kafka, Spark, and MongoDB/Elasticsearch

If you are a data practitioner, you have probably either implemented or used a data processing platform that incorporates the Lambda architecture. A common implementation would have large batch jobs in Hadoop complemented by an update stream stored in Apache Kafka. Apache Spark is often used to read this data stream from Kafka, perform transformations, and then write the result to another Kafka log. In most cases, this would not be a single Spark job but a pipeline of Spark jobs. Each Spark job in the pipeline would read data produced by the previous job, do its own transformations, and feed the result to the next job in the pipeline. The final output would be written to a serving system like Apache Cassandra, Elasticsearch or MongoDB.
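The shape of such a chained pipeline can be sketched without Kafka or Spark. In this minimal sketch, plain Python generators stand in for the individual Spark jobs and a dict stands in for the serving system; the stage names, fields, and lookup table are all hypothetical:

```python
import json


def parse_job(raw_records):
    """Stage 1 (stands in for the first Spark job): decode raw JSON strings."""
    for raw in raw_records:
        yield json.loads(raw)


def enrich_job(records, country_by_ip):
    """Stage 2: add a field derived from a lookup table."""
    for r in records:
        yield {**r, "country": country_by_ip.get(r["ip"], "unknown")}


def filter_job(records):
    """Stage 3: keep only purchase events."""
    for r in records:
        if r["type"] == "purchase":
            yield r


def run_pipeline(raw_records, country_by_ip, serving_store):
    """Each stage consumes the previous stage's output; the last one writes to serving."""
    for r in filter_job(enrich_job(parse_job(raw_records), country_by_ip)):
        serving_store[r["id"]] = r
    return serving_store
```

Because the serving store only holds the final, already-transformed records, answering a new question (for example, counting views rather than purchases) means changing a stage and reprocessing the stream.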

Shortcomings of Lambda Architectures

Being a data practitioner myself, I recognize the value the Lambda architecture offers by allowing data processing in real time. But from my perspective it is not an ideal architecture, because of several shortcomings:

- Maintaining two different processing paths, one via the batch system and another via the real-time streaming system, is inherently difficult. If you ship new code functionality to the streaming software but fail to make the equivalent change to the batch software, you could get erroneous results.

- If you are an application developer or data scientist who wants to make changes to your streaming or batch pipeline, you have to either learn how to operate and modify the pipeline, or wait for someone else to make the changes on your behalf. The former option requires you to pick up data engineering tasks and detracts from your primary role, while the latter forces you into a holding pattern, waiting on the pipeline team for resolution.

- Most of the data transformation happens at write time, as new data enters the system, while the serving layer is a simpler key-value lookup that does not handle complex transformations. This complicates the job of the application developer because she/he cannot easily apply new transformations retroactively to pre-existing data.

The biggest advantage of the Lambda architecture is that data processing occurs when new data arrives in the system, but ironically this is its biggest weakness as well. Most processing in the Lambda architecture happens in the pipeline and not at query time. Because most of the complex business logic is tied to the pipeline software, the application developer is unable to make quick changes to the application and has limited flexibility in the ways she or he can use the data. Having to maintain a pipeline simply slows you down.

ALT: Real-Time Analytics Without Pipelines

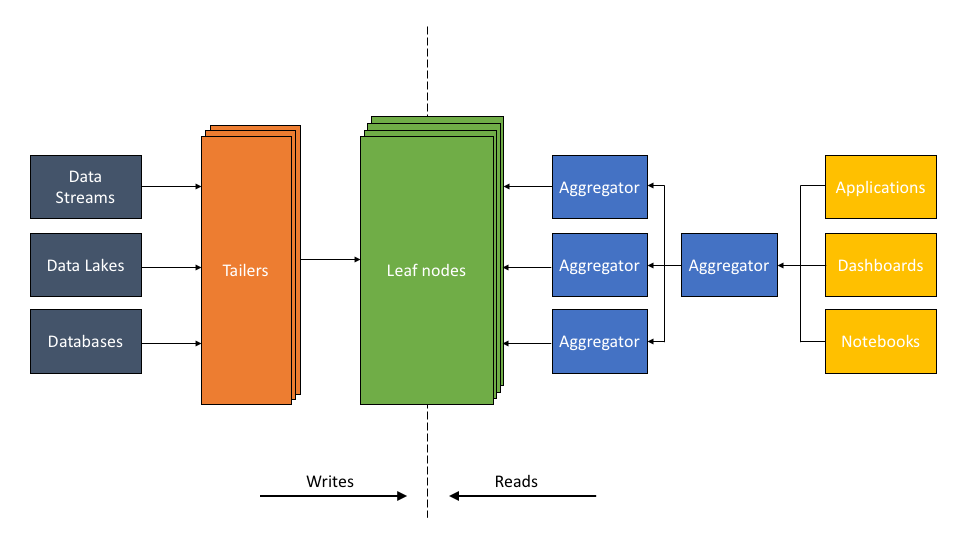

The ALT architecture addresses these shortcomings of Lambda architectures. The key component of ALT is a high-performance serving layer that serves complex queries, not just key-value lookups. The existence of this serving layer obviates the need for complex data pipelines.

The ALT architecture consists of three tiers:

- The Tailer pulls new incoming data from a static or streaming source into an indexing engine. Its job is to fetch from all data sources, be it a data lake, like S3, or a dynamic source, like Kafka or Kinesis.

- The Leaf is a powerful indexing engine. It indexes all data as and when it arrives via the Tailer. The indexing component builds multiple types of indexes (inverted, columnar, document, geo, and many others) on the fields of a data set. The goal of indexing is to make any query on any data field fast.

- The scalable Aggregator tier is designed to deliver low-latency aggregations, be it columnar aggregations, joins, relevance sorting, or grouping. The Aggregators leverage indexing so efficiently that complex logic typically executed by pipeline software in other architectures can be executed on the fly as part of the query.
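To make the division of labor concrete, here is a toy, single-process sketch of the three tiers. Everything in it is hypothetical and greatly simplified: `Leaf` keeps only a document store, an inverted index, and a dict-per-field stand-in for a columnar index; `tail` shards documents by hashing their ids; and `aggregate_sum` does a scatter-gather over all leaves. It is not Rockset's implementation, only an illustration of query-time filtering and aggregation over raw documents:

```python
from collections import defaultdict


class Leaf:
    """Hypothetical leaf: stores one shard and indexes every field of every document."""

    def __init__(self):
        self.docs = {}                                         # document store: id -> document
        self.inverted = defaultdict(lambda: defaultdict(set))  # field -> value -> set of doc ids
        self.columns = defaultdict(dict)                       # field -> {doc id: value}, column-style

    def index(self, doc_id, doc):
        self.docs[doc_id] = doc
        for field, value in doc.items():
            self.inverted[field][value].add(doc_id)
            self.columns[field][doc_id] = value

    def partial_sum(self, filter_field, filter_value, agg_field):
        # The inverted index finds matching documents without a scan;
        # the column supplies only the field being aggregated.
        matches = self.inverted[filter_field].get(filter_value, set())
        column = self.columns[agg_field]
        return sum(column[i] for i in matches if i in column)


def tail(source, leaves):
    """Hypothetical tailer: route each (doc_id, doc) from a source to a leaf by hashing its id."""
    for doc_id, doc in source:
        leaves[hash(doc_id) % len(leaves)].index(doc_id, doc)


def aggregate_sum(leaves, filter_field, filter_value, agg_field):
    """Hypothetical aggregator: scatter the query to every leaf, then combine partial sums."""
    return sum(leaf.partial_sum(filter_field, filter_value, agg_field) for leaf in leaves)


# Usage: the filter and sum are applied at query time to raw, untransformed documents.
leaves = [Leaf(), Leaf()]
tail([(1, {"city": "SF", "amount": 10}),
      (2, {"city": "NY", "amount": 5}),
      (3, {"city": "SF", "amount": 7})], leaves)
print(aggregate_sum(leaves, "city", "SF", "amount"))  # 17
```

Splitting the work this way means each tier has a narrow job: tailers only move data, leaves only index and answer shard-local lookups, and aggregators only combine partial results.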

Advantages of ALT

The ALT architecture enables the app developer or data scientist to run low-latency queries on raw data sets without any prior transformation. A large portion of the data transformation process can occur as part of the query itself. How is this possible in the ALT architecture?

- Indexing is critical to making queries fast. The Leaves maintain a variety of indexes concurrently, so that data can be accessed quickly regardless of the type of query: aggregation, key-value, time series, or search. Every document and field is indexed, including both the value and the type of each field. The resulting query performance allows significantly more complex data processing to be moved into queries.

- Queries are distributed across a scalable Aggregator tier. Because the number of Aggregators, which provide compute and memory resources, can be scaled, compute power can be concentrated on any complex processing executed on the fly.

- The Tailer, Leaf, and Aggregator run as discrete microservices in disaggregated fashion. Each Tailer, Leaf, or Aggregator tier can be independently scaled up and down as needed. The system scales Tailers when there is more data to ingest, scales Leaves when data size grows, and scales Aggregators when the number or complexity of queries increases. This independent scalability allows the system to bring significant resources to bear on complex queries when needed, while keeping costs under control.
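Independent scaling means each tier is sized from its own load signal. The sketch below assumes one signal per tier (ingest rate for Tailers, data size for Leaves, query rate for Aggregators) and uses made-up per-instance capacities; `Load`, `Capacity`, and `plan_tiers` are hypothetical names. It also ignores query complexity, which a real system would need to account for when sizing Aggregators:

```python
import math
from dataclasses import dataclass


@dataclass
class Load:
    ingest_events_per_sec: float
    data_size_gb: float
    queries_per_sec: float


@dataclass
class Capacity:
    """Hypothetical per-instance capacities; real values depend on hardware and workload."""
    events_per_tailer: float = 50_000
    gb_per_leaf: float = 500
    qps_per_aggregator: float = 200


def plan_tiers(load: Load, cap: Capacity = Capacity()) -> dict:
    """Size each tier from its own signal only, so one tier can grow without the others."""
    return {
        "tailers": max(1, math.ceil(load.ingest_events_per_sec / cap.events_per_tailer)),
        "leaves": max(1, math.ceil(load.data_size_gb / cap.gb_per_leaf)),
        "aggregators": max(1, math.ceil(load.queries_per_sec / cap.qps_per_aggregator)),
    }


print(plan_tiers(Load(120_000, 1_200, 50)))
print(plan_tiers(Load(120_000, 1_200, 1_000)))  # query spike: only aggregators grow
```

In a coupled design, the query spike in the second call would force every node to grow; here only the Aggregator count changes.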

The most significant difference is that the Lambda architecture performs data transformations up front so that results are pre-materialized, while the ALT architecture allows for query on demand with on-the-fly transformations.
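The contrast can be shown with two toy stores. In this sketch (class names and event fields are hypothetical), `PreMaterialized` fixes one transformation at write time, while `OnDemand` keeps raw events and applies whatever grouping and filtering the query asks for. A real ALT system would use indexes rather than the linear scan shown here:

```python
from collections import Counter


class PreMaterialized:
    """Lambda-style sketch: the transformation (count per country) is fixed at write time."""

    def __init__(self):
        self.counts_by_country = Counter()

    def write(self, event):
        self.counts_by_country[event["country"]] += 1

    def query(self, country):
        return self.counts_by_country[country]  # simple key-value lookup


class OnDemand:
    """ALT-style sketch: raw events are kept; the transformation is part of the query."""

    def __init__(self):
        self.raw = []  # a real system would index these rather than scan a list

    def write(self, event):
        self.raw.append(event)

    def query(self, group_by, where=lambda e: True):
        return Counter(e[group_by] for e in self.raw if where(e))


events = [
    {"country": "US", "device": "ios", "type": "purchase"},
    {"country": "US", "device": "web", "type": "view"},
    {"country": "DE", "device": "ios", "type": "purchase"},
]
pm, od = PreMaterialized(), OnDemand()
for e in events:
    pm.write(e)
    od.write(e)

# A new question over all existing data needs no new pipeline or backfill:
print(od.query("device", where=lambda e: e["type"] == "purchase"))
```

`PreMaterialized` can only answer "count per country"; answering the per-device question would require a new write path plus reprocessing of historical events.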

Why ALT Makes Sense Today

While not as widely known as the Lambda architecture, the ALT architecture has been in existence for almost a decade, employed mostly in high-volume systems.

- Facebook's Multifeed architecture has been using the ALT method since 2010, backed by the open-source RocksDB engine, which allows large data sets to be indexed efficiently.

- LinkedIn's FollowFeed was redesigned in 2016 to use the ALT architecture. Its previous architecture, like the Lambda architecture discussed above, used a pre-materialization approach, also called fan-out-on-write, where results were precomputed and made available for simple lookup queries. LinkedIn's new ALT architecture uses a query-on-demand, or fan-out-on-read, model with RocksDB indexing instead of Lucene indexing. Much of the computation is done on the fly, giving developers greater speed and flexibility.

- Rockset uses RocksDB as a foundational data store and implements the ALT architecture (see white paper) in a cloud service.
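The fan-out-on-write versus fan-out-on-read distinction mentioned for LinkedIn's FollowFeed can be sketched with a toy news feed. This is an illustrative sketch only, not LinkedIn's design: both class names are hypothetical, posts are `(timestamp, author, text)` tuples, and "ranking" is simply newest first:

```python
import heapq
from collections import defaultdict


class FanOutOnWrite:
    """Pre-materialized: each post is copied into every follower's feed at write time."""

    def __init__(self, followers_of):
        self.followers_of = followers_of  # author -> set of followers
        self.feeds = defaultdict(list)    # user -> [(ts, author, text)]

    def post(self, author, ts, text):
        for follower in self.followers_of.get(author, ()):
            self.feeds[follower].append((ts, author, text))

    def feed(self, user, limit):
        return sorted(self.feeds[user], reverse=True)[:limit]  # lookup of precomputed rows


class FanOutOnRead:
    """Query on demand: posts are stored once per author and merged at read time."""

    def __init__(self, following):
        self.following = following       # user -> set of authors they follow
        self.posts = defaultdict(list)   # author -> [(ts, author, text)]

    def post(self, author, ts, text):
        self.posts[author].append((ts, author, text))

    def feed(self, user, limit):
        candidates = (p for a in self.following.get(user, ()) for p in self.posts[a])
        return heapq.nlargest(limit, candidates)  # ranking logic runs per query


w = FanOutOnWrite({"bob": {"alice"}, "carol": {"alice"}})
r = FanOutOnRead({"alice": {"bob", "carol"}})
for store in (w, r):
    store.post("bob", 1, "hi")
    store.post("carol", 2, "hello")
    store.post("bob", 3, "again")
print(r.feed("alice", 2))
```

Both produce the same feed here, but only the fan-out-on-read version lets you change ranking or filtering logic without rewriting every stored feed.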

The ALT architecture clearly has the performance, scale, and efficiency to handle real-time use cases at some of the largest online companies. Why has it not been used more widely until recently? The short answer is that "indexing" software has traditionally been costly, and not commercially viable, when data size is large. That ruled out many smaller organizations from pursuing an ALT, query-on-demand approach in the past. But the current state of technology, the combination of powerful indexing software built on open-source RocksDB and favorable cloud economics, has made ALT not only commercially feasible today, but an elegant architecture for real-time data processing and analytics.

Learn more about Rockset's architecture in this 30-minute whiteboard video session by Rockset CTO and Co-founder Dhruba Borthakur.

Embedded content: https://youtu.be/msW8nh5TTwQ

Rockset is the leading real-time analytics platform built for the cloud, delivering fast analytics on real-time data with surprising efficiency. Learn more at rockset.com.
