
Many development teams turn to DynamoDB for building event-driven architectures and user-friendly, performant applications at scale. As an operational database, DynamoDB is optimized for real-time transactions even when deployed across multiple geographic locations. However, it does not provide strong performance for analytics workloads.

Analytics on DynamoDB

While NoSQL databases like DynamoDB generally have excellent scaling characteristics, they support only a limited set of operations that are focused on online transaction processing. This makes it difficult to develop analytics directly on them.

DynamoDB stores data under the hood by partitioning it over a large number of nodes based on a user-specified partition key field present in each item. This user-specified partition key can optionally be combined with a sort key to form a primary key. The primary key acts as an index, making query operations inexpensive. A query operation can perform equality comparisons (=) on the partition key and comparative operations (>, <, =, BETWEEN) on the sort key, if one is specified.
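To make the query model concrete, here is a minimal sketch of the request a Query operation takes, assuming a hypothetical `Orders` table with `customer_id` as the partition key and `order_date` as the sort key. It only builds the keyword arguments, using the parameter names of the low-level boto3 `query` call, so it runs without AWS access.

```python
def build_order_query(customer_id: str, start_date: str, end_date: str) -> dict:
    """Keyword arguments for a DynamoDB Query on a hypothetical 'Orders' table
    keyed by customer_id (partition key) and order_date (sort key)."""
    return {
        "TableName": "Orders",
        # Equality on the partition key, range condition on the sort key.
        "KeyConditionExpression": "#pk = :pk AND #sk BETWEEN :start AND :end",
        "ExpressionAttributeNames": {"#pk": "customer_id", "#sk": "order_date"},
        # Low-level client format: every value is tagged with its DynamoDB type.
        "ExpressionAttributeValues": {
            ":pk": {"S": customer_id},
            ":start": {"S": start_date},
            ":end": {"S": end_date},
        },
    }


if __name__ == "__main__":
    params = build_order_query("c-42", "2023-01-01", "2023-03-31")
    print(params["KeyConditionExpression"])
    # With AWS credentials configured, these could be passed to
    # boto3.client("dynamodb").query(**params).
```

Any predicate that cannot be expressed as a key condition like this one (for example, filtering on an attribute that is neither the partition key nor the sort key) falls outside what Query can serve from the index.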

Performing analytical queries not covered by the above scheme requires a scan operation, which is typically executed by scanning over the entire DynamoDB table in parallel. These scans can be slow and expensive in terms of read throughput because they require a full read of the entire table. Scans also tend to slow down as the table grows, since there is more data to scan to produce results. If we want to support analytical queries without incurring prohibitive scan costs, we can leverage secondary indexes, which we'll discuss next.
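A rough back-of-the-envelope model shows why scan cost grows with table size. The sketch below assumes DynamoDB's published read pricing unit (1 read capacity unit per 4 KB for a strongly consistent read, half that for an eventually consistent read) and ignores per-page rounding, so real consumption is slightly higher. It also builds the `Segment`/`TotalSegments` parameters used for a parallel scan, on a hypothetical `Orders` table.

```python
import math

GIB = 1024 ** 3


def estimate_full_scan_rcus(table_size_bytes: int, strongly_consistent: bool = False) -> float:
    """Approximate read capacity units consumed by reading every byte once.

    Assumes 1 RCU per 4 KB (strongly consistent) or 0.5 RCU per 4 KB
    (eventually consistent); per-page rounding is ignored."""
    chunks_of_4kb = math.ceil(table_size_bytes / 4096)
    return chunks_of_4kb * (1.0 if strongly_consistent else 0.5)


def parallel_scan_requests(table_name: str, total_segments: int) -> list:
    """One Scan request per worker. Parallelism shortens wall-clock time, but the
    segments together still read the whole table, so total RCUs are unchanged."""
    return [
        {"TableName": table_name, "Segment": segment, "TotalSegments": total_segments}
        for segment in range(total_segments)
    ]


for size_gib in (1, 10, 100):
    print(f"{size_gib} GiB -> {estimate_full_scan_rcus(size_gib * GIB):,.0f} RCUs")
```

The estimate scales linearly with table size, which is the core problem: every analytical query answered by a scan pays for the whole table, no matter how few items match.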

Indexing in DynamoDB

In DynamoDB, secondary indexes are often used to improve application performance by indexing fields that are queried frequently. Query operations on secondary indexes can also power specific features through analytical queries that have clearly defined requirements.

Secondary indexes consist of partition keys and optional sort keys created over the fields that we want to query. There are two types of secondary indexes:

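- Local secondary indexes (LSIs): LSIs share the base table's partition key but use an alternate sort key, extending the hash and range key attributes within a single partition.

- Global secondary indexes (GSIs): GSIs are indexes that apply to an entire table instead of a single partition, and they can use a different partition key and sort key from the base table.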
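As a sketch of what a GSI looks like in practice, the helper below builds one entry of the `GlobalSecondaryIndexes` list accepted by a CreateTable request, and a second helper targets a Query at that index through `IndexName`. The table, index, and attribute names are hypothetical, and a real CreateTable call would also need `AttributeDefinitions` for every key attribute.

```python
def gsi_definition(index_name, partition_key, sort_key=None, read_units=5, write_units=5):
    """One entry for the GlobalSecondaryIndexes list of a CreateTable request."""
    key_schema = [{"AttributeName": partition_key, "KeyType": "HASH"}]
    if sort_key is not None:
        key_schema.append({"AttributeName": sort_key, "KeyType": "RANGE"})
    return {
        "IndexName": index_name,
        "KeySchema": key_schema,
        "Projection": {"ProjectionType": "ALL"},  # copy every attribute into the index
        "ProvisionedThroughput": {
            "ReadCapacityUnits": read_units,
            "WriteCapacityUnits": write_units,
        },
    }


def query_gsi(table_name, index_name, attribute, value):
    """Query arguments that read from the GSI instead of the base table."""
    return {
        "TableName": table_name,
        "IndexName": index_name,
        "KeyConditionExpression": "#attr = :val",
        # A placeholder avoids clashes with reserved words such as "status".
        "ExpressionAttributeNames": {"#attr": attribute},
        "ExpressionAttributeValues": {":val": {"S": value}},
    }


status_index = gsi_definition("status-order_date-index", "status", "order_date")
print(status_index["KeySchema"])
print(query_gsi("Orders", "status-order_date-index", "status", "SHIPPED"))
```

Each new access pattern (here, "orders by status") needs its own index definition like this one, which is where the cost discussion that follows comes from.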

However, as Nike discovered, overusing GSIs in DynamoDB can be expensive. Unless analytics in DynamoDB are limited to very simple point lookups or small range scans, they can result in overuse of secondary indexes and high costs.

The costs for provisioned capacity when using indexes can add up quickly because all updates to the base table must be made in the corresponding GSIs as well. In fact, AWS advises that the provisioned write capacity for a global secondary index should be equal to or greater than the write capacity of the base table, to avoid throttling writes to the base table and crippling the application. The cost of provisioned write capacity therefore grows linearly with the number of GSIs configured, making it cost prohibitive to use many GSIs to support many access patterns.
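The linear growth is simple arithmetic. Assuming each GSI is provisioned at exactly the base table's write capacity, the minimum AWS recommends, the total provisioned write capacity is the base table's WCU multiplied by one plus the number of GSIs:

```python
def total_provisioned_wcu(base_table_wcu: int, num_gsis: int) -> int:
    """Write capacity for a table plus its GSIs, assuming each GSI is
    provisioned at the same WCU as the base table."""
    return base_table_wcu * (1 + num_gsis)


for gsis in range(6):
    print(gsis, "GSIs ->", total_provisioned_wcu(1_000, gsis), "WCUs")
```

With a hypothetical base table provisioned at 1,000 WCUs, five GSIs bring the total to 6,000 WCUs, six times the write capacity the application itself needs.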

DynamoDB is also not well designed to index data in nested structures, including arrays and objects. Before indexing the data, users need to denormalize it by flattening the nested objects and arrays. This can greatly increase the number of writes and the associated costs.
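To illustrate the write amplification, the sketch below flattens one nested order into one item per line item, promoting a nested address field to a top-level attribute so it could serve as an index key. The order schema is hypothetical; the point is that a single logical write becomes one write per array element.

```python
def explode_line_items(order: dict) -> list:
    """Turn one nested order into one flat item per line item (hypothetical schema)."""
    # Nested object flattened into a top-level attribute that an index could use.
    city = order["customer"]["address"]["city"]
    return [
        {
            "order_id": order["order_id"],
            "line_no": line_no,
            "customer_city": city,
            "sku": line["sku"],
            "qty": line["qty"],
        }
        for line_no, line in enumerate(order["items"])
    ]


order = {
    "order_id": "o-1",
    "customer": {"address": {"city": "Seattle"}},
    "items": [{"sku": "A", "qty": 1}, {"sku": "B", "qty": 2}, {"sku": "C", "qty": 5}],
}
flat_items = explode_line_items(order)
print(len(flat_items), "writes instead of 1")
```

Every update to the order now has to touch all of the derived items, and any GSI over them multiplies those writes again.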

For a more detailed examination of using DynamoDB secondary indexes for analytics, see our blog post Secondary Indexes For Analytics On DynamoDB.

The bottom line is that for analytical use cases, you can gain significant performance and cost advantages by syncing the DynamoDB table with a different tool or service that acts as an external secondary index for running complex analytics efficiently.

DynamoDB + Elasticsearch

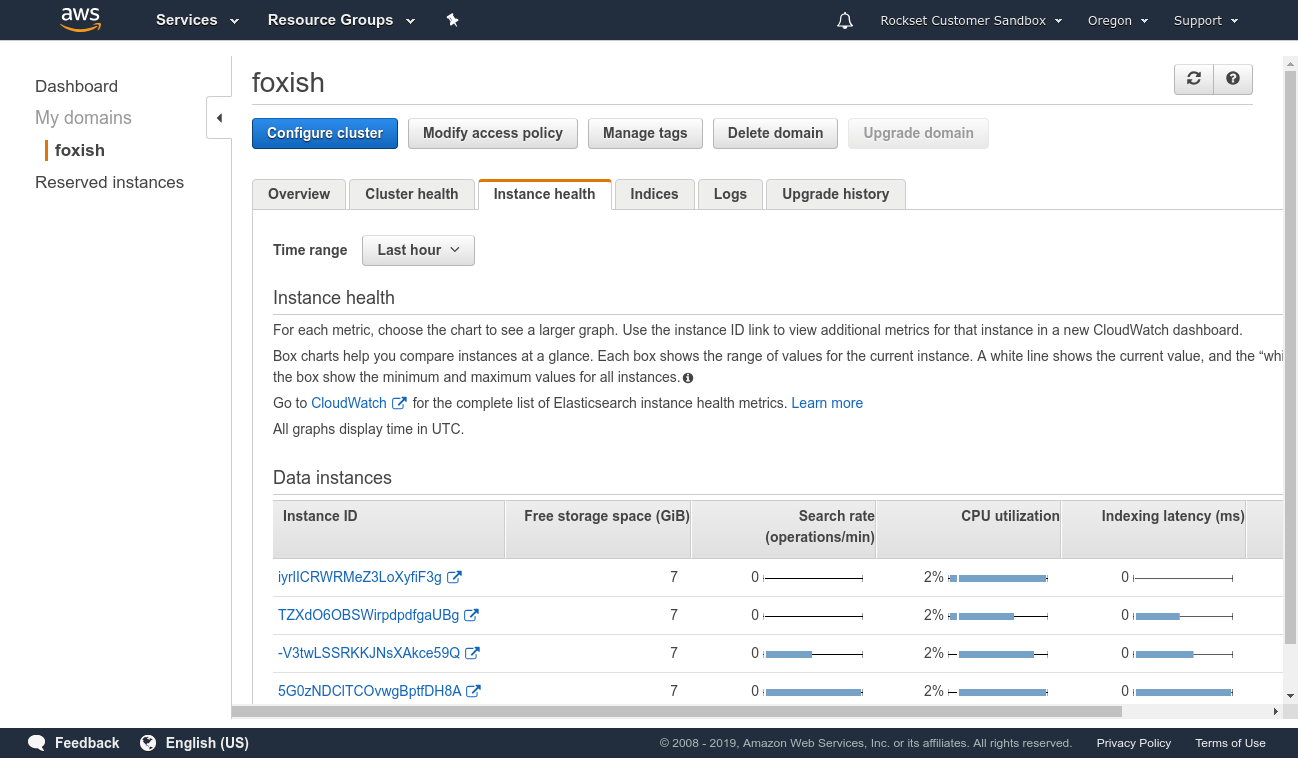

One approach to building a secondary index over our data is to use DynamoDB with Elasticsearch. Cloud-based Elasticsearch, such as Elastic Cloud or Amazon OpenSearch Service, can be used to provision and configure nodes according to the size of the indexes, replication, and other requirements. A managed cluster still requires some operational work to upgrade, secure, and keep performant, but less than running it entirely by yourself on EC2 instances.

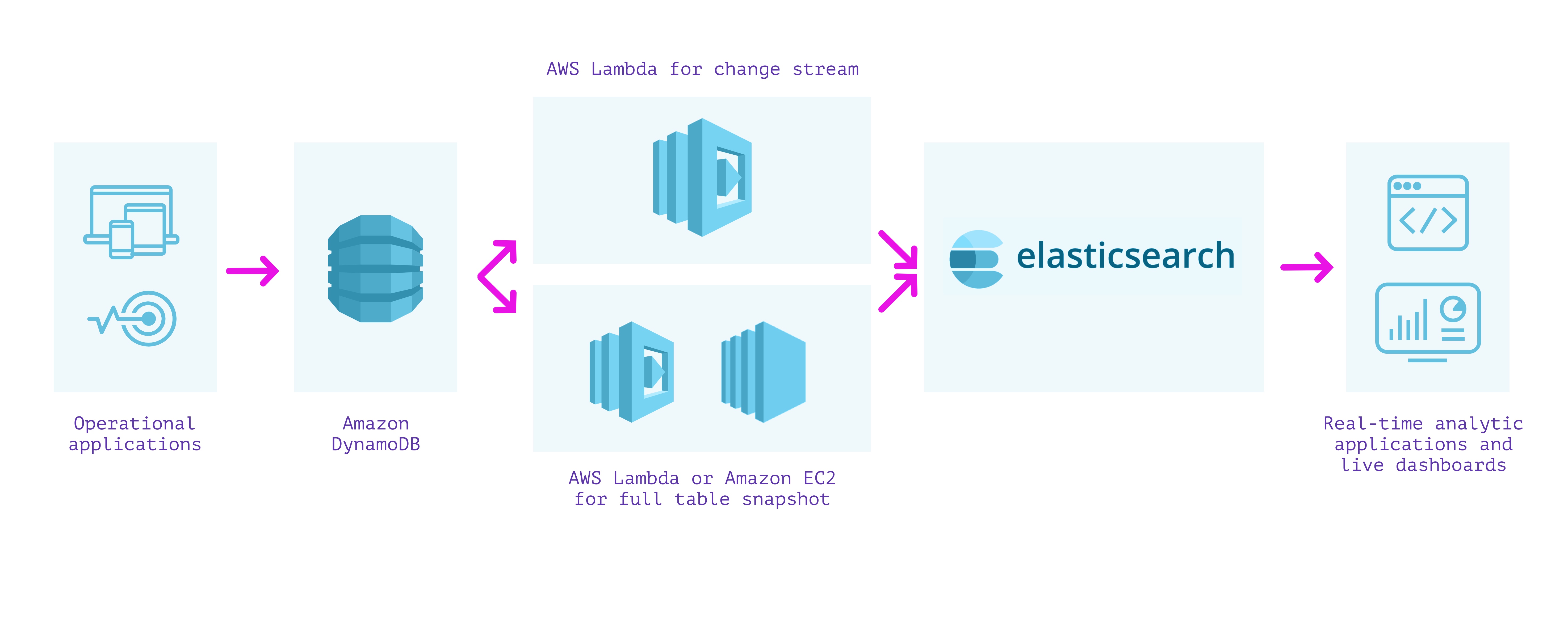

Because the approach using the Logstash Plugin for Amazon DynamoDB is unsupported and rather difficult to set up, we can instead stream writes from DynamoDB into Elasticsearch using DynamoDB Streams and an AWS Lambda function. This approach requires two separate steps:

- We first create a Lambda function that is invoked on the DynamoDB stream to post each update into Elasticsearch as it occurs in DynamoDB.

- We then create a Lambda function (or an EC2 instance running a script, if the job will take longer than the Lambda execution timeout) to post all the existing contents of DynamoDB into Elasticsearch.
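As a minimal sketch of the first step, assuming the stream is configured with the `NEW_IMAGE` or `NEW_AND_OLD_IMAGES` view type, the handler below converts each stream record into Elasticsearch bulk API actions: `INSERT` and `MODIFY` records become `index` actions carrying the full new image, and `REMOVE` records become `delete` actions. The index name `orders` and the `|`-joined document ID are hypothetical choices, only the common DynamoDB attribute types are converted, and the signed HTTP request that would post the body to the cluster's `_bulk` endpoint is deliberately left out.

```python
import json

INDEX_NAME = "orders"  # hypothetical Elasticsearch index name


def from_dynamodb(value: dict):
    """Convert one DynamoDB-typed attribute value (e.g. {"S": "x"}) to plain JSON."""
    [(type_tag, raw)] = value.items()
    if type_tag in ("S", "BOOL"):
        return raw
    if type_tag == "N":
        # Numbers arrive as strings; keep whole numbers as int.
        return int(raw) if raw.lstrip("-").isdigit() else float(raw)
    if type_tag == "NULL":
        return None
    if type_tag == "M":
        return {k: from_dynamodb(v) for k, v in raw.items()}
    if type_tag == "L":
        return [from_dynamodb(v) for v in raw]
    if type_tag == "SS":
        return list(raw)
    if type_tag == "NS":
        return [from_dynamodb({"N": n}) for n in raw]
    raise ValueError(f"unsupported DynamoDB type: {type_tag}")


def stream_records_to_bulk(event: dict) -> str:
    """Build a newline-delimited JSON body for the Elasticsearch bulk API."""
    lines = []
    for record in event.get("Records", []):
        ddb = record["dynamodb"]
        keys = ddb["Keys"]
        # Use the item's primary key as the document ID.
        doc_id = "|".join(str(from_dynamodb(keys[name])) for name in sorted(keys))
        if record["eventName"] == "REMOVE":
            lines.append(json.dumps({"delete": {"_index": INDEX_NAME, "_id": doc_id}}))
        else:  # INSERT or MODIFY: (re)index the full new image
            doc = {k: from_dynamodb(v) for k, v in ddb["NewImage"].items()}
            lines.append(json.dumps({"index": {"_index": INDEX_NAME, "_id": doc_id}}))
            lines.append(json.dumps(doc))
    # The bulk API expects the body to end with a newline.
    return "\n".join(lines) + "\n" if lines else ""


def handler(event, context):
    body = stream_records_to_bulk(event)
    # Posting `body` to the cluster's _bulk endpoint is omitted in this sketch.
    return {"bulk_body_bytes": len(body)}
```

Using the item's primary key as the document `_id` makes retries idempotent: replaying the same stream record overwrites the same document instead of creating a duplicate.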

We must write and wire up both of these Lambda functions with the correct permissions to ensure that we don't miss any writes to our tables. Once they are set up along with the required monitoring, Elasticsearch receives documents from DynamoDB, and we can use it to run analytical queries on the data.
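The backfill step can be sketched as a loop over paginated Scan calls. Each page returns at most 1 MB of items plus a `LastEvaluatedKey`, which is passed back as `ExclusiveStartKey` until no key is returned. Here `scan_page` is assumed to behave like the low-level boto3 `client.scan`, and `index_docs` is a hypothetical callback that writes a batch of items to Elasticsearch. Enabling the stream and its Lambda function before starting the backfill is what keeps writes made during the copy from being missed.

```python
from typing import Callable


def backfill(scan_page: Callable[..., dict], table_name: str,
             index_docs: Callable[[list], None]) -> int:
    """Copy every existing item, page by page, into the search index.
    Returns the number of items copied."""
    request = {"TableName": table_name}
    copied = 0
    while True:
        page = scan_page(**request)
        items = page.get("Items", [])
        if items:
            index_docs(items)  # hypothetical: bulk-index this page
            copied += len(items)
        last_key = page.get("LastEvaluatedKey")
        if not last_key:  # no more pages
            return copied
        request["ExclusiveStartKey"] = last_key  # resume after the last item read
```

With boto3 installed, `backfill(boto3.client("dynamodb").scan, "Orders", send_batch)` would run it against a real table, where `send_batch` is again a hypothetical function you would supply. For large tables, the same loop can be run once per parallel scan segment.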

The advantage of this approach is that Elasticsearch supports full-text indexing and several types of analytical queries. Elasticsearch has clients in various languages, as well as tools like Kibana for visualization that can help quickly build dashboards. When a cluster is configured correctly, query latencies can be tuned for fast analytical queries over the data flowing into Elasticsearch.
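For a sense of what such a query looks like, here is a sketch of an Elasticsearch search body, expressed as a Python dict, that combines a full-text `match` with a date `range` filter and two aggregations. The field names (`notes`, `order_date`, `status`, `total`) are hypothetical, and the `terms` aggregation assumes `status` is mapped as a `keyword` field.

```python
import json


def weekly_status_breakdown(search_text: str) -> dict:
    """Search body: full-text match on notes, last 7 days, grouped by status."""
    return {
        "size": 0,  # return only aggregations, no individual hits
        "query": {
            "bool": {
                "must": [{"match": {"notes": search_text}}],  # full-text, scored
                "filter": [{"range": {"order_date": {"gte": "now-7d/d"}}}],  # not scored
            }
        },
        "aggs": {
            "by_status": {"terms": {"field": "status"}},
            "revenue": {"sum": {"field": "total"}},
        },
    }


print(json.dumps(weekly_status_breakdown("late delivery"), indent=2))
```

The printed JSON is what would be sent as the body of a `POST /orders/_search` request, either directly or through one of the Elasticsearch client libraries.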

Disadvantages include the potentially high setup and maintenance cost of the solution. Even managed Elasticsearch requires dealing with replication, resharding, index growth, and performance tuning of the underlying instances.

Elasticsearch has a tightly coupled architecture that does not separate compute and storage. This means resources are often overprovisioned because they cannot be scaled independently. In addition, multiple workloads, such as reads and writes, contend for the same compute resources.

Elasticsearch also cannot handle updates efficiently. Updating any field triggers a reindexing of the entire document. Elasticsearch documents are immutable, so any update requires a new document to be indexed and the old version to be marked as deleted. This results in additional compute and I/O spent reindexing even the unchanged fields and writing entire documents on every update.
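The cost asymmetry can be shown with a toy model (not Elasticsearch code): a partial update is merged into the stored source, and if anything changed, the whole merged document is written as a new version. The model only handles top-level fields and mirrors, in simplified form, the no-op detection Elasticsearch applies by default to partial `doc` updates.

```python
def reindex_cost(stored_source: dict, partial_doc: dict) -> dict:
    """Toy model of a partial update to an immutable search document.

    Top-level fields only: the partial doc is merged into the stored source and,
    if anything changed, every field of the merged document is rewritten."""
    merged = {**stored_source, **partial_doc}
    changed = sorted(k for k, v in partial_doc.items() if stored_source.get(k) != v)
    if not changed:
        # Simplified version of the default no-op detection: nothing is rewritten.
        return {"changed": [], "fields_rewritten": 0, "new_version": stored_source}
    return {"changed": changed, "fields_rewritten": len(merged), "new_version": merged}


# A hypothetical 20-field document where only the status changes.
doc = {f"field_{i}": i for i in range(19)}
doc["status"] = "PENDING"
print(reindex_cost(doc, {"status": "SHIPPED"})["fields_rewritten"])
```

Changing one field out of twenty still rewrites all twenty, which is the overhead the paragraph above describes.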

Because Lambda functions fire when they see an update in the DynamoDB stream, they can have latency spikes due to cold starts. The setup requires metrics and monitoring to ensure that it is correctly processing events from the DynamoDB stream and is able to write into Elasticsearch.
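One piece of that monitoring is handling partial failures. A minimal sketch, assuming the event source mapping has `ReportBatchItemFailures` enabled in its `FunctionResponseTypes`: the handler indexes records in order and, on the first failure, reports that record's sequence number so Lambda retries from that point rather than silently dropping it or reprocessing the whole batch. `index_record_in_search` is a hypothetical placeholder for the call that writes one record to Elasticsearch.

```python
def index_record_in_search(record: dict) -> None:
    """Hypothetical placeholder for writing one stream record to Elasticsearch."""
    raise NotImplementedError("wire this to your Elasticsearch client")


def process_stream_batch(records: list, index_record) -> dict:
    """Index records in order; stop at the first failure and report it."""
    for record in records:
        try:
            index_record(record)
        except Exception as exc:  # any failure should trigger a retry
            seq = record["dynamodb"]["SequenceNumber"]
            print(f"failed to index record {seq}: {exc}")  # stdout goes to CloudWatch Logs
            return {"batchItemFailures": [{"itemIdentifier": seq}]}
    return {"batchItemFailures": []}  # empty list means the whole batch succeeded


def handler(event, context):
    return process_stream_batch(event.get("Records", []), index_record_in_search)
```

Stopping at the first failure preserves per-item ordering: records after the failed one are retried together with it instead of being applied out of order.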

Functionally, in terms of analytical queries, Elasticsearch lacks support for joins, which are useful for complex analytical queries that involve more than one index. Elasticsearch users often have to denormalize data, perform application-side joins, or use nested objects or parent-child relationships to work around this limitation.
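An application-side join usually means running two searches and combining the hits in code. The sketch below is a simple hash join over two hypothetical result sets, one from an `orders` index and one from a `customers` index; a left-join style is used, so orders without a matching customer keep `None`.

```python
def app_side_join(orders: list, customers: list, key: str = "customer_id") -> list:
    """Hash join in application code: index one result set by key, then probe it."""
    customers_by_id = {c[key]: c for c in customers}
    return [{**order, "customer": customers_by_id.get(order[key])} for order in orders]


# Hits from two separate searches (hypothetical data).
orders = [{"order_id": "o-1", "customer_id": "c-1"}, {"order_id": "o-2", "customer_id": "c-9"}]
customers = [{"customer_id": "c-1", "name": "Ada"}]
print(app_side_join(orders, customers))
```

This works for small result sets, but the application now owns pagination, memory limits, and consistency between the two queries, which is the burden a native join would remove.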

Benefits

- Full-text search support

- Support for multiple types of analytical queries

- Can work over the latest data in DynamoDB

Disadvantages

- Requires management and monitoring of infrastructure for ingesting, indexing, replication, and sharding

- Tightly coupled architecture results in resource overprovisioning and compute contention

- Inefficient updates

- Requires a separate system to ensure data integrity and consistency between DynamoDB and Elasticsearch

- No support for joins between different indexes

This approach can work well for implementing full-text search over the data in DynamoDB and dashboards using Kibana. However, the operations required to tune and maintain an Elasticsearch cluster in production, its inefficient use of resources, and its lack of join capabilities can be challenging.

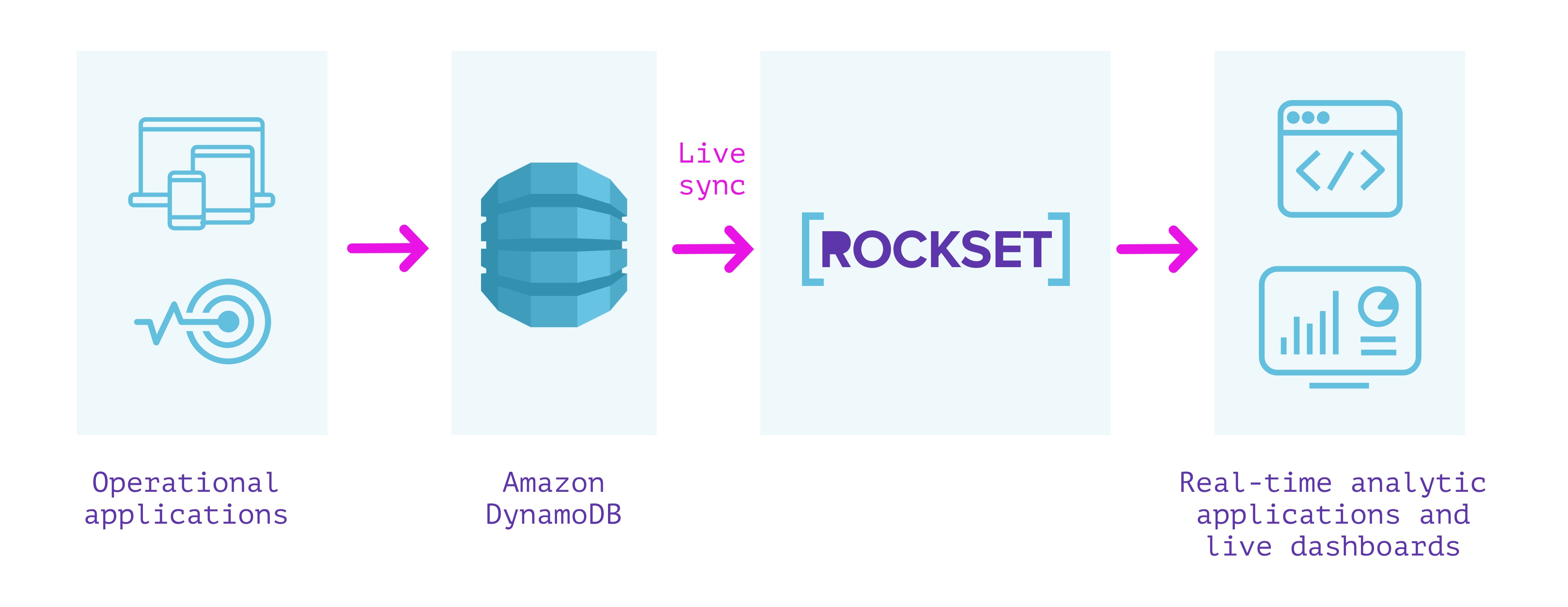

DynamoDB + Rockset

Rockset is a fully managed search and analytics database built primarily to support real-time applications with high QPS requirements. It is often used as an external secondary index for data from OLTP databases.

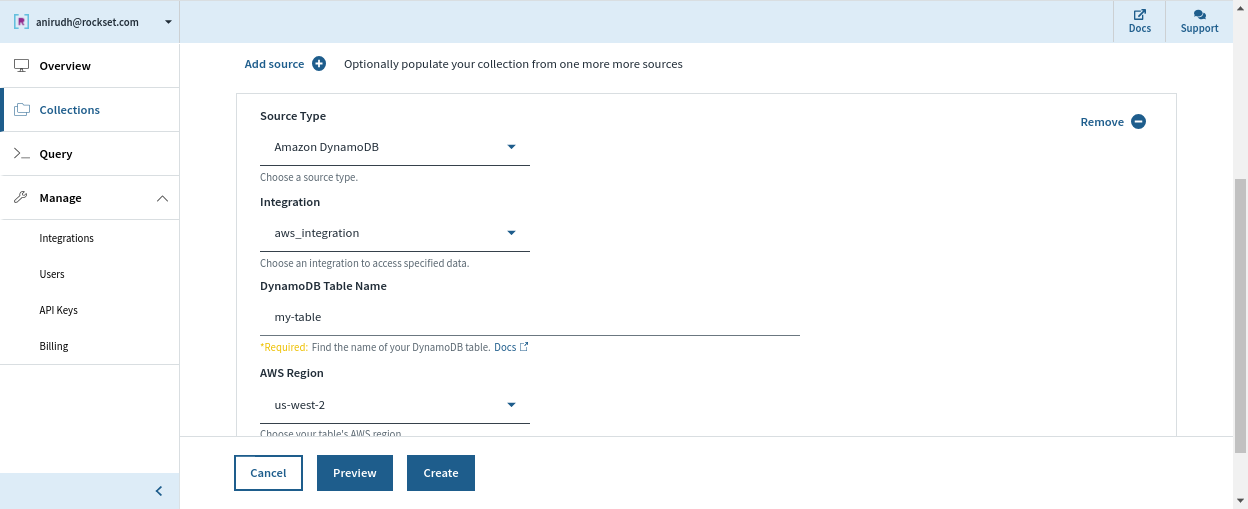

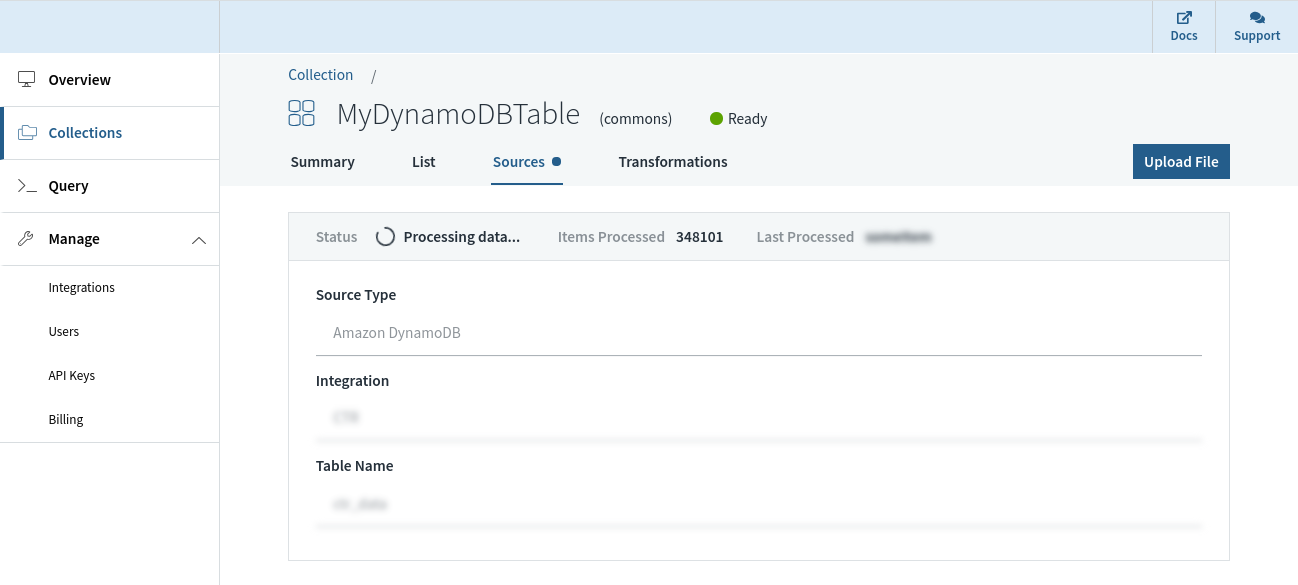

Rockset has a built-in connector for DynamoDB that can be used to keep data in sync between DynamoDB and Rockset. We specify the DynamoDB table we want to sync contents from and a Rockset collection that indexes the table. Rockset indexes the contents of the DynamoDB table in a full snapshot and then syncs new changes as they occur. The contents of the Rockset collection are always in sync with the DynamoDB source, no more than a few seconds apart in steady state.

Rockset manages data integrity and consistency between the DynamoDB table and the Rockset collection automatically, by monitoring the state of the stream and providing visibility into the streaming changes from DynamoDB.

Without a schema definition, a Rockset collection can automatically adapt when fields are added or removed, or when the structure or type of the data itself changes in DynamoDB. This is made possible by strong dynamic typing and smart schemas that obviate the need for any additional ETL.

The Rockset collection we sourced from DynamoDB supports SQL for querying and can easily be used by developers without having to learn a domain-specific language. It can also serve queries to applications over a REST API or through client libraries in multiple programming languages. The superset of ANSI SQL that Rockset supports can work natively on deeply nested JSON arrays and objects, and it leverages indexes that are automatically built over all fields to achieve millisecond latencies on even complex analytical queries.

Rockset has pioneered compute-compute separation, which allows workloads to be isolated in separate compute units while sharing the same underlying real-time data. This gives users greater resource efficiency when supporting simultaneous ingestion and queries, or multiple applications on the same data set.

In addition, Rockset takes care of security, data encryption, and role-based access control for managing access to the data. Rockset users can avoid the need for ETL by setting up ingest transformations in Rockset that modify the data as it arrives in a collection. Users can also optionally manage the lifecycle of the data by setting up retention policies that automatically purge older data. Both data ingestion and query serving are managed automatically, which lets us focus on building and deploying live dashboards and applications while removing the need for infrastructure management and operations.

Especially relevant when syncing with DynamoDB, Rockset supports in-place field-level updates, which avoids costly reindexing.

Summary

- Built to deliver high QPS and serve real-time applications

- Completely serverless. No operations or provisioning of infrastructure or database required

- Compute-compute separation for predictable performance and efficient resource utilization

- Live sync between DynamoDB and the Rockset collection, so that they are never more than a few seconds apart

- Monitoring to ensure consistency between DynamoDB and Rockset

- Automatic indexes built over the data, enabling low-latency queries

- In-place updates that avoid expensive reindexing and lower data latency

- Joins with data from other sources such as Amazon Kinesis, Apache Kafka, Amazon S3, etc.

We can use Rockset to implement real-time analytics over the data in DynamoDB without any operational, scaling, or maintenance concerns. This can significantly speed up the development of real-time applications. If you would like to build your application on DynamoDB data using Rockset, you can get started for free here.
