
Deep neural networks have become more and more relevant across various industries, and for good reason. When trained using supervised learning, they can be highly effective at solving various problems; however, to achieve optimal results, a large amount of training data is required. The data must be of high quality and representative of the production environment.

While large amounts of data are available online, most of it is unprocessed and not useful for machine learning (ML). Let’s assume we want to build a traffic light detector for autonomous driving. Training images should contain traffic lights and bounding boxes to accurately capture the borders of these traffic lights. But transforming raw data into organized, labeled, and useful data is time-consuming and challenging.

To optimize this process, I developed Cortex: The Biggest AI Dataset, a new SaaS product that focuses on image data labeling and computer vision but can be extended to different types of data and other artificial intelligence (AI) subfields. Cortex has various use cases that benefit many fields and image types:

- Improving model performance for fine-tuning of custom data sets: Pretraining a model on a large and diverse data set like Cortex can significantly improve the model’s performance when it is fine-tuned on a smaller, specialized data set. For instance, in the case of a cat breed identification app, pretraining a model on a diverse collection of cat images helps the model quickly recognize various features across different cat breeds. This improves the app’s accuracy in classifying cat breeds when fine-tuned on a specific data set.

- Training a model for general object detection: Because the data set contains labeled images of various objects, a model can be trained to detect and identify certain objects in images. One common example is the identification of cars, useful for applications such as automated parking systems, traffic management, law enforcement, and security. Besides car detection, the approach for general object detection can be extended to other MS COCO classes (the data set currently handles only MS COCO classes).

- Training a model for extracting object embeddings: Object embeddings refer to the representation of objects in a high-dimensional space. By training a model on Cortex, you can teach it to generate embeddings for objects in images, which can then be used for applications such as similarity search or clustering.

- Generating semantic metadata for images: Cortex can be used to generate semantic metadata for images, such as object labels. This can empower application users with additional insights and interactivity (e.g., clicking on objects in an image to learn more about them or seeing related images in a news portal). This feature is particularly advantageous for interactive learning platforms, in which users can explore objects (animals, vehicles, household items, etc.) in greater detail.
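To make the similarity search use case concrete, here is a minimal sketch of nearest-neighbor lookup over embeddings using cosine similarity. The three-dimensional vectors and their names are invented for illustration; real embeddings would come from a trained model and have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, candidates):
    """Return the name of the candidate embedding closest to the query."""
    return max(candidates, key=lambda name: cosine_similarity(query, candidates[name]))


# Toy vectors, invented for illustration only.
embeddings = {
    "tabby_cat": [0.9, 0.1, 0.0],
    "siamese_cat": [0.8, 0.2, 0.1],
    "pickup_truck": [0.0, 0.1, 0.9],
}
nearest = most_similar([1.0, 0.0, 0.0], embeddings)
```

A linear scan like this is fine for small collections; larger ones typically rely on an approximate nearest-neighbor index.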

Our Cortex walkthrough will focus on the last use case, extracting semantic metadata from website images and creating clickable bounding boxes over these images. When a user clicks on a bounding box, the system initiates a Google search for the MS COCO object class identified within it.
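One way to picture the clickable bounding box is as an HTML image-map area that links to a search for the detected class. The sketch below is an assumption about how such a front end could be wired, not a description of how Cortex does it; the helper names are hypothetical, and the detection fields (`classname`, `x1`, `y1`, `x2`, `y2`) follow the response format shown later in this article.

```python
from html import escape
from urllib.parse import urlencode


def google_search_url(classname):
    """Build a Google search URL for an object class name."""
    return "https://www.google.com/search?" + urlencode({"q": classname})


def area_tag(detection):
    """Render one detection as a rectangular, clickable image-map area."""
    coords = "{x1},{y1},{x2},{y2}".format(**detection)
    href = google_search_url(detection["classname"])
    return '<area shape="rect" coords="{}" href="{}" alt="{}">'.format(
        coords, escape(href), escape(detection["classname"]))


# Hypothetical detection used only to exercise the helpers.
box = {"classname": "traffic light", "x1": 10, "y1": 20, "x2": 110, "y2": 220}
tag = area_tag(box)
```

The resulting tag would sit inside a `<map>` element referenced by the image’s `usemap` attribute.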

The Importance of High-quality Data for Modern AI

Many subfields of modern AI, including computer vision, natural language processing (NLP), and tabular data analysis, have recently seen significant breakthroughs. All of these subfields share a common reliance on high-quality data. AI is only as good as the data it is trained on, and, as such, data-centric AI has become an increasingly important area of research. Techniques like transfer learning and synthetic data generation have been developed to address the issue of data scarcity, while data labeling and cleaning remain important for ensuring data quality.

In particular, labeled data plays a vital role in the development of modern AI models such as fine-tuned LLMs or computer vision models. It is easy to obtain trivial labels for pretraining language models, such as predicting the next word in a sentence. However, collecting labeled data for conversational AI models like ChatGPT is more complicated; these labels must demonstrate the desired behavior of the model to make it appear to create meaningful conversations. The challenges multiply when dealing with image labeling. To create models like DALL-E 2 and Stable Diffusion, a vast data set with labeled images and textual descriptions was necessary to train them to generate images based on user prompts.

Low-quality data for systems like ChatGPT would lead to poor conversational abilities, and low-quality data for image object bounding boxes would lead to inaccurate predictions, such as assigning the wrong classes to the wrong bounding boxes, failing to detect objects, and so on. Low-quality image data can also contain noisy and blurry images. Cortex aims to make high-quality data readily available to developers creating or training their image models, making the training process faster, more efficient, and predictable.

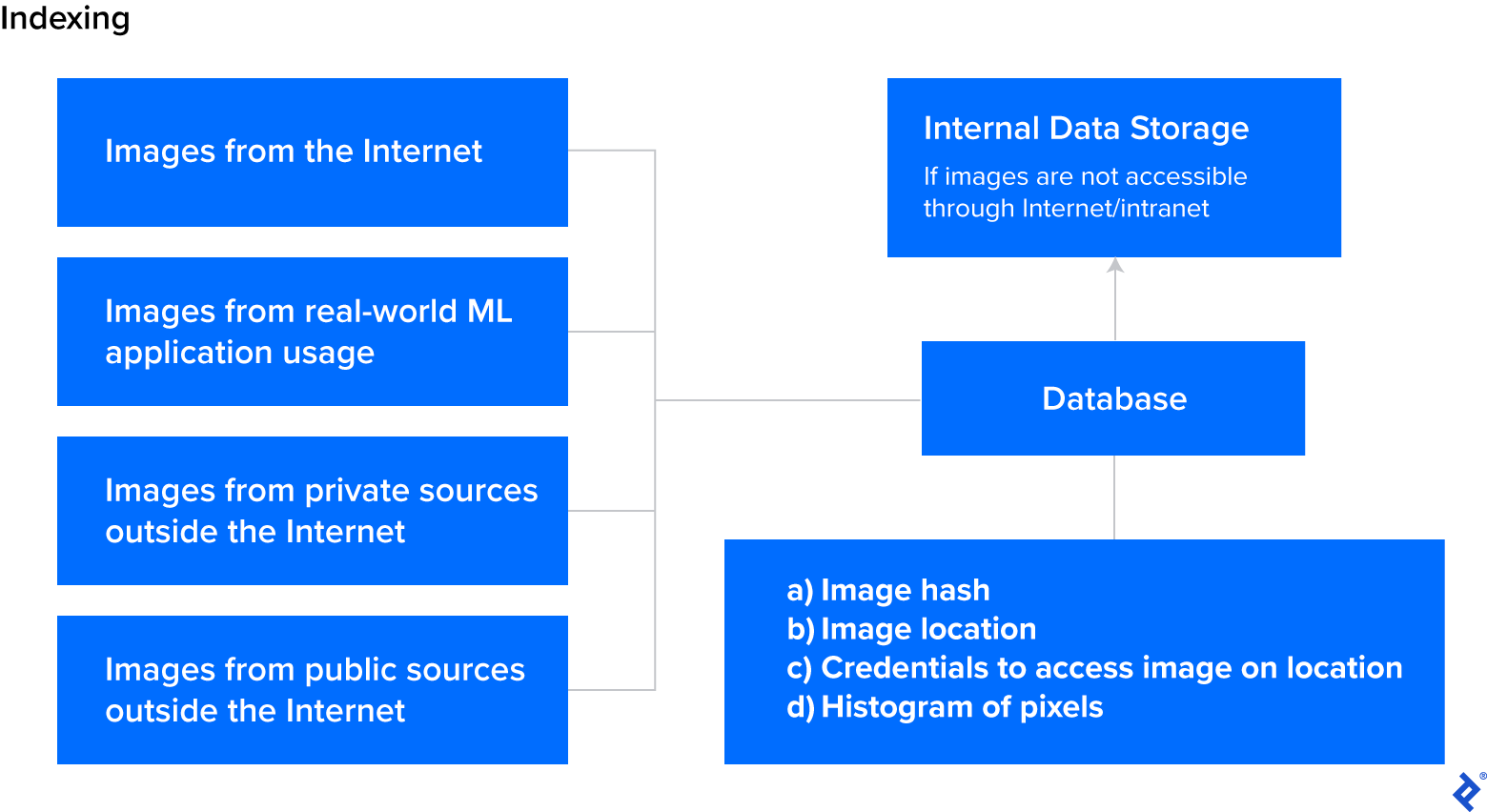

An Overview of Large Data Set Processing

Creating a large AI data set is a robust process that involves several stages. Typically, in the data collection phase, images are scraped from the Internet with stored URLs and structural attributes (e.g., image hash, image width and height, and histogram). Next, models perform automatic image labeling to add semantic metadata (e.g., image embeddings, object detection labels) to images. Finally, quality assurance (QA) efforts verify the accuracy of labels through rule-based and ML-based approaches.
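The structural attributes mentioned above can be sketched in a few lines. This is an illustration under stated assumptions: the function name is hypothetical, SHA-256 is assumed as the hash (the article does not say which one Cortex uses), and a flat list of grayscale values stands in for a decoded image.

```python
import hashlib
from collections import Counter


def structural_attributes(raw_bytes, width, height, gray_pixels):
    """Collect a content hash, the dimensions, and a 256-bin intensity histogram."""
    if len(gray_pixels) != width * height:
        raise ValueError("pixel count does not match width * height")
    counts = Counter(gray_pixels)
    return {
        "hash": hashlib.sha256(raw_bytes).hexdigest(),  # hash choice is an assumption
        "width": width,
        "height": height,
        "histogram": [counts.get(value, 0) for value in range(256)],
    }


# A tiny 2x2 grayscale "image" stands in for a real decoded download.
pixels = [0, 0, 255, 128]
attrs = structural_attributes(bytes(pixels), 2, 2, pixels)
```

Hashing the raw bytes makes exact duplicates easy to spot, while the histogram gives a cheap summary of brightness distribution.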

Data Collection

There are various methods of obtaining data for AI systems, each with its own set of advantages and disadvantages:

- Labeled data sets: These are created by researchers to solve specific problems. These data sets, such as MNIST and ImageNet, already contain labels for model training. Platforms like Kaggle provide a space for sharing and discovering such data sets, but these are typically intended for research, not commercial use.

- Private data: This type is proprietary to organizations and is usually rich in domain-specific information. However, it often needs additional cleaning, data labeling, and possibly consolidation from different subsystems.

- Public data: This data is freely accessible online and collectible via web crawlers. This approach can be time-consuming, especially if data is stored on high-latency servers.

- Crowdsourced data: This type involves engaging human workers to collect real-world data. The quality and format of the data can be inconsistent due to variations in individual workers’ output.

- Synthetic data: This data is generated by applying controlled modifications to existing data. Synthetic data techniques include generative adversarial networks (GANs) or simple image augmentations, proving especially useful when substantial data is already available.

When building AI systems, obtaining the right data is crucial to ensure effectiveness and accuracy.
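As an example of the simple image augmentations mentioned for synthetic data, the sketch below mirrors an image horizontally and adjusts its bounding boxes to match. The nested-list image and the convention that `x2` is an exclusive right edge are assumptions made to keep the example dependency-free.

```python
def flip_horizontal(image, boxes):
    """Mirror a row-major image and its boxes around the vertical axis."""
    width = len(image[0])
    flipped_image = [list(reversed(row)) for row in image]
    # The old right edge becomes the new left edge, and vice versa.
    flipped_boxes = [
        {**box, "x1": width - box["x2"], "x2": width - box["x1"]}
        for box in boxes
    ]
    return flipped_image, flipped_boxes


image = [[1, 2, 3, 4],
         [5, 6, 7, 8]]
boxes = [{"classname": "cat", "x1": 0, "y1": 0, "x2": 1, "y2": 2}]
new_image, new_boxes = flip_horizontal(image, boxes)
```

The label has to move with the pixels; forgetting that step is an easy way to introduce low-quality labels into an augmented data set.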

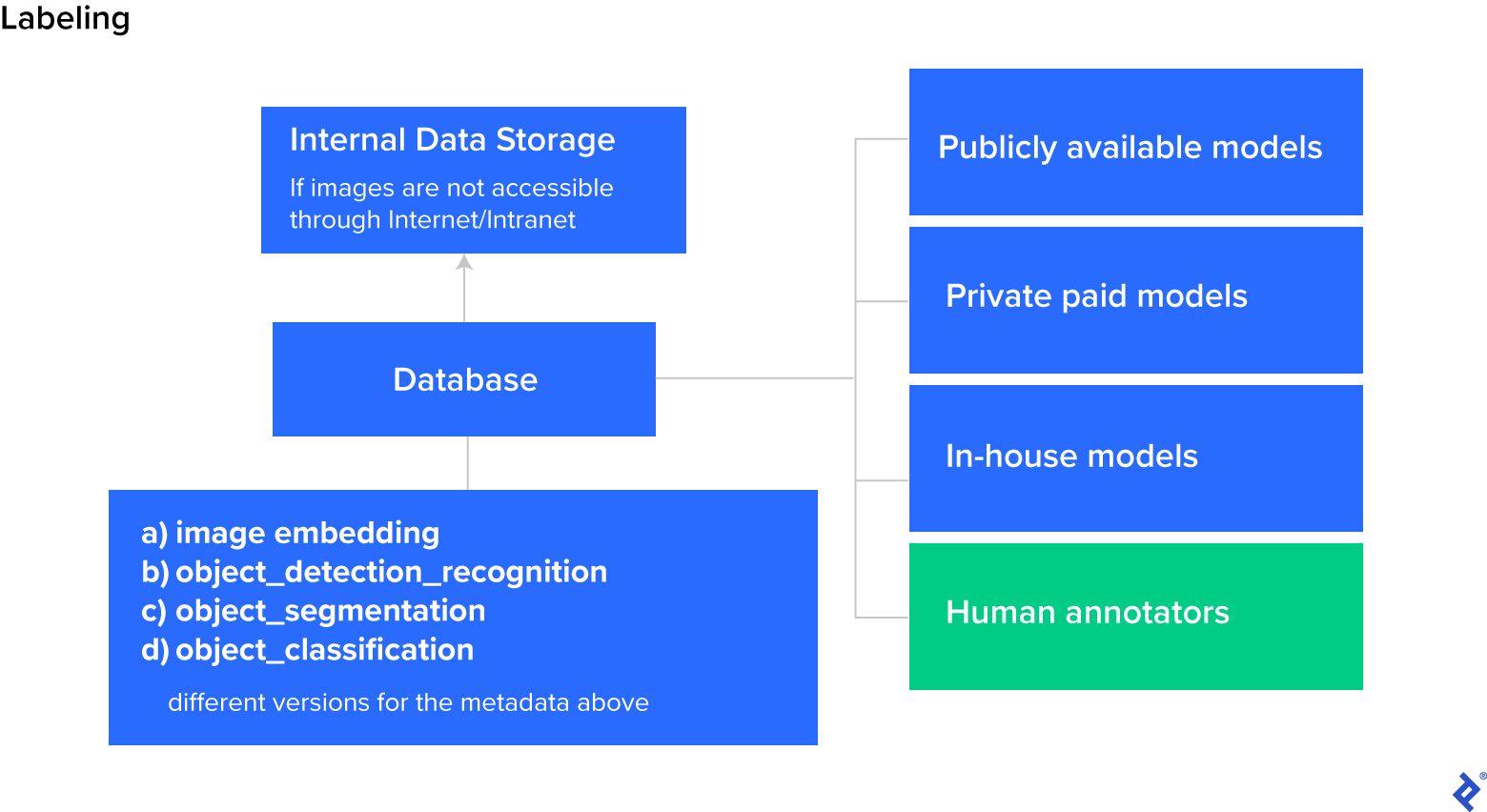

Data Labeling

Data labeling refers to the process of assigning labels to data samples so that the AI system can learn from them. The most common data labeling methods are the following:

- Manual data labeling: This is the most straightforward approach. A human annotator examines each data sample and manually assigns a label to it. This approach can be time-consuming and expensive, but it is often necessary for data that requires specific domain expertise or is highly subjective.

- Rule-based labeling: This is an alternative to manual labeling that involves creating a set of rules or algorithms to assign labels to data samples. For example, when creating labels for video frames, instead of manually annotating every possible frame, you can annotate the first and last frame and programmatically interpolate for frames in between.

- ML-based labeling: This approach involves using existing machine learning models to produce labels for new data samples. For example, a model might be trained on a large data set of labeled images and then used to automatically label images. While this approach requires a great many labeled images for training, it can be particularly efficient, and a recent paper suggests that ChatGPT is already outperforming crowdworkers for text annotation tasks.

The choice of labeling method depends on the complexity of the data and the available resources. By carefully selecting and implementing the appropriate data labeling method, researchers and practitioners can create high-quality labeled data sets to train increasingly advanced AI models.
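The video-frame example of rule-based labeling can be sketched as linear interpolation between two annotated boxes. This is a minimal illustration that assumes the object moves at a roughly constant rate between the first and last frame; the function name and the box values are hypothetical.

```python
def interpolate_boxes(first_box, last_box, num_frames):
    """Linearly interpolate box corners across num_frames frames, endpoints included."""
    if num_frames < 2:
        raise ValueError("need at least the first and last frame")
    frames = []
    for i in range(num_frames):
        t = i / (num_frames - 1)  # 0.0 at the first frame, 1.0 at the last
        frames.append({
            key: round(first_box[key] + t * (last_box[key] - first_box[key]))
            for key in ("x1", "y1", "x2", "y2")
        })
    return frames


first = {"x1": 0, "y1": 0, "x2": 100, "y2": 100}
last = {"x1": 40, "y1": 20, "x2": 140, "y2": 120}
track = interpolate_boxes(first, last, 5)
```

Only two frames are annotated by hand here, and the remaining three labels are derived; for erratic motion, annotating more keyframes keeps the interpolation error small.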

Quality Assurance

Quality assurance ensures that the data and labels used for training are accurate, consistent, and relevant to the task at hand. The most common QA methods mirror data labeling methods:

- Manual QA: This approach involves manually reviewing data and labels to check for accuracy and relevance.

- Rule-based QA: This method employs predefined rules to check data and labels for accuracy and consistency.

- ML-based QA: This method uses machine learning algorithms to detect errors or inconsistencies in data and labels automatically.
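A rule-based QA pass can be as simple as a handful of sanity checks per label. The sketch below is one possible set of rules, not the rules Cortex applies; the field names follow the detection format shown later in this article, and the class list is a small hand-picked subset of MS COCO.

```python
ALLOWED_CLASSES = {"person", "car", "traffic light", "cat", "dog"}  # subset of MS COCO


def box_problems(detection, width, height):
    """Return a list of rule violations for one detection (empty means it passed)."""
    problems = []
    if detection["classname"] not in ALLOWED_CLASSES:
        problems.append("unknown class")
    if not 0.0 <= detection["conf"] <= 1.0:
        problems.append("confidence out of range")
    if not 0 <= detection["x1"] < detection["x2"] <= width:
        problems.append("bad horizontal extent")
    if not 0 <= detection["y1"] < detection["y2"] <= height:
        problems.append("bad vertical extent")
    return problems


good = {"classname": "cat", "conf": 0.98, "x1": 276, "y1": 218, "x2": 1092, "y2": 1539}
bad = {"classname": "cat", "conf": 0.98, "x1": 900, "y1": 218, "x2": 1300, "y2": 1539}
good_result = box_problems(good, width=1200, height=1602)
bad_result = box_problems(bad, width=1200, height=1602)
```

Checks like these are cheap to run over an entire data set and catch structural errors before any manual or ML-based review.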

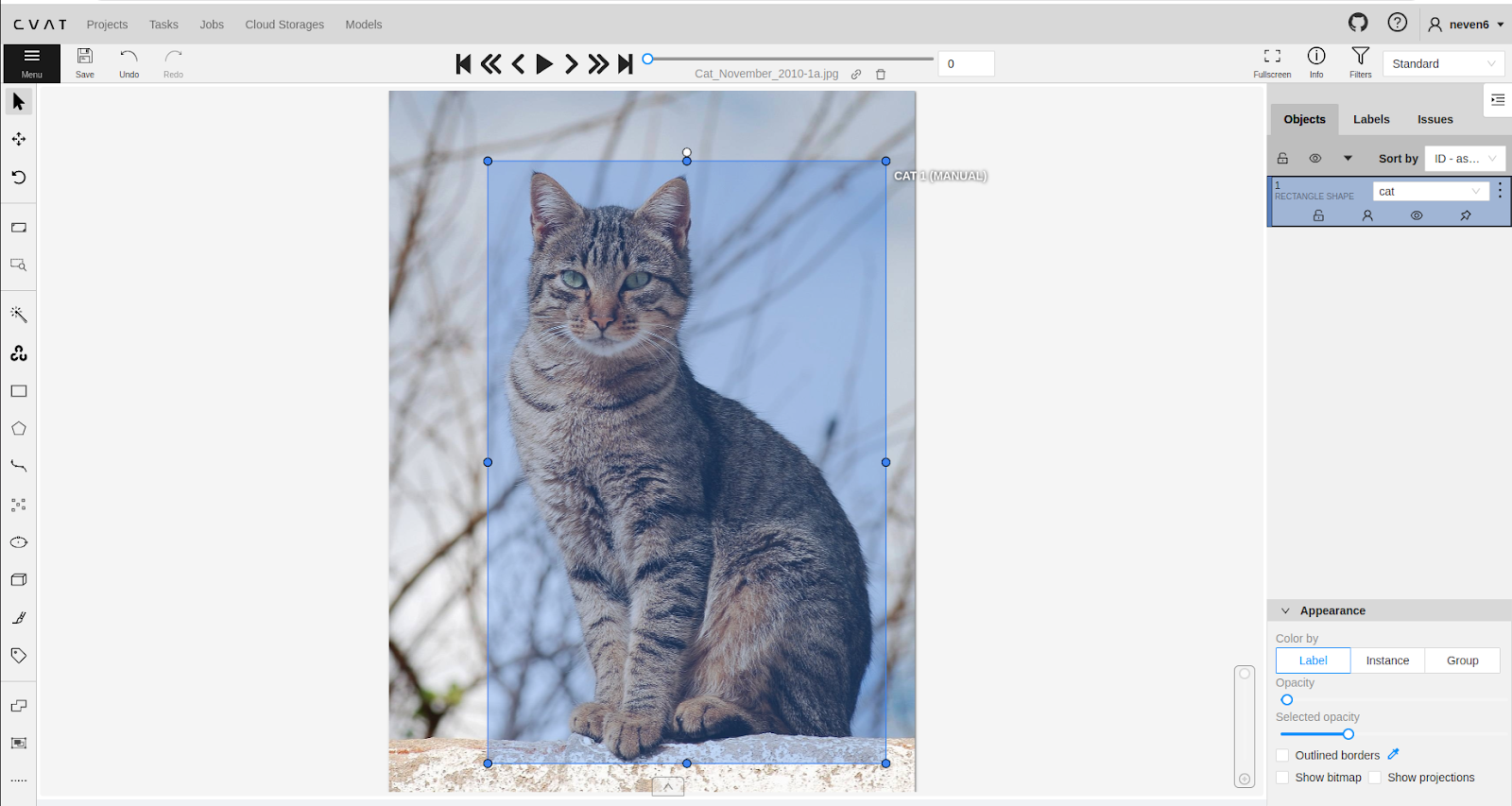

One of the ML-based tools available for QA is FiftyOne, an open-source toolkit for building high-quality data sets and computer vision models. For manual QA, human annotators can use tools like CVAT to improve efficiency. Relying on human annotators is the most expensive and least desirable option, and should only be done if automated annotators don’t produce high-quality labels.

When validating data processing efforts, the level of detail required for labeling should match the needs of the task at hand. Some applications may require precision down to the pixel level, while others may be more forgiving.

QA is a crucial step in building high-quality neural network models; it verifies that these models are effective and reliable. Whether you use manual, rule-based, or ML-based QA, it is important to be diligent and thorough to ensure the best outcome.

Cortex Walkthrough: From URL to Labeled Image

Cortex uses both manual and automated processes to collect and label the data and perform QA; however, the goal is to reduce manual work by feeding human outputs to rule-based and ML algorithms.

Cortex samples consist of URLs that reference the original images, which are scraped from the Common Crawl database. Data points are labeled with object bounding boxes. Object classes are MS COCO classes, like “person,” “car,” or “traffic light.” To use the data set, users must download the images they are interested in from the given URLs using img2dataset. Labels in the context of Cortex are called semantic metadata as they give the data meaning and expose useful information hidden in every single data sample (e.g., image width and height).
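Because the data set stores URLs rather than pixels, the first practical step is turning a batch of records into a URL list that a downloader can consume. The helper below is a hypothetical sketch; it assumes each record carries a `url` field and writes one URL per line, which is the plain-text input format img2dataset accepts.

```python
import os
import tempfile


def write_url_list(records, path):
    """Write one image URL per line, skipping duplicates, and return the count."""
    seen = set()
    with open(path, "w", encoding="utf-8") as handle:
        for record in records:
            url = record["url"]
            if url not in seen:
                seen.add(url)
                handle.write(url + "\n")
    return len(seen)


# Placeholder records; real ones would come from a Cortex response.
records = [
    {"url": "https://example.com/a.jpg"},
    {"url": "https://example.com/b.jpg"},
    {"url": "https://example.com/a.jpg"},  # duplicate, written only once
]
list_path = os.path.join(tempfile.mkdtemp(), "urls.txt")
written = write_url_list(records, list_path)
```

The resulting file can then be passed to the downloader, for example with `img2dataset --url_list=urls.txt --output_folder=images`; check the img2dataset documentation for the options that suit your setup.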

The Cortex data set also includes a filtering feature that enables users to search the database to retrieve specific images. Additionally, it offers an interactive image labeling feature that allows users to provide links to images that are not indexed in the database. The system then dynamically annotates the images and presents the semantic metadata and structural attributes for the images at that specific URL.

Code Examples and Implementation

Cortex lives on RapidAPI and allows free semantic metadata and structural attribute extraction for any URL on the Internet. The paid version allows users to get batches of scraped labeled data from the Internet using filters for bulk image labeling.

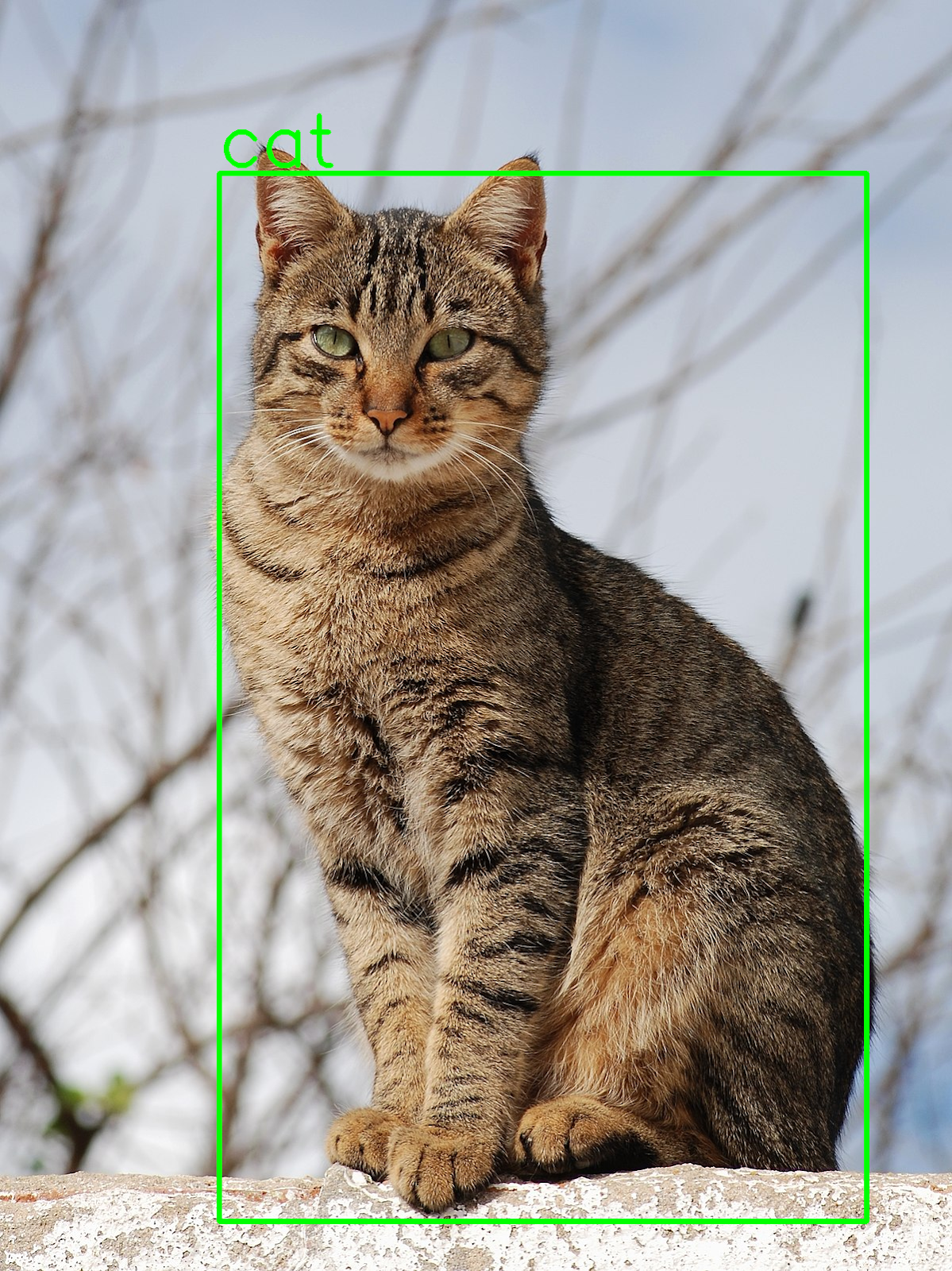

The Python code example presented in this section demonstrates how to use Cortex to get semantic metadata and structural attributes for a given URL and draw bounding boxes for object detection. As the system evolves, functionality will be expanded to include additional attributes, such as a histogram, pose estimation, and so on. Every additional attribute adds value to the processed data and makes it suitable for more use cases.

    import cv2
    import json
    import requests
    import numpy as np

    cortex_url = 'https://cortex-api.piculjantechnologies.ai/upload'
    img_url = 'https://upload.wikimedia.org/wikipedia/commons/thumb/4/4d/Cat_November_2010-1a.jpg/1200px-Cat_November_2010-1a.jpg'

    # Download the image and decode it into an OpenCV array
    req = requests.get(img_url)
    png_as_np = np.frombuffer(req.content, dtype=np.uint8)
    img = cv2.imdecode(png_as_np, -1)

    # Ask Cortex for the semantic metadata and structural attributes of the image
    data = {'url_or_id': img_url}
    response = requests.post(cortex_url, data=json.dumps(data), headers={'Content-Type': 'application/json'})
    content = json.loads(response.content)

    # Draw every detected bounding box and its class name onto the image
    object_analysis = content['object_analysis'][0]
    for i in range(len(object_analysis)):
        x1 = object_analysis[i]['x1']
        y1 = object_analysis[i]['y1']
        x2 = object_analysis[i]['x2']
        y2 = object_analysis[i]['y2']
        classname = object_analysis[i]['classname']
        cv2.rectangle(img, (x1, y1), (x2, y2), (0, 255, 0), 5)
        cv2.putText(img, classname,
                    (x1, y1 - 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 3, (0, 255, 0), 5)

    cv2.imwrite('visualization.png', img)

The contents of the response look like this:

    {
      "_id":"PT::63b54db5e6ca4c53498bb4e5",
      "url":"https://upload.wikimedia.org/wikipedia/commons/thumb/4/4d/Cat_November_2010-1a.jpg/1200px-Cat_November_2010-1a.jpg",
      "datetime":"2023-01-04 09:58:14.082248",
      "object_analysis_processed":"true",
      "pose_estimation_processed":"false",
      "face_analysis_processed":"false",
      "type":"image",
      "height":1602,
      "width":1200,
      "hash":"d0ad50c952a9a153fd7b0f9765dec721f24c814dbe2ca1010d0b28f0f74a2def",
      "object_analysis":[
        [
          {
            "classname":"cat",
            "conf":0.9876543879508972,
            "x1":276,
            "y1":218,
            "x2":1092,
            "y2":1539
          }
        ]
      ],
      "label_quality_estimation":2.561230587616592e-7
    }

Let’s take a closer look and outline what each piece of information can be used for:

- `_id` is the internal identifier used for indexing the data and is self-explanatory.

- `url` is the URL of the image, which allows us to see where the image originated and to potentially filter images from certain sources.

- `datetime` shows the date and time when the image was first seen by the process. This data can be crucial for time-sensitive applications, e.g., when processing images from a real-time source such as a livestream.

- `object_analysis_processed`, `pose_estimation_processed`, and `face_analysis_processed` flags tell if the labels for object analysis, pose estimation, and face analysis have been created.

- `type` denotes the type of data (e.g., image, audio, video). Since Cortex is currently limited to image data, this flag will be expanded with other types of data in the future.

- `height` and `width` are self-explanatory structural attributes and provide the height and width of the sample.

- `hash` is self-explanatory and displays the hashed key.

- `object_analysis` contains information about object analysis labels and displays crucial semantic metadata information, such as the class name and level of confidence.

- `label_quality_estimation` contains the label quality score, ranging in value from 0 (poor quality) to 1 (good quality). The score is calculated using ML-based QA for labels.
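To show how these fields combine in practice, the sketch below finds confident detections that cover a large share of the image. The helper names and both thresholds are assumptions chosen for illustration; the sample values are taken from the response above.

```python
def box_area_fraction(detection, width, height):
    """Share of the image area covered by one bounding box."""
    box_area = (detection["x2"] - detection["x1"]) * (detection["y2"] - detection["y1"])
    return box_area / (width * height)


def dominant_objects(content, min_conf=0.5, min_fraction=0.5):
    """Class names of confident detections covering at least min_fraction of the image."""
    detections = content["object_analysis"][0]
    return [
        d["classname"] for d in detections
        if d["conf"] >= min_conf
        and box_area_fraction(d, content["width"], content["height"]) >= min_fraction
    ]


# Trimmed copy of the sample response shown above.
content = {
    "height": 1602,
    "width": 1200,
    "object_analysis": [[
        {"classname": "cat", "conf": 0.9876543879508972,
         "x1": 276, "y1": 218, "x2": 1092, "y2": 1539},
    ]],
}
fraction = box_area_fraction(content["object_analysis"][0][0], 1200, 1602)
dominant = dominant_objects(content)
```

For the sample image, the cat’s box covers roughly 56% of the frame, so it passes the default 50% threshold.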

The Python code snippet writes its result to visualization.png: the original image with each detected bounding box and class name drawn over it in green.

The next code snippet shows how to use the paid version of Cortex to filter and get URLs of images scraped from the Internet:

    import json
    import requests

    url = 'https://cortex4.p.rapidapi.com/get-labeled-data'

    # 'q' carries the MongoDB filter as a JSON string; 'page' selects the result page
    querystring = {'page': '1',
                   'q': '{"object_analysis": {"$elemMatch": {"$elemMatch": {"classname": "cat"}}}, "width": {"$gt": 100}}'}

    headers = {
        'X-RapidAPI-Key': 'SIGN-UP-FOR-KEY',
        'X-RapidAPI-Host': 'cortex4.p.rapidapi.com'
    }

    response = requests.request("GET", url, headers=headers, params=querystring)
    content = json.loads(response.content)

The endpoint uses a MongoDB Query Language query (`q`) to filter the database based on semantic metadata and reads the page number from the query parameter named `page`.
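Hand-writing the filter as a JSON string is error-prone once queries grow. A small sketch of an alternative is to build the filter as a Python dictionary and serialize it with `json.dumps`; the helper name and its parameters are hypothetical, while the filter structure matches the example query above.

```python
import json


def build_querystring(classname, min_width, page=1):
    """Build the 'page' and 'q' parameters for a class-and-width filter."""
    mongo_filter = {
        # Nested $elemMatch because object_analysis is a list of lists of detections
        "object_analysis": {"$elemMatch": {"$elemMatch": {"classname": classname}}},
        "width": {"$gt": min_width},
    }
    return {"page": str(page), "q": json.dumps(mongo_filter)}


querystring = build_querystring("cat", 100)
```

The returned dictionary can be passed as `params` in the request exactly like the hand-written version.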

The example query returns images containing object analysis semantic metadata with the classname `cat` and a width greater than 100 pixels. The content of the response looks like this:

    {
      "output":[
        {
          "_id":"PT::639339ad4552ef52aba0b372",
          "url":"https://teamglobalasset.com/rtp/PP/31.png",
          "datetime":"2022-12-09 13:35:41.733010",
          "object_analysis_processed":"true",
          "pose_estimation_processed":"false",
          "face_analysis_processed":"false",
          "source":"commoncrawl",
          "type":"image",
          "height":234,
          "width":325,
          "hash":"bf2f1a63ecb221262676c2650de5a9c667ef431c7d2350620e487b029541cf7a",
          "object_analysis":[
            [
              {
                "classname":"cat",
                "conf":0.9602264761924744,
                "x1":245,
                "y1":65,
                "x2":323,
                "y2":176
              },
              {
                "classname":"dog",
                "conf":0.8493766188621521,
                "x1":68,
                "y1":18,
                "x2":255,
                "y2":170
              }
            ]
          ],
          "label_quality_estimation":3.492028982676312e-18
        }, … <up to 25 data points in total>
      ],
      "length":1454
    }

The output contains up to 25 data points on a given page, along with semantic metadata, structural attributes, and information about the source from where the image is scraped (`commoncrawl` in this case). It also exposes the total query length in the `length` key.
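With 25 data points per page and the total count in the response, walking through all results is a matter of simple arithmetic. The sketch below assumes the total is exposed under a key named `length` and takes the fetching function as a parameter; `fake_fetch` is a hypothetical stand-in for the real request so the example runs without an API key.

```python
import math

PAGE_SIZE = 25  # "up to 25 data points" per page


def page_count(total_length, page_size=PAGE_SIZE):
    """Number of pages needed to cover total_length results."""
    return math.ceil(total_length / page_size)


def collect_urls(fetch_page, max_pages=None):
    """Gather image URLs page by page; fetch_page(page) returns a parsed response."""
    first = fetch_page(1)
    pages = page_count(first["length"])
    if max_pages is not None:
        pages = min(pages, max_pages)
    urls = [item["url"] for item in first["output"]]
    for page in range(2, pages + 1):
        urls.extend(item["url"] for item in fetch_page(page)["output"])
    return urls


def fake_fetch(page):
    """Hypothetical stand-in that serves 60 placeholder results in pages of 25."""
    total = 60
    start = (page - 1) * PAGE_SIZE
    stop = min(start + PAGE_SIZE, total)
    return {
        "output": [{"url": "https://example.com/%d.png" % i} for i in range(start, stop)],
        "length": total,
    }


all_urls = collect_urls(fake_fetch)
```

For the example query above, `page_count(1454)` gives 59 pages. Passing `max_pages` keeps a trial run from consuming a paid quota.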

Foundation Models and ChatGPT Integration

Foundation models, or AI models trained on a large amount of unlabeled data through self-supervised learning, have revolutionized the field of AI since their introduction in 2018. Foundation models can be further fine-tuned for specialized purposes (e.g., mimicking a certain person’s writing style) using small amounts of labeled data, allowing them to be adapted to a variety of different tasks.

Cortex’s labeled data sets can be used as a reliable source of data to make pretrained models an even better starting point for a wide variety of tasks, and those models are one step above foundation models that still use labels for pretraining in a self-supervised manner. By leveraging vast amounts of data labeled by Cortex, AI models can be pretrained more effectively and produce more accurate results when fine-tuned. What sets Cortex apart from other solutions is its scale and diversity: the data set constantly grows, and new data points with diverse labels are added regularly. At the time of publication, the total number of data points was more than 20 million.

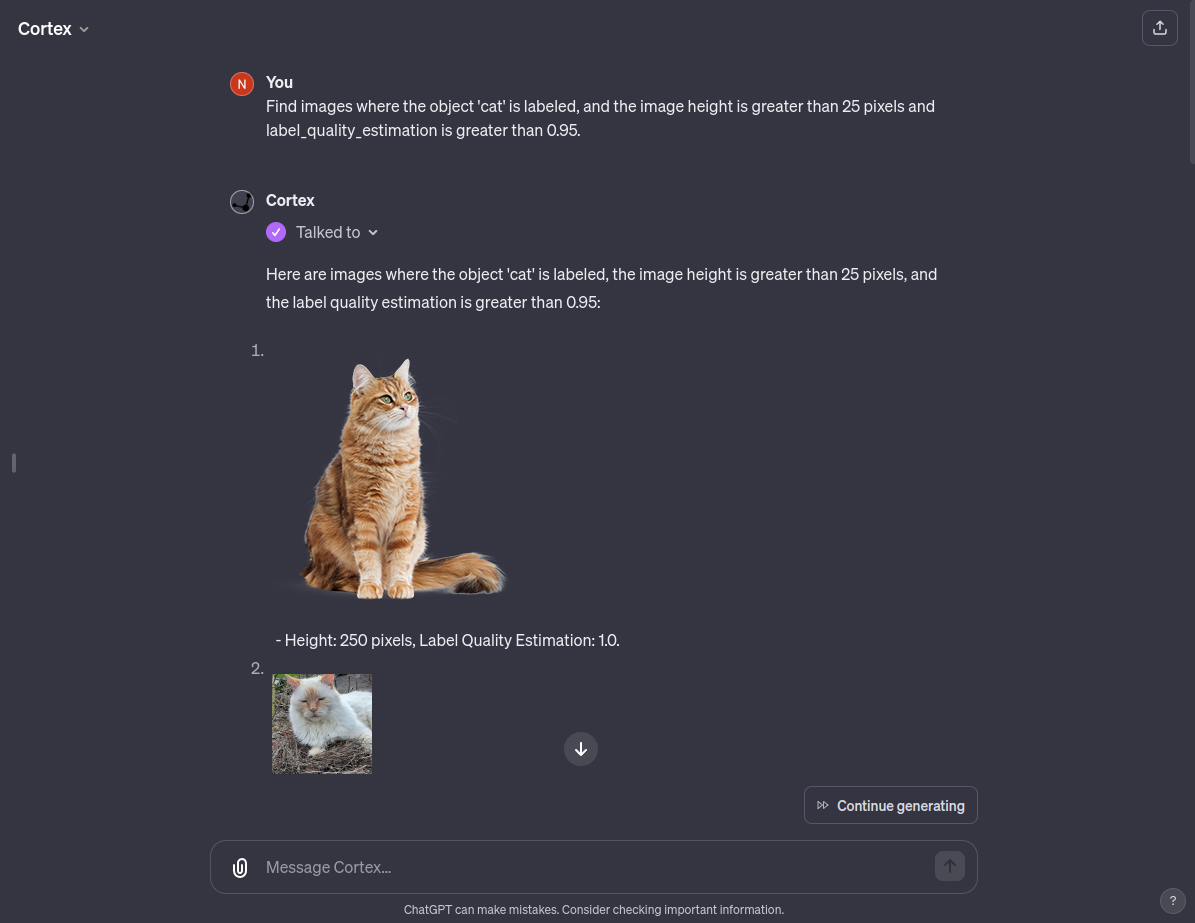

Cortex also offers a customized ChatGPT chatbot, giving users unparalleled access to and utilization of a comprehensive database filled with meticulously labeled data. This user-friendly functionality improves ChatGPT’s capabilities, providing it with deep access to both semantic and structural metadata for images; we plan to extend it to other types of data beyond images.

With the current state of Cortex, users can ask this customized ChatGPT to provide a list of images containing certain objects that consume most of the image’s space or images containing multiple objects. Customized ChatGPT can understand deep semantics and search for specific types of images based on a simple prompt. With future refinements that will introduce diverse object classes to Cortex, the custom GPT could act as a powerful image search chatbot.

Image Data Labeling as the Backbone of AI Systems

We are surrounded by large amounts of data, but unprocessed raw data is mostly irrelevant from a training perspective, and has to be refined to build successful AI systems. Cortex tackles this challenge by helping transform vast quantities of raw data into valuable data sets. The ability to quickly refine raw data reduces reliance on third-party data and services, speeds up training, and enables the creation of more accurate, customized AI models.

The system currently returns semantic metadata for object analysis along with a quality estimate, but will eventually support face analysis, pose estimation, and visual embeddings. There are also plans to support modalities other than images, such as video, audio, and text data. The system currently returns width and height structural attributes, but it will support a histogram of pixels as well.

As AI systems become more commonplace, demand for quality data is bound to go up, and the way we collect and process data will evolve. Current AI solutions are only as good as the data they are trained on, and can be extremely effective and powerful when meticulously trained on large amounts of quality data. The ultimate goal is to use Cortex to index as much publicly available data as possible and assign semantic metadata and structural attributes to it, creating a valuable repository of high-quality labeled data needed to train the AI systems of tomorrow.

The editorial team of the Toptal Engineering Blog extends its gratitude to Shanglun Wang for reviewing the code samples and other technical content presented in this article.

All data set images and sample images courtesy of Pičuljan Technologies.
