
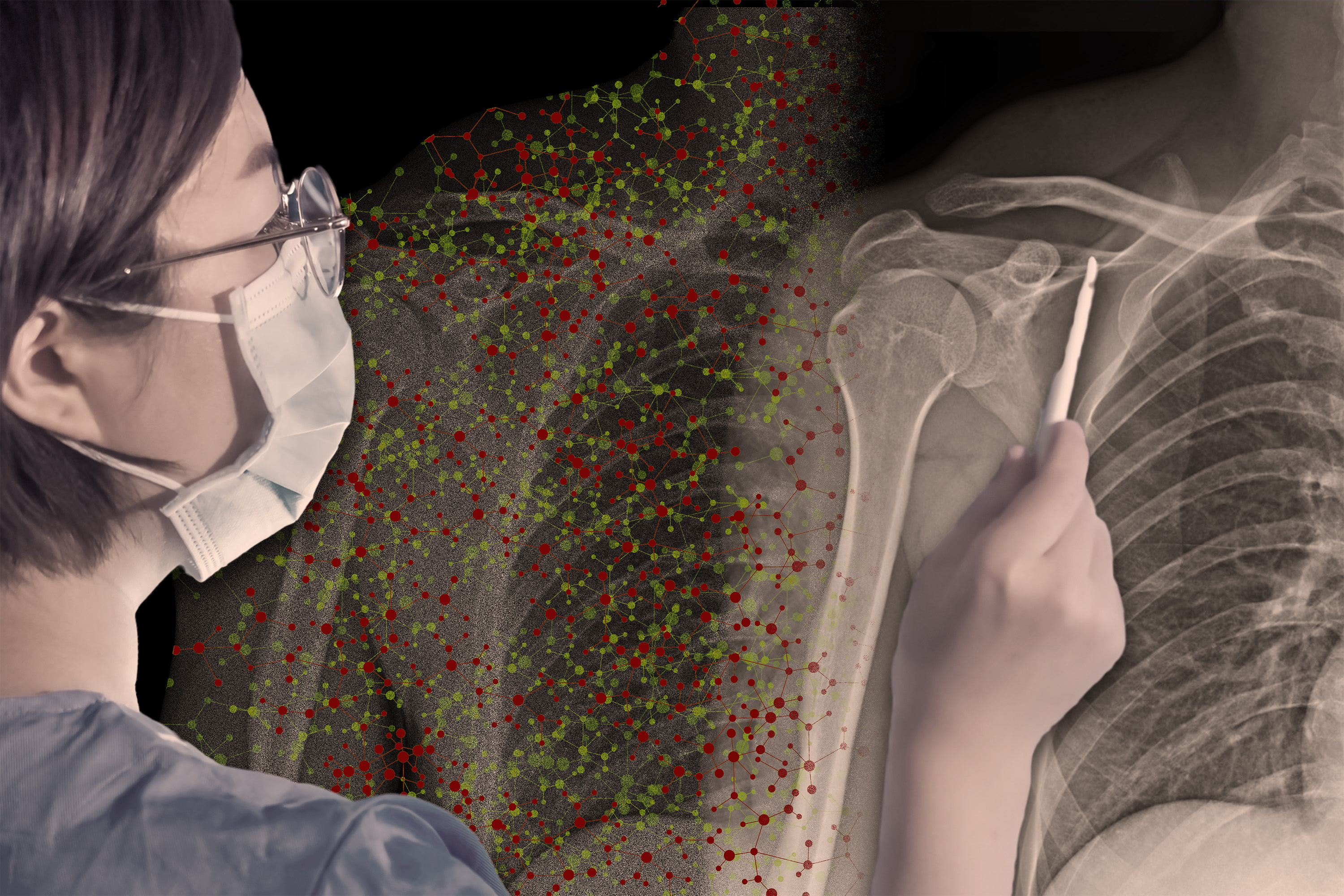

Artificial intelligence models that pick out patterns in images can often do so better than human eyes, but not always. If a radiologist is using an AI model to help her determine whether a patient’s X-rays show signs of pneumonia, when should she trust the model’s advice and when should she ignore it?

A customized onboarding process could help this radiologist answer that question, according to researchers at MIT and the MIT-IBM Watson AI Lab. They designed a system that teaches a user when to collaborate with an AI assistant.

In this case, the training method might find situations where the radiologist trusts the model’s advice when she shouldn’t, because the model is wrong. The system automatically learns rules for how she should collaborate with the AI, and describes them in natural language.

During onboarding, the radiologist practices collaborating with the AI using training exercises based on these rules, receiving feedback about her performance and the AI’s performance.

The researchers found that this onboarding procedure led to about a 5 percent improvement in accuracy when humans and AI collaborated on an image prediction task. Their results also show that just telling the user when to trust the AI, without training, led to worse performance.

Importantly, the researchers’ system is fully automated, so it learns to create the onboarding process based on data from the human and AI performing a specific task. It can also adapt to different tasks, so it can be scaled up and used in many situations where humans and AI models work together, such as in social media content moderation, writing, and programming.

“So often, people are given these AI tools to use without any training to help them figure out when it is going to be helpful. That’s not what we do with nearly every other tool that people use; there is almost always some kind of tutorial that comes with it. But for AI, this seems to be missing. We are trying to tackle this problem from a methodological and behavioral perspective,” says Hussein Mozannar, a graduate student in the Social and Engineering Systems doctoral program within the Institute for Data, Systems, and Society (IDSS) and lead author of a paper about this training process.

The researchers envision that such onboarding will be a crucial part of training for medical professionals.

“One could imagine, for example, that doctors making treatment decisions with the help of AI will first have to do training similar to what we propose. We may need to rethink everything from continuing medical education to the way clinical trials are designed,” says senior author David Sontag, a professor of EECS, a member of the MIT-IBM Watson AI Lab and the MIT Jameel Clinic, and the leader of the Clinical Machine Learning Group of the Computer Science and Artificial Intelligence Laboratory (CSAIL).

Mozannar, who is also a researcher with the Clinical Machine Learning Group, is joined on the paper by Jimin J. Lee, an undergraduate in electrical engineering and computer science; Dennis Wei, a senior research scientist at IBM Research; and Prasanna Sattigeri and Subhro Das, research staff members at the MIT-IBM Watson AI Lab. The paper will be presented at the Conference on Neural Information Processing Systems.

Training that evolves

Existing onboarding methods for human-AI collaboration are often composed of training materials produced by human experts for specific use cases, making them difficult to scale up. Some related techniques rely on explanations, where the AI tells the user its confidence in each decision, but research has shown that explanations are rarely helpful, Mozannar says.

“The AI model’s capabilities are constantly evolving, so the use cases where the human could potentially benefit from it are growing over time. At the same time, the user’s perception of the model continues changing. So, we need a training procedure that also evolves over time,” he adds.

To accomplish this, their onboarding method is automatically learned from data. It is built from a dataset that contains many instances of a task, such as detecting the presence of a traffic light in a blurry image.

The system’s first step is to collect data on the human and AI performing this task. In this case, the human would try to predict, with the help of AI, whether blurry images contain traffic lights.

The system embeds these data points in a latent space, which is a representation of data in which similar data points are closer together. It uses an algorithm to discover regions of this space where the human collaborates incorrectly with the AI. These regions capture instances where the human trusted the AI’s prediction but the prediction was wrong, and vice versa.

Perhaps the human mistakenly trusts the AI when images show a highway at night.
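The region-discovery step can be illustrated with a toy sketch. This is not the researchers’ algorithm: it assumes a plain k-means clustering as a stand-in, and the function names, the 2-D embeddings, and the eight hand-made data points are all hypothetical. It only shows the idea of flagging collaboration mistakes and then ranking areas of the embedding space by how often those mistakes occur.

```python
import numpy as np

def collaboration_errors(relied_on_ai, ai_correct, human_correct):
    """Flag instances where the human made the wrong call about the AI."""
    relied_on_ai = np.asarray(relied_on_ai, dtype=bool)
    ai_correct = np.asarray(ai_correct, dtype=bool)
    human_correct = np.asarray(human_correct, dtype=bool)
    over_trust = relied_on_ai & ~ai_correct                     # trusted a wrong prediction
    under_trust = ~relied_on_ai & ai_correct & ~human_correct   # ignored a right one
    return over_trust | under_trust

def kmeans(points, k, iters=50, seed=0):
    """Very small k-means (stand-in for the paper's region-discovery step)."""
    rng = np.random.default_rng(seed)
    centers = points[rng.choice(len(points), size=k, replace=False)]
    labels = np.zeros(len(points), dtype=int)
    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = points[labels == j].mean(axis=0)
    return labels

def rank_regions(embeddings, errors, k=2):
    """Cluster the embeddings and sort clusters by collaboration-error rate."""
    labels = kmeans(np.asarray(embeddings, dtype=float), k)
    rates = {j: float(errors[labels == j].mean())
             for j in range(k) if np.any(labels == j)}
    ranking = sorted(rates.items(), key=lambda kv: kv[1], reverse=True)
    return labels, ranking

# Hypothetical toy data: the first four points stand for "daytime" images,
# the last four for "highway at night" images.
embeddings = [[0, 0], [0, 1], [1, 0], [1, 1],
              [10, 10], [10, 11], [11, 10], [11, 11]]
relied_on_ai = [True, True, True, True, True, True, True, False]
ai_correct = [True, True, True, True, False, False, False, False]
human_correct = [True] * 8

errors = collaboration_errors(relied_on_ai, ai_correct, human_correct)
labels, ranking = rank_regions(embeddings, errors, k=2)
worst_region, worst_rate = ranking[0]
print(f"region {worst_region} has collaboration-error rate {worst_rate:.2f}")
```

In this toy setup the cluster of “night” points surfaces first, because that is where the simulated user relied on wrong AI predictions.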

After discovering the regions, a second algorithm uses a large language model to describe each region as a rule, using natural language. The algorithm iteratively fine-tunes that rule by finding contrasting examples. It might describe this region as “ignore AI when it is a highway during the night.”

These rules are used to build training exercises. The onboarding system shows an example to the human, in this case a blurry highway scene at night, as well as the AI’s prediction, and asks the user if the image shows traffic lights. The user can answer yes, no, or use the AI’s prediction.

If the human is wrong, they are shown the correct answer and performance statistics for the human and AI on these instances of the task. The system does this for each region, and at the end of the training process, repeats the exercises the human got wrong.
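The exercise loop described above can be sketched in a few lines. This is an illustrative assumption about how such a loop might be structured, not the researchers’ implementation: the `Exercise` fields, the `answer_fn` callback, the answer strings, and the accuracy figures in the example are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Exercise:
    rule: str            # natural-language rule describing the region
    ai_prediction: bool  # the AI's answer to "does the image show traffic lights?"
    truth: bool          # the correct answer
    human_acc: float     # human accuracy on this region (made-up numbers below)
    ai_acc: float        # AI accuracy on this region (made-up numbers below)

def resolve(answer, ai_prediction):
    """Turn the user's choice ('yes', 'no', or 'ai') into a final prediction."""
    if answer == "ai":
        return ai_prediction
    return answer == "yes"

def run_onboarding(exercises, answer_fn, show=print):
    """One pass over all regions with feedback, then a repeat of the missed ones."""
    missed = []
    for ex in exercises:
        if resolve(answer_fn(ex), ex.ai_prediction) != ex.truth:
            show(f"Incorrect. Correct answer: {'yes' if ex.truth else 'no'}. "
                 f"Rule: {ex.rule}. Human accuracy here: {ex.human_acc:.0%}, "
                 f"AI accuracy here: {ex.ai_acc:.0%}.")
            missed.append(ex)
    # Repeat only the exercises the user got wrong the first time.
    still_missed = [ex for ex in missed
                    if resolve(answer_fn(ex), ex.ai_prediction) != ex.truth]
    return missed, still_missed

# Hypothetical exercises; the statistics are invented for illustration.
exercises = [
    Exercise("ignore AI when it is a highway during the night", True, False, 0.80, 0.40),
    Exercise("rely on AI for daytime city streets", True, True, 0.70, 0.95),
]

def always_trust_ai(ex):
    """Simulated user who defers to the AI on every exercise."""
    return "ai"

missed, still_missed = run_onboarding(exercises, always_trust_ai)
```

Passing the user’s choice in as a callback keeps the loop independent of any particular interface, so the same function could drive a console prompt, a web form, or a simulated user as shown here.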

“After that, the human has learned something about these regions that we hope they will take away in the future to make more accurate predictions,” Mozannar says.

Onboarding boosts accuracy

The researchers tested this system with users on two tasks: detecting traffic lights in blurry images and answering multiple-choice questions from many domains (such as biology, philosophy, and computer science).

They first showed users a card with information about the AI model, how it was trained, and a breakdown of its performance on broad categories. Users were split into five groups: Some were only shown the card, some went through the researchers’ onboarding procedure, some went through a baseline onboarding procedure, some went through the researchers’ onboarding procedure and were given recommendations of when they should or should not trust the AI, and others were only given the recommendations.

Only the researchers’ onboarding procedure without recommendations improved users’ accuracy significantly, boosting their performance on the traffic light prediction task by about 5 percent without slowing them down. However, onboarding was not as effective for the question-answering task. The researchers believe this is because the AI model, ChatGPT, provided explanations with each answer that convey whether it should be trusted.

But providing recommendations without onboarding had the opposite effect: users not only performed worse, they also took more time to make predictions.

“When you only give someone recommendations, it seems like they get confused and don’t know what to do. It derails their process. People also don’t like being told what to do, so that is a factor as well,” Mozannar says.

Providing recommendations alone could harm the user if those recommendations are wrong, he adds. With onboarding, on the other hand, the biggest limitation is the amount of available data. If there aren’t enough data, the onboarding stage won’t be as effective, he says.

In the future, he and his collaborators want to conduct larger studies to evaluate the short- and long-term effects of onboarding. They also want to leverage unlabeled data for the onboarding process, and find methods to effectively reduce the number of regions without omitting important examples.

“People are adopting AI systems willy-nilly, and indeed AI offers great potential, but these AI agents still sometimes make mistakes. Thus, it’s crucial for AI developers to devise methods that help humans know when it’s safe to rely on the AI’s suggestions,” says Dan Weld, professor emeritus at the Paul G. Allen School of Computer Science and Engineering at the University of Washington, who was not involved with this research. “Mozannar et al. have created an innovative method for identifying situations where the AI is trustworthy, and (importantly) to describe them to people in a way that leads to better human-AI team interactions.”

This work is funded, in part, by the MIT-IBM Watson AI Lab.
