
Humans have the remarkable ability to take in a tremendous amount of information (estimated to be ~10^10 bits/s entering the retina) and selectively attend to a few task-relevant and interesting regions for further processing (e.g., memory, comprehension, action). Modeling human attention (the result of which is often called a saliency model) has therefore been of interest across the fields of neuroscience, psychology, human-computer interaction (HCI) and computer vision. The ability to predict which regions are likely to attract attention has numerous important applications in areas like graphics, photography, image compression and processing, and the measurement of visual quality.

We’ve previously discussed the possibility of accelerating eye movement research using machine learning and smartphone-based gaze estimation, which earlier required specialized hardware costing up to $30,000 per unit. Related research includes “Look to Speak”, which helps users with accessibility needs (e.g., people with ALS) to communicate with their eyes, and the recently published “Differentially private heatmaps” technique to compute heatmaps, like those for attention, while protecting users’ privacy.

In this blog post, we present two papers (one from CVPR 2022, and one just accepted to CVPR 2023) that highlight our recent research in the area of human attention modeling: “Deep Saliency Prior for Reducing Visual Distraction” and “Learning from Unique Perspectives: User-aware Saliency Modeling”, together with recent research on saliency-driven progressive loading for image compression (1, 2). We showcase how predictive models of human attention can enable delightful user experiences such as image editing to minimize visual clutter, distraction or artifacts, image compression for faster loading of webpages or apps, and guiding ML models towards more intuitive, human-like interpretation and better model performance. We focus on image editing and image compression, and discuss recent advances in modeling in the context of these applications.

Attention-guided image editing

Human attention models usually take an image as input (e.g., a natural image or a screenshot of a webpage) and predict a heatmap as output. The predicted heatmap is evaluated against ground-truth attention data, which are typically collected with an eye tracker or approximated via mouse hovering/clicking. Earlier models relied on handcrafted features for visual cues, like color/brightness contrast, edges, and shape, while more recent approaches automatically learn discriminative features with deep neural networks, from convolutional and recurrent neural networks to more recent vision transformer networks.
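
To make “evaluated against ground-truth attention data” concrete, the sketch below implements two metrics that are widely used in saliency benchmarks: the Pearson correlation coefficient (CC) between a predicted heatmap and a ground-truth fixation density map, and Normalized Scanpath Saliency (NSS), the mean z-scored prediction at fixated pixels. This post does not say which metrics the papers report, so the choice of metrics, the function names, and the list-of-lists heatmap format are assumptions for illustration only.

```python
import math
from typing import List, Sequence, Tuple

Heatmap = List[List[float]]


def _standardize(values: Sequence[float]) -> List[float]:
    """Shift values to zero mean and scale to unit (population) standard deviation."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0:
        return [0.0] * n  # a constant map carries no ranking information
    return [(v - mean) / std for v in values]


def cc(pred: Heatmap, gt: Heatmap) -> float:
    """Pearson correlation between a predicted heatmap and a ground-truth density map."""
    p = _standardize([v for row in pred for v in row])
    g = _standardize([v for row in gt for v in row])
    return sum(a * b for a, b in zip(p, g)) / len(p)


def nss(pred: Heatmap, fixations: Sequence[Tuple[int, int]]) -> float:
    """Mean z-scored prediction value at fixated (row, col) locations."""
    cols = len(pred[0])
    z = _standardize([v for row in pred for v in row])
    return sum(z[r * cols + c] for r, c in fixations) / len(fixations)


# Usage: a prediction peaked at the center, scored against a fixation at the center.
pred = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
score_cc = cc(pred, pred)
score_nss = nss(pred, [(1, 1)])
```

For this toy map, `cc(pred, pred)` is 1 up to rounding and the NSS at the center is 2√2 ≈ 2.83; higher values of both metrics mean the prediction agrees better with where people actually looked.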

In “Deep Saliency Prior for Reducing Visual Distraction” (more information on this project site), we leverage deep saliency models for dramatic yet visually realistic edits, which can significantly change an observer’s attention to different image regions. For example, removing distracting objects in the background can reduce clutter in photos, leading to increased user satisfaction. Similarly, in video conferencing, reducing clutter in the background may increase focus on the main speaker (example demo here).

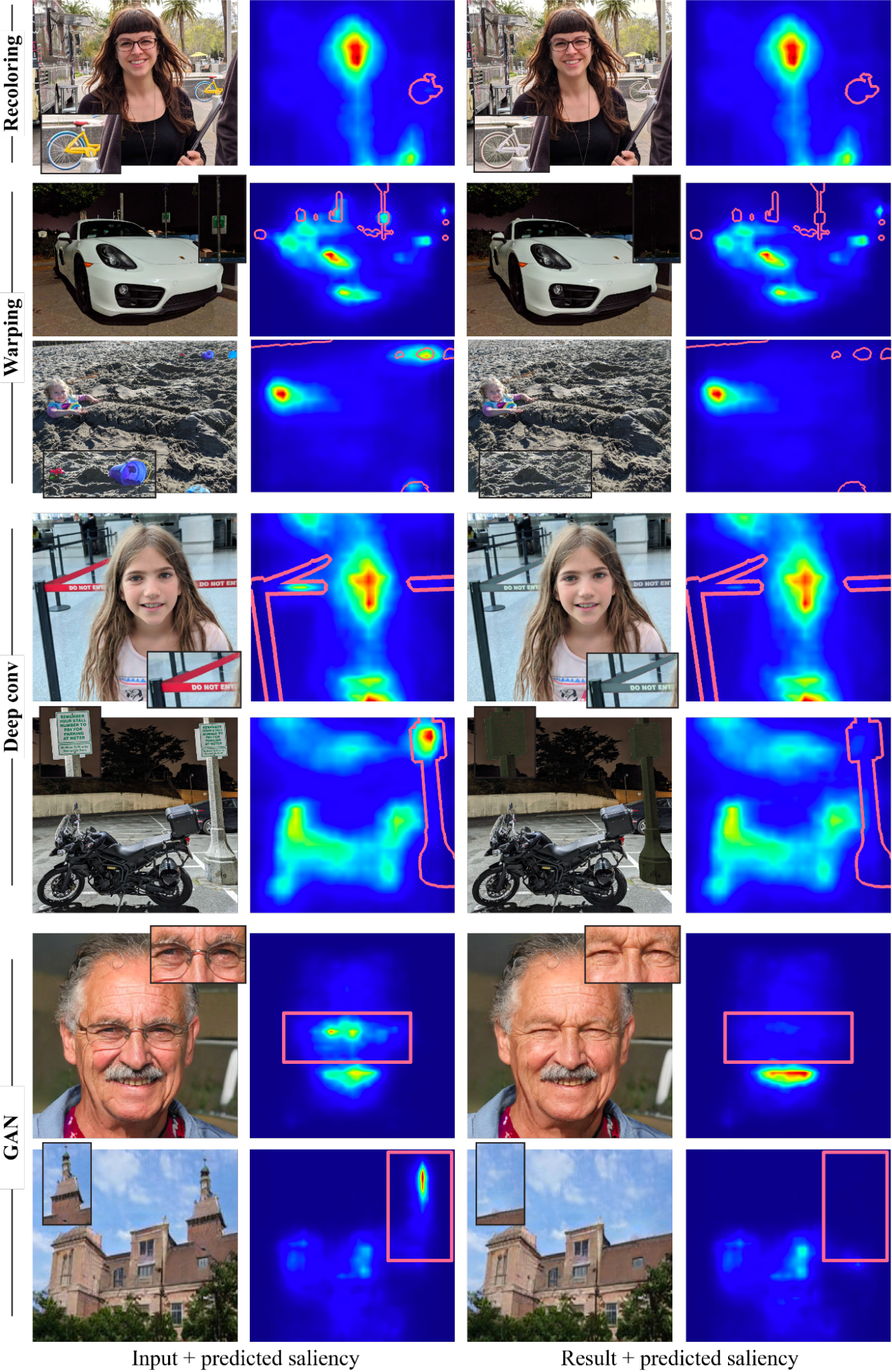

To explore what types of editing effects can be achieved and how these affect viewers’ attention, we developed an optimization framework for guiding visual attention in images using a differentiable, predictive saliency model. Our method employs a state-of-the-art deep saliency model. Given an input image and a binary mask representing the distractor regions, pixels within the mask are edited under the guidance of the predictive saliency model such that the saliency within the masked region is reduced. To make sure the edited image is natural and realistic, we carefully choose four image editing operators: two standard image editing operations, namely recolorization and image warping (shift); and two learned operators (we do not define the editing operation explicitly), namely a multi-layer convolution filter and a generative model (GAN).
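
The structure of this optimization (edit only masked pixels, score them with a differentiable saliency model, descend on that score) can be illustrated with a deliberately tiny stand-in. This is a minimal sketch under strong assumptions, not the paper’s method: `toy_saliency` is a hypothetical, hand-written substitute for the deep saliency model (a pixel’s saliency is its squared deviation from the mean intensity), the image is a flat list of grayscale values, and the only operator is a single brightness offset applied inside the mask, a crude analogue of recolorization. The gradient is derived by hand here, whereas a real implementation would backpropagate through the network.

```python
from typing import List


def toy_saliency(img: List[float]) -> List[float]:
    """HYPOTHETICAL stand-in for a deep saliency model: each pixel's saliency is
    its squared deviation from the image's mean intensity (a crude contrast cue)."""
    m = sum(img) / len(img)
    return [(x - m) ** 2 for x in img]


def reduce_distractor(img: List[float], mask: List[bool],
                      steps: int = 200, lr: float = 0.1) -> List[float]:
    """Learn one brightness offset `b` for the masked pixels (a toy 'recolor'
    operator) by gradient descent on the total toy saliency inside the mask."""
    n, k = len(img), sum(mask)
    b = 0.0
    for _ in range(steps):
        edited = [x + b if on else x for x, on in zip(img, mask)]
        m = sum(edited) / n
        # Loss L(b) = sum over masked i of (edited_i - m)^2.
        # d(edited_i)/db = 1 and dm/db = k/n, so dL/db = sum 2*(edited_i - m)*(1 - k/n).
        grad = sum(2 * (e - m) * (1 - k / n) for e, on in zip(edited, mask) if on)
        b -= lr * grad  # pixels outside the mask are never modified
    return [x + b if on else x for x, on in zip(img, mask)]


# Usage: two bright "distractor" pixels on a dark background.
img = [0.2] * 8 + [1.0, 1.0]
mask = [False] * 8 + [True, True]
edited = reduce_distractor(img, mask)
```

Here the optimizer darkens the two masked pixels until they blend into the background, driving their toy saliency toward zero. The fixed learning rate suits this example only; for this quadratic loss, plain gradient descent is stable when `lr` is below 1 / (k·(1 − k/n)²).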

With these operators, our framework can produce a variety of powerful effects, with examples in the figure below, including recoloring, inpainting, camouflage, object editing or insertion, and facial attribute editing. Importantly, all these effects are driven solely by the single, pre-trained saliency model, without any additional supervision or training. Note that our goal is not to compete with dedicated methods for producing each effect, but rather to demonstrate how multiple editing operations can be guided by the knowledge embedded within deep saliency models.

Examples of reducing visual distractions, guided by the saliency model with several operators. The distractor region is marked on top of the saliency map (red border) in each example.

Enriching experiences with user-aware saliency modeling

Prior research assumes a single saliency model for the whole population. However, human attention varies between individuals: while the detection of salient cues is fairly consistent, their order, interpretation, and gaze distributions can differ substantially. This offers opportunities to create personalized user experiences for individuals or groups. In “Learning from Unique Perspectives: User-aware Saliency Modeling”, we introduce a user-aware saliency model, the first that can predict attention for one user, a group of users, and the general population, with a single model.

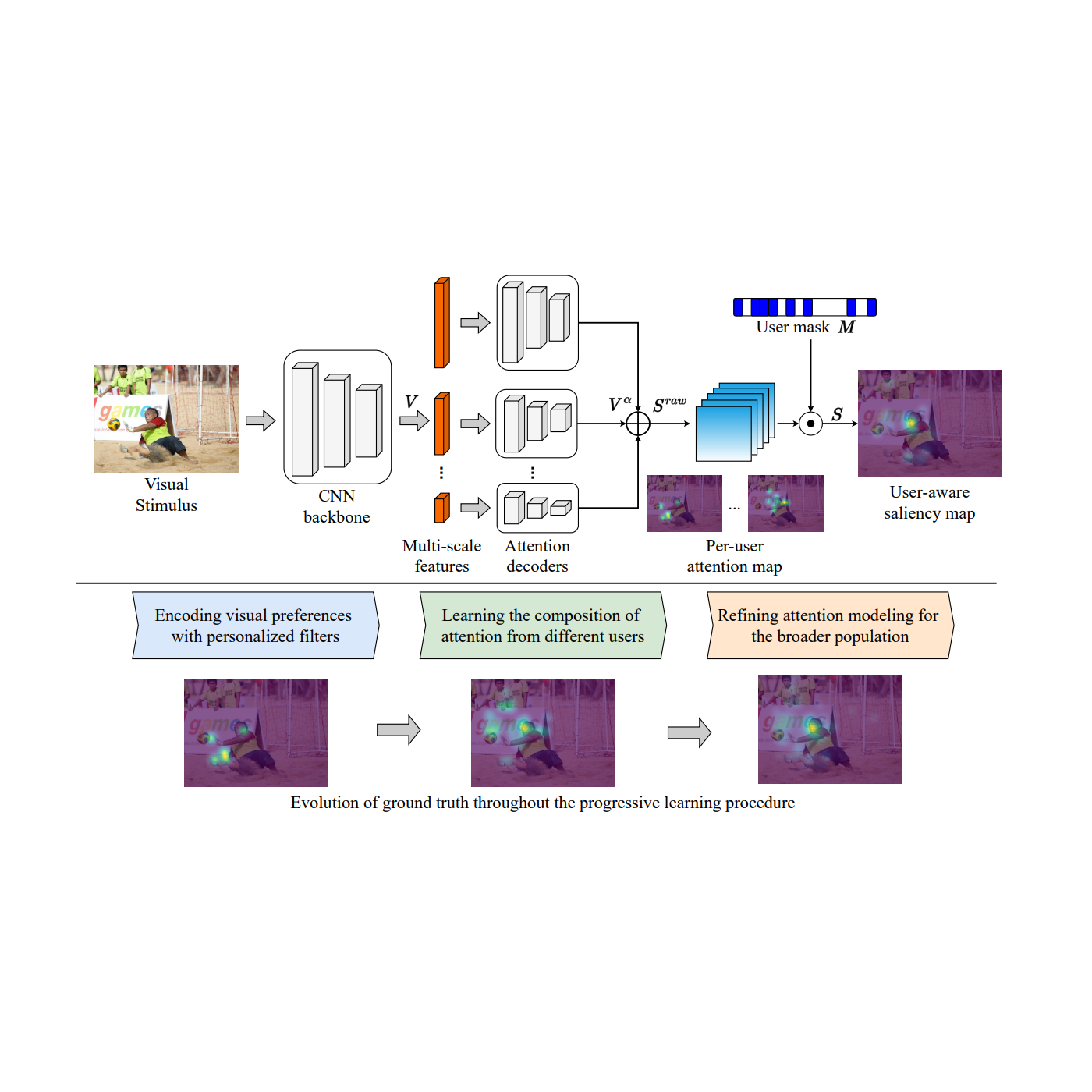

As shown in the figure below, core to the model is the combination of each participant’s visual preferences with a per-user attention map and adaptive user masks. This requires per-user attention annotations to be available in the training data, e.g., the OSIE mobile gaze dataset for natural images, and the FiWI and WebSaliency datasets for web pages. Instead of predicting a single saliency map representing the attention of all users, this model predicts per-user attention maps to encode individuals’ attention patterns. Further, the model adopts a user mask (a binary vector whose size equals the number of participants) to indicate which participants are present in the current sample, which makes it possible to select a group of participants and combine their preferences into a single heatmap.

An overview of the user-aware saliency model framework. The example image is from the OSIE image set.
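
The role of the user mask can be sketched outside the network. Assuming the model has already produced one attention map per participant, a binary mask selects a subset of participants and their maps are merged into a single heatmap. The averaging-and-normalizing rule, the function name `combine_user_maps`, and the list-of-lists map format are assumptions for illustration; the paper may combine per-user predictions differently inside the model.

```python
from typing import List, Sequence

Heatmap = List[List[float]]


def combine_user_maps(per_user: Sequence[Heatmap], user_mask: Sequence[int]) -> Heatmap:
    """Merge the per-user attention maps selected by a binary user mask into a
    single heatmap: average the selected maps, then normalize to sum to 1.
    The averaging rule is an assumption made for this sketch."""
    if len(per_user) != len(user_mask):
        raise ValueError("user_mask needs exactly one entry per participant")
    selected = [m for m, on in zip(per_user, user_mask) if on]
    if not selected:
        raise ValueError("user_mask selects no participants")
    rows, cols = len(selected[0]), len(selected[0][0])
    avg = [[sum(m[r][c] for m in selected) / len(selected) for c in range(cols)]
           for r in range(rows)]
    total = sum(sum(row) for row in avg)
    return [[v / total for v in row] for row in avg] if total > 0 else avg


# Usage: three participants viewing a 1x2 image (left region, right region).
maps = [[[1.0, 0.0]],   # participant 0 attends to the left
        [[0.0, 1.0]],   # participant 1 attends to the right
        [[0.5, 0.5]]]   # participant 2 splits attention
just_p0 = combine_user_maps(maps, [1, 0, 0])
group = combine_user_maps(maps, [1, 1, 0])
everyone = combine_user_maps(maps, [1, 1, 1])
```

In this sketch a one-hot mask yields a single participant’s prediction, a partial mask yields a group prediction, and an all-ones mask approximates a population-level heatmap, so different groups viewing the same image can receive different predictions.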

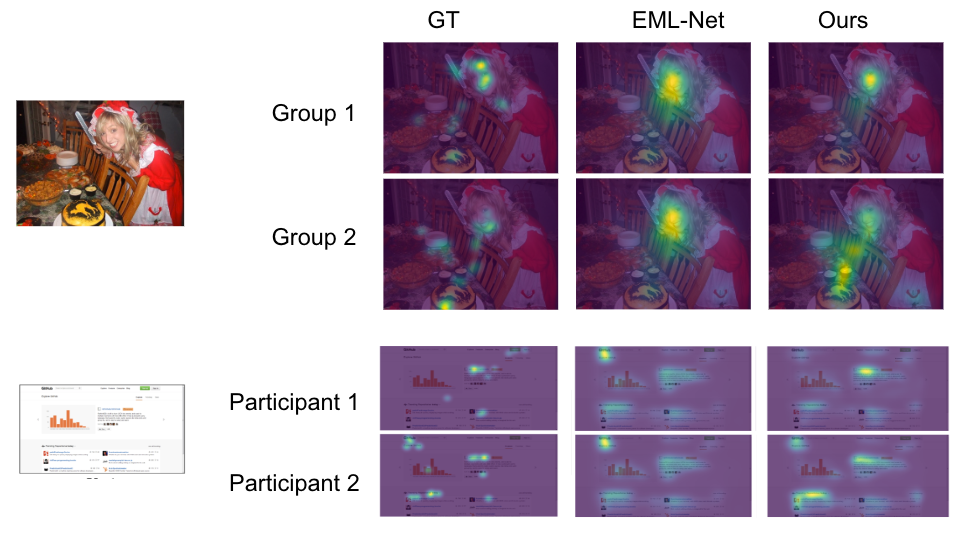

During inference, the user mask allows making predictions for any combination of participants. In the following figure, the first two rows are attention predictions for two different groups of participants (with three people in each group) on an image. A conventional attention prediction model will predict identical attention heatmaps for both groups. Our model can distinguish the two groups (e.g., the second group pays less attention to the face and more attention to the food than the first). Similarly, the last two rows are predictions on a webpage for two distinct participants, with our model showing different preferences (e.g., the second participant pays more attention to the left region than the first).

Predicted attention vs. ground truth (GT). EML-Net: predictions from a state-of-the-art model, which produces the same predictions for the two participants/groups. Ours: predictions from our proposed user-aware saliency model, which correctly predicts the unique preference of each participant/group. The first image is from the OSIE image set, and the second is from FiWI.

Progressive image decoding centered on salient features

Besides image editing, human attention models can also improve users’ browsing experience. One of the most frustrating user experiences while browsing is waiting for web pages with images to load, especially under low network connectivity. One way to improve the user experience in such cases is progressive decoding of images, which decodes and displays increasingly higher-resolution image sections as data are downloaded, until the full-resolution image is ready. Progressive decoding usually proceeds in a sequential order (e.g., left to right, top to bottom). With a predictive attention model (1, 2), we can instead decode images based on saliency, making it possible to send the data needed to display details of the most salient regions first. For example, in a portrait, bytes for the face can be prioritized over those for the out-of-focus background. Consequently, users perceive better image quality earlier and experience significantly reduced wait times. More details can be found in our open source blog posts (post 1, post 2). Thus, predictive attention models can help with image compression and faster loading of web pages with images, and can improve rendering for large images and streaming/VR applications.
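
The contrast between sequential and saliency-driven ordering can be sketched at the level of image tiles. Assuming the image is split into a grid of equally sized tiles and a saliency model has assigned each tile a score, the code below counts how many tiles must arrive before a chosen salient tile (say, a face) can be displayed under raster order versus saliency order. The tile abstraction and all function names are hypothetical; real progressive codecs order and refine data in more elaborate ways than whole-tile transmission.

```python
from typing import List, Sequence, Tuple

Tile = Tuple[int, int]


def raster_order(rows: int, cols: int) -> List[Tile]:
    """Conventional sequential order: left to right, top to bottom."""
    return [(r, c) for r in range(rows) for c in range(cols)]


def saliency_order(saliency: List[List[float]]) -> List[Tile]:
    """Most salient tiles first; sorted() is stable, so ties keep raster order."""
    rows, cols = len(saliency), len(saliency[0])
    return sorted(raster_order(rows, cols), key=lambda rc: -saliency[rc[0]][rc[1]])


def tiles_until_shown(order: Sequence[Tile], targets: Sequence[Tile]) -> int:
    """How many tiles must be transmitted before every target tile has arrived."""
    remaining = set(targets)
    for sent, tile in enumerate(order, start=1):
        remaining.discard(tile)
        if not remaining:
            return sent
    raise ValueError("some target tiles are never transmitted")


# Usage: a 3x3 tile grid whose most salient tile (e.g., a face) is bottom-center.
sal = [[0.1, 0.1, 0.1],
       [0.1, 0.2, 0.1],
       [0.1, 0.9, 0.1]]
face = [(2, 1)]
```

With raster order the face tile is the eighth of nine tiles to arrive, while saliency order sends it first; the total amount of data is unchanged, only its order differs.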

Conclusion

We’ve shown how predictive models of human attention can enable delightful user experiences via applications such as image editing that can reduce clutter, distractions or artifacts in images or photos, and progressive image decoding that can greatly reduce the perceived waiting time while images are fully rendered. Our user-aware saliency model can further personalize these applications for individual users or groups, enabling richer and more unique experiences.

Another interesting direction for predictive attention models is whether they can help improve the robustness of computer vision models in tasks such as object classification or detection. For example, in “Teacher-generated spatial-attention labels boost robustness and accuracy of contrastive models”, we show that a predictive human attention model can guide contrastive learning models to achieve better representations and improve the accuracy/robustness of classification tasks (on the ImageNet and ImageNet-C datasets). Further research in this direction could enable applications such as using radiologists’ attention on medical images to improve health screening or diagnosis, or using human attention in complex driving scenarios to guide autonomous driving systems.

Acknowledgements

This work involved collaborative efforts from a multidisciplinary team of software engineers, researchers, and cross-functional contributors. We’d like to thank all the co-authors of the papers/research, including Kfir Aberman, Gamaleldin F. Elsayed, Moritz Firsching, Shi Chen, Nachiappan Valliappan, Yushi Yao, Chang Ye, Yossi Gandelsman, Inbar Mosseri, David E. Jacobes, Yael Pritch, Shaolei Shen, and Xinyu Ye. We also want to thank team members Oscar Ramirez, Venky Ramachandran and Tim Fujita for their help. Finally, we thank Vidhya Navalpakkam for her technical leadership in initiating and overseeing this body of work.
