[ad_1]

Extracting time collection on given geographical coordinates from satellite tv for pc or Numerical Climate Prediction knowledge could be difficult due to the amount of knowledge and of its multidimensional nature (time, latitude, longitude, peak, a number of parameters). This sort of processing could be present in climate and local weather analysis, but additionally in purposes like photovoltaic and wind energy. As an illustration, time collection describing the amount of photo voltaic power reaching particular geographical factors may also help in designing photovoltaic energy vegetation, monitoring their operation, and detecting yield loss.

A generalization of the issue might be said as follows: how can we extract knowledge alongside a dimension that’s not the partition key from a big quantity of multidimensional knowledge? For tabular knowledge, this drawback could be simply solved with AWS Glue, which you should use to create a job to filter and repartition the info, as proven on the finish of this submit. However what if the info is multidimensional and supplied in a domain-specific format, like within the use case that we need to deal with?

AWS Lambda is a serverless compute service that permits you to run code with out provisioning or managing servers. With AWS Step Features, you may launch parallel runs of Lambda capabilities. This submit exhibits how you should use these companies to run parallel duties, with the instance of time collection extraction from a big quantity of satellite tv for pc climate knowledge saved on Amazon Easy Storage Service (Amazon S3). You additionally use AWS Glue to consolidate the information produced by the parallel duties.

Be aware that Lambda is a normal function serverless engine. It has not been particularly designed for heavy knowledge transformation duties. We’re utilizing it right here after having confirmed the next:

- Job period is predictable and is lower than quarter-hour, which is the utmost timeout for Lambda capabilities

- The use case is straightforward, with low compute necessities and no exterior dependencies that would decelerate the method

We work on a dataset supplied by EUMESAT: the MSG Whole and Diffuse Downward Floor Shortwave Flux (MDSSFTD). This dataset accommodates satellite tv for pc knowledge at 15-minute intervals, in netcdf format, which represents roughly 100 GB for 1 12 months.

We course of the 12 months 2018 to extract time collection on 100 geographical factors.

Resolution overview

To realize our aim, we use parallel Lambda capabilities. Every Lambda operate processes 1 day of knowledge: 96 information representing a quantity of roughly 240 MB. We then have 365 information containing the extracted knowledge for every day, and we use AWS Glue to concatenate them for the complete 12 months and cut up them throughout the 100 geographical factors. This workflow is proven within the following structure diagram.

Deployment of this resolution: On this submit, we offer step-by-step directions to deploy every a part of the structure manually. If you happen to choose an computerized deployment, now we have ready for you a Github repository containing the required infrastructure as code template.

The dataset is partitioned by day, with YYYY/MM/DD/ prefixes. Every partition accommodates 96 information that shall be processed by one Lambda operate.

We use Step Features to launch the parallel processing of the three hundred and sixty five days of the 12 months 2018. Step Features helps builders use AWS companies to construct distributed purposes, automate processes, orchestrate microservices, and create knowledge and machine studying (ML) pipelines.

However earlier than beginning, we have to obtain the dataset and add it to an S3 bucket.

Stipulations

Create an S3 bucket to retailer the enter dataset, the intermediate outputs, and the ultimate outputs of the info extraction.

Obtain the dataset and add it to Amazon S3

A free registration on the info supplier web site is required to obtain the dataset. To obtain the dataset, you should use the next command from a Linux terminal. Present the credentials that you just obtained at registration. Your Linux terminal might be in your native machine, however you can even use an AWS Cloud9 occasion. Just be sure you have at the very least 100 GB of free storage to deal with the whole dataset.

As a result of the dataset is sort of giant, this obtain might take a very long time. Within the meantime, you may put together the following steps.

When the obtain is full, you may add the dataset to an S3 bucket with the next command:

If you happen to use short-term credentials, they may expire earlier than the copy is full. On this case, you may resume by utilizing the aws s3 sync command.

Now that the info is on Amazon S3, you may delete the listing that has been downloaded out of your Linux machine.

Create the Lambda capabilities

For step-by-step directions on how you can create a Lambda operate, discuss with Getting began with Lambda.

The primary Lambda operate within the workflow generates the listing of days that we need to course of:

We then use the Map state of Step Features to course of every day. The Map state will launch one Lambda operate for every ingredient returned by the earlier operate, and can move this ingredient as an enter. These Lambda capabilities shall be launched concurrently for all the weather within the listing. The processing time for the complete 12 months will subsequently be equivalent to the time wanted to course of 1 single day, permitting scalability for very long time collection and enormous volumes of enter knowledge.

The next is an instance of code for the Lambda operate that processes every day:

You should affiliate a job to the Lambda operate to authorize it to entry the S3 buckets. As a result of the runtime is a couple of minute, you additionally need to configure the timeout of the Lambda operate accordingly. Let’s set it to five minutes. We additionally enhance the reminiscence allotted to the Lambda operate to 2048 MB, which is required by the netcdf4 library for extracting a number of factors at a time from satellite tv for pc knowledge.

This Lambda operate relies on the pandas and netcdf4 libraries. They are often put in as Lambda layers. The pandas library is supplied as an AWS managed layer. The netcdf4 library should be packaged in a customized layer.

Configure the Step Features workflow

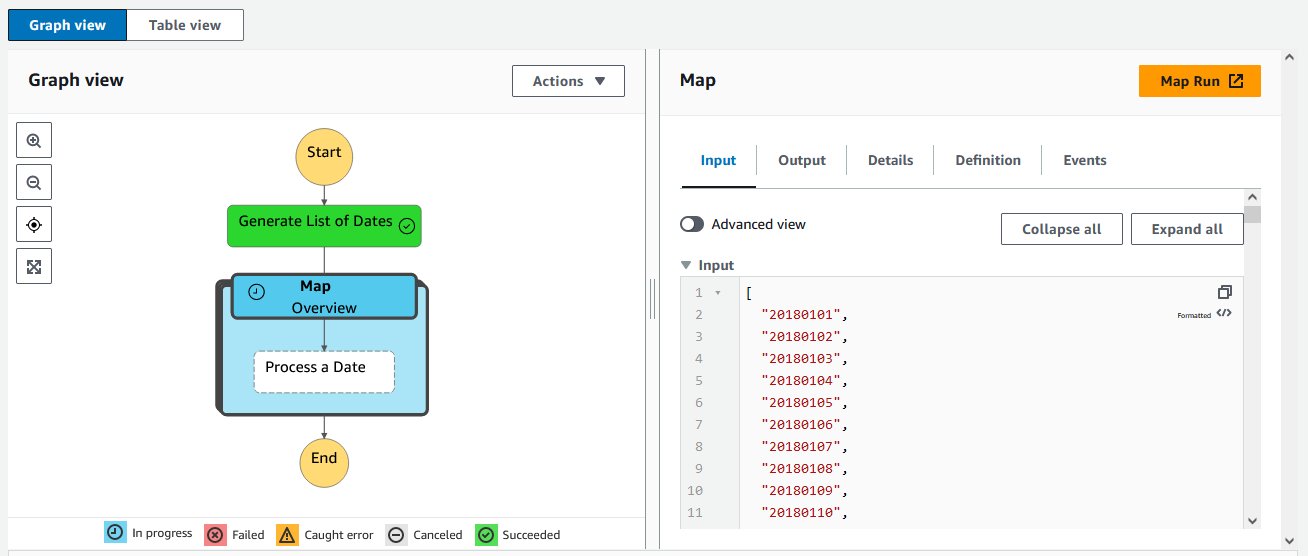

After you create the 2 Lambda capabilities, you may design the Step Features workflow within the visible editor by utilizing the Lambda Invoke and Map blocks, as proven within the following diagram.

Within the Map state block, select Distributed processing mode and enhance concurrency restrict to 365 in Runtime settings. This may allow parallel processing of all the times.

The variety of Lambda capabilities that may run concurrently is restricted for every account. Your account could have inadequate quota. You’ll be able to request a quota enhance.

Launch the state machine

Now you can launch the state machine. On the Step Features console, navigate to your state machine and select Begin execution to run your workflow.

This may set off a popup in which you’ll enter non-obligatory enter to your state machine. For this submit, you may depart the defaults and select Begin execution.

The state machine ought to take 1–2 minutes to run, throughout which era it is possible for you to to observe the progress of your workflow. You’ll be able to choose one of many blocks within the diagram and examine its enter, output, and different info in actual time, as proven within the following screenshot. This may be very helpful for debugging functions.

When all of the blocks flip inexperienced, the state machine is full. At this step, now we have extracted the info for 100 geographical factors for a complete 12 months of satellite tv for pc knowledge.

Within the S3 bucket configured as output for the processing Lambda operate, we are able to test that now we have one file per day, containing the info for all of the 100 factors.

Rework knowledge per day to knowledge per geographical level with AWS Glue

For now, now we have one file per day. Nevertheless, our aim is to get time collection for each geographical level. This transformation includes altering the way in which the info is partitioned. From a day partition, now we have to go to a geographical level partition.

Fortuitously, this operation could be completed very merely with AWS Glue.

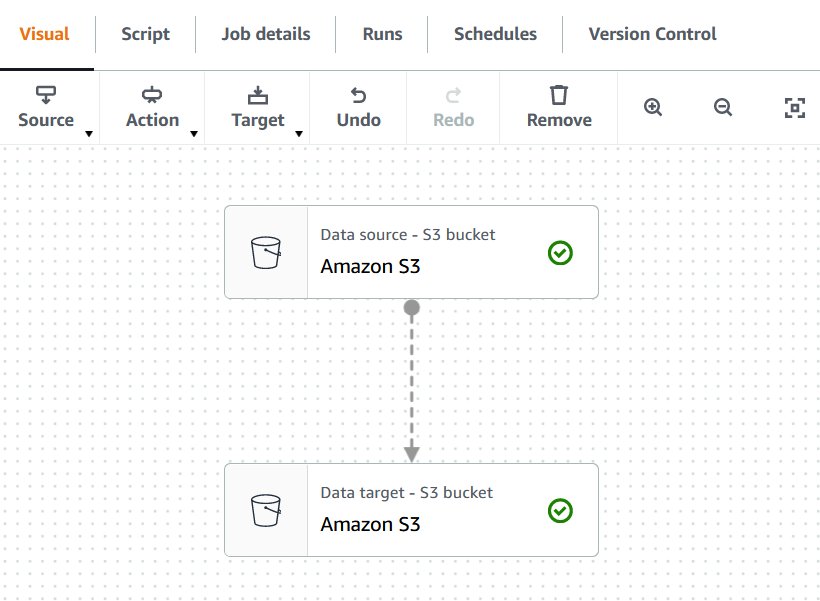

- On the AWS Glue Studio console, create a brand new job and select Visible with a clean canvas.

For this instance, we create a easy job with a supply and goal block.

- Add a knowledge supply block.

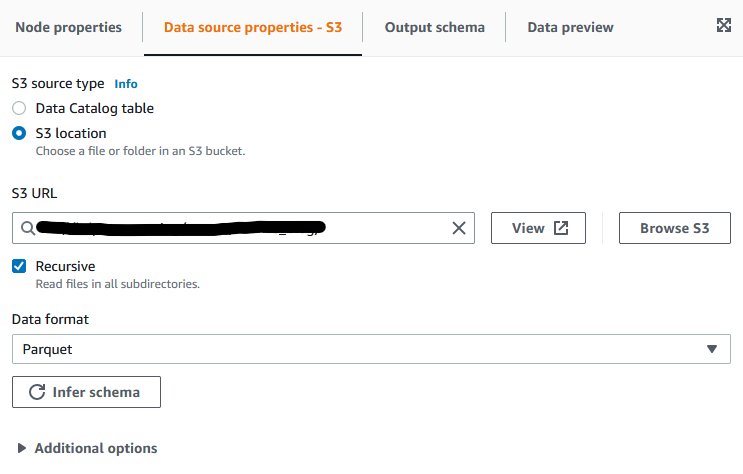

- On the Information supply properties tab, choose S3 location for S3 supply sort.

- For S3 URL, enter the situation the place you created your information within the earlier step.

- For Information format, maintain the default as Parquet.

- Select Infer schema and consider the Output schema tab to substantiate the schema has been accurately detected.

- Add a knowledge goal block.

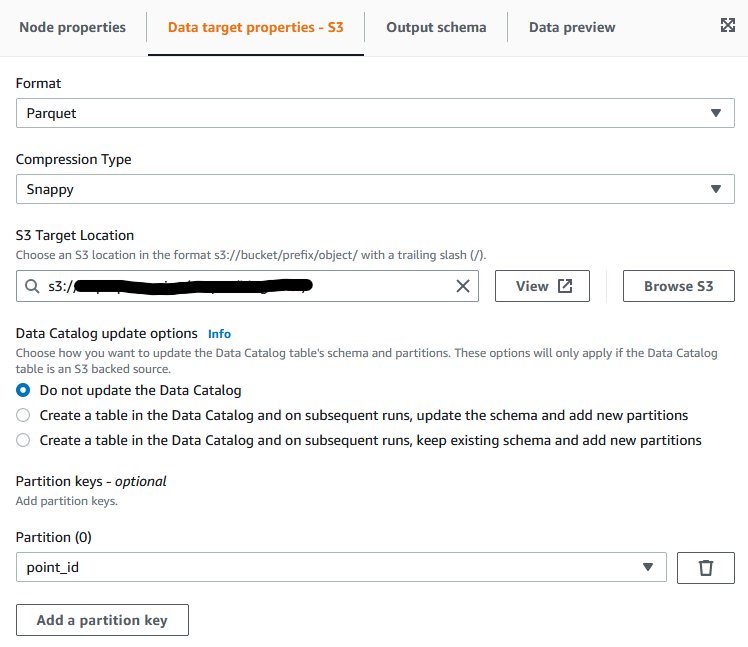

- On the Information goal properties tab, for Format, select Parquet.

- For Compression sort, select Snappy.

- For S3 Goal Location, enter the S3 goal location to your output information.

We now need to configure the magic!

- Add a partition key, and select

point_id.

This tells AWS Glue the way you need your output knowledge to be partitioned. AWS Glue will mechanically partition the output knowledge in line with the point_id column, and subsequently we’ll get one folder for every geographical level, containing the entire time collection for this level as requested.

To complete the configuration, we have to assign an AWS Identification and Entry Administration (IAM) position to the AWS Glue job.

- Select Job particulars, and for IAM position¸ select a job that has permissions to learn from the enter S3 bucket and to put in writing to the output S3 bucket.

You might have to create the position on the IAM console in case you don’t have already got an applicable one.

- Enter a reputation for our AWS Glue job, reserve it, and run it.

We are able to monitor the run by selecting Run particulars. It ought to take 1–2 minutes to finish.

Ultimate outcomes

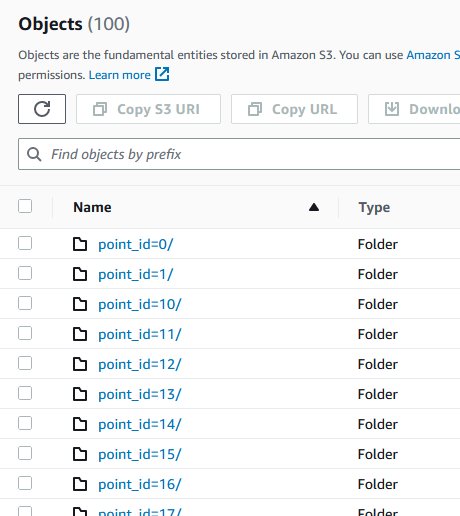

After the AWS Glue job succeeds, we are able to test within the output S3 bucket that now we have one folder for every geographical level, containing some Parquet information with the entire 12 months of knowledge, as anticipated.

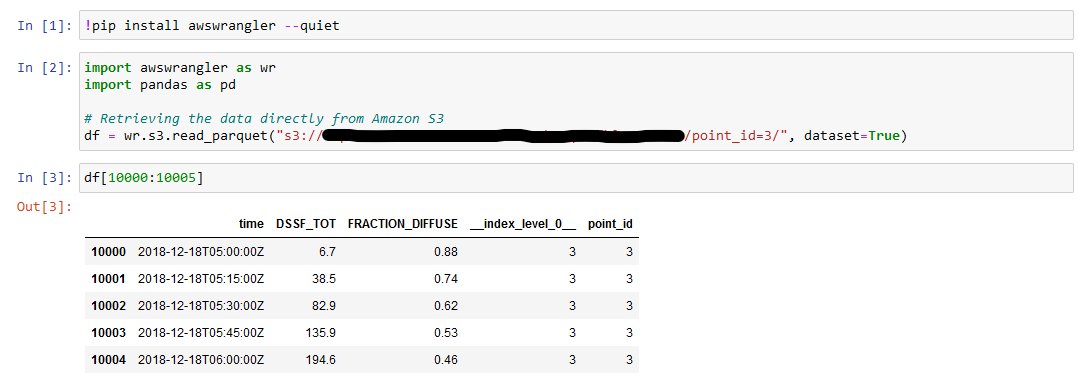

To load the time collection for a selected level right into a pandas knowledge body, you should use the awswrangler library out of your Python code:

If you wish to check this code now, you may create a pocket book occasion in Amazon SageMaker, after which open a Jupyter pocket book. The next screenshot illustrates operating the previous code in a Jupyter pocket book.

As we are able to see, now we have efficiently extracted the time collection for particular geographical factors!

Clear up

To keep away from incurring future prices, delete the sources that you’ve created:

- The S3 bucket

- The AWS Glue job

- The Step Features state machine

- The 2 Lambda capabilities

- The SageMaker pocket book occasion

Conclusion

On this submit, we confirmed how you can use Lambda, Step Features, and AWS Glue for serverless ETL (extract, remodel, and cargo) on a big quantity of climate knowledge. The proposed structure permits extraction and repartitioning of the info in only a few minutes. It’s scalable and cost-effective, and could be tailored to different ETL and knowledge processing use instances.

Fascinated with studying extra concerning the companies introduced on this submit? You will discover hands-on labs to enhance your information with AWS Workshops. Moreover, take a look at the official documentation of AWS Glue, Lambda, and Step Features. It’s also possible to uncover extra architectural patterns and finest practices at AWS Whitepapers & Guides.

In regards to the Creator

Lior Perez is a Principal Options Architect on the Enterprise staff based mostly in Toulouse, France. He enjoys supporting clients of their digital transformation journey, utilizing huge knowledge and machine studying to assist clear up their enterprise challenges. He’s additionally personally captivated with robotics and IoT, and continuously appears to be like for brand new methods to leverage applied sciences for innovation.

[ad_2]