
Software isn’t created in one dramatic step. It improves bit by bit, one little step at a time (editing, running unit tests, fixing build errors, addressing code reviews, editing some more, appeasing linters, and fixing more errors) until finally it becomes good enough to merge into a code repository. Software engineering isn’t an isolated process, but a dialogue among human developers, code reviewers, bug reporters, software architects and tools, such as compilers, unit tests, linters and static analyzers.

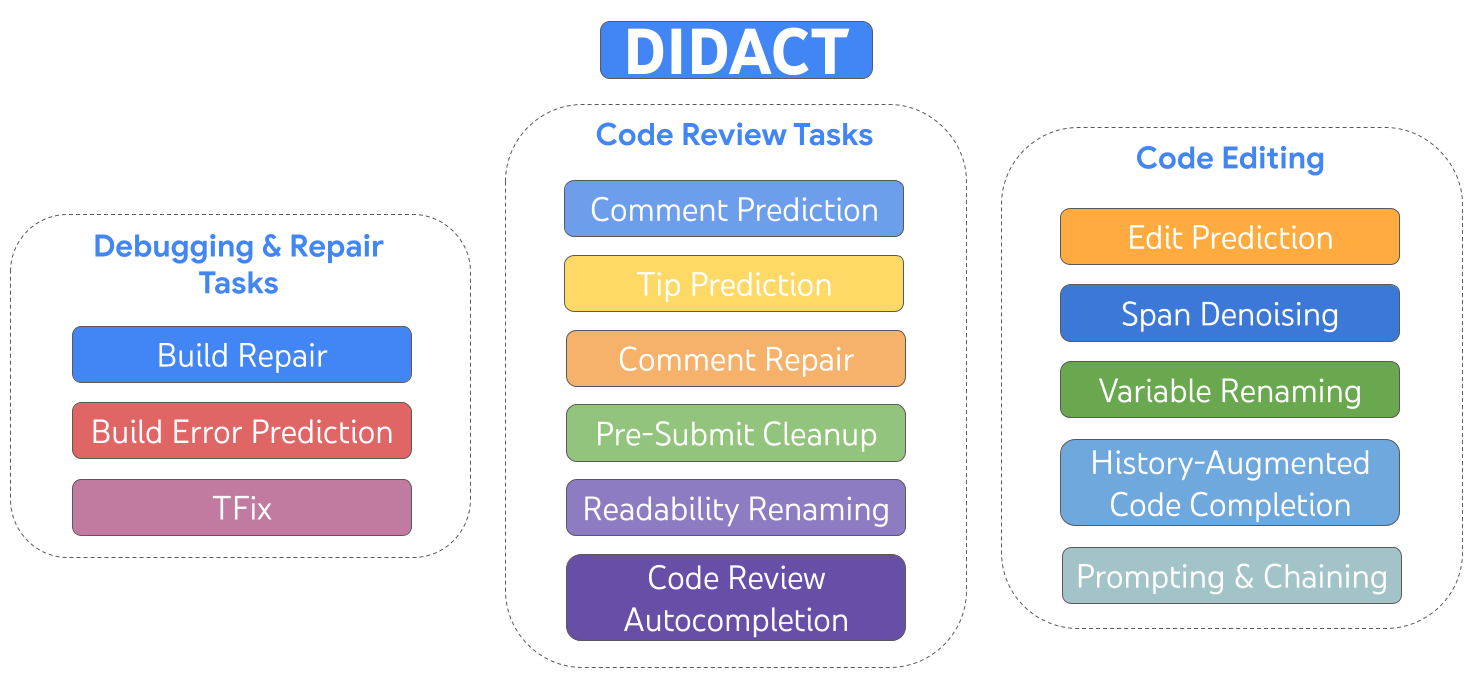

Today we describe DIDACT (Dynamic Integrated Developer ACTivity), which is a methodology for training large machine learning (ML) models for software development. The novelty of DIDACT is that it uses the process of software development as the source of training data for the model, rather than just the polished end state of that process, the finished code. By exposing the model to the contexts that developers see as they work, paired with the actions they take in response, the model learns about the dynamics of software development and is more aligned with how developers spend their time. We leverage instrumentation of Google’s software development to scale up the quantity and diversity of developer-activity data beyond previous works. Results are extremely promising along two dimensions: usefulness to professional software developers, and as a potential basis for imbuing ML models with general software development skills.

Figure: DIDACT is a multi-task model trained on development activities that include editing, debugging, repair, and code review.

We built and deployed internally three DIDACT tools, Comment Resolution (which we recently announced), Build Repair, and Tip Prediction, each integrated at different stages of the development workflow. All three of these tools received enthusiastic feedback from thousands of internal developers. We see this as the ultimate test of usefulness: do professional developers, who are often experts on the code base and who have carefully honed workflows, leverage the tools to improve their productivity?

Perhaps most excitingly, we demonstrate how DIDACT is a first step towards a general-purpose developer-assistance agent. We show that the trained model can be used in a variety of surprising ways, via prompting with prefixes of developer activities, and by chaining together multiple predictions to roll out longer activity trajectories. We believe DIDACT paves a promising path towards developing agents that can generally assist across the software development process.

A treasure trove of data about the software engineering process

Google’s software engineering toolchains store every operation related to code as a log of interactions among tools and developers, and have done so for decades. In principle, one could use this record to replay in detail the key episodes in the “software engineering video” of how Google’s codebase came to be, step by step: one code edit, compilation, comment, variable rename, etc., at a time.

Google code lives in a monorepo, a single repository of code for all tools and systems. A software developer typically experiments with code changes in a local copy-on-write workspace managed by a system called Clients in the Cloud (CitC). When the developer is ready to package a set of code changes together for a specific purpose (e.g., fixing a bug), they create a changelist (CL) in Critique, Google’s code-review system. As with other types of code-review systems, the developer engages in a dialog with a peer reviewer about functionality and style. The developer edits their CL to address reviewer comments as the dialog progresses. Eventually, the reviewer declares “LGTM!” (“looks good to me”), and the CL is merged into the code repository.

Of course, in addition to a dialog with the code reviewer, the developer also maintains a “dialog” of sorts with a plethora of other software engineering tools, such as the compiler, the testing framework, linters, static analyzers, fuzzers, etc.

A multi-task model for software engineering

DIDACT utilizes interactions among engineers and tools to power ML models that assist Google developers, by suggesting or enhancing actions developers take, in context, while pursuing their software-engineering tasks. To do that, we have defined a number of tasks about individual developer activities: repairing a broken build, predicting a code-review comment, addressing a code-review comment, renaming a variable, editing a file, etc. We use a common formalism for each activity: it takes some State (a code file), some Intent (annotations specific to the activity, such as code-review comments or compiler errors), and produces an Action (the operation taken to address the task). This Action is like a mini programming language, and can be extended for newly added activities. It covers things like editing, adding comments, renaming variables, marking up code with errors, etc. We call this language DevScript.
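As a rough illustration of the state-intent-action formalism, the sketch below models one task instance as plain Python data and serializes it into a text prompt. The class names, field names, task label, and prompt layout are all assumptions made for illustration; the post does not specify DevScript’s actual syntax or how DIDACT encodes its inputs.

```python
from dataclasses import dataclass, field


@dataclass
class State:
    """The code the developer is looking at (hypothetical shape)."""
    path: str
    content: str


@dataclass
class Intent:
    """Activity-specific annotation, e.g. a review comment or compiler error."""
    kind: str
    line: int
    message: str


@dataclass
class Action:
    """The operation taken to address the task, as a small structured command."""
    op: str
    args: dict = field(default_factory=dict)


def to_prompt(task: str, state: State, intent: Intent) -> str:
    """Serialize a task instance as text. This layout is invented for the sketch."""
    return (
        f"TASK: {task}\n"
        f"FILE: {state.path}\n"
        f"{state.content}\n"
        f"{intent.kind.upper()} @ line {intent.line}: {intent.message}\n"
        "ACTION:"
    )


example_state = State("util.py", "def area(w, h):\n    return w * h\n")
example_intent = Intent("review_comment", 1, "Please use descriptive names.")
# The action a developer might have taken in response (the training target).
example_target = Action("rename", {"old": "w", "new": "width"})

prompt = to_prompt("address_review_comment", example_state, example_intent)
```

In this framing, the prompt is the model input and `example_target` stands in for the action the model would learn to produce.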

Figure: The DIDACT model is prompted with a task, code snippets, and annotations related to that task, and produces development actions, e.g., edits or comments.

This state-intent-action formalism enables us to capture many different tasks in a general way. What’s more, DevScript is a concise way to express complex actions, without the need to output the whole state (the original code) as it would be after the action takes place; this makes the model more efficient and more interpretable. For example, a rename might touch a file in dozens of places, but a model can predict a single rename action.
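To make the conciseness argument concrete, the sketch below applies one hypothetical rename action to a small file and counts how many lines it changes. The `apply_rename` helper and the action dictionary are illustrative assumptions, not DevScript. The whole-word regular expression is also a simplification: a real rename would respect scoping, strings, and comments.

```python
import re


def apply_rename(source: str, old: str, new: str) -> str:
    """Replace every whole-word occurrence of `old` with `new`."""
    return re.sub(rf"\b{re.escape(old)}\b", new, source)


source = (
    "def total(xs, n):\n"
    "    acc = 0\n"
    "    for i in range(n):\n"
    "        acc += xs[i]\n"
    "    return acc / n\n"
)

# One compact action (hypothetical format) instead of a full rewritten file.
action = {"op": "rename", "old": "n", "new": "count"}
updated = apply_rename(source, action["old"], action["new"])

# Count the lines that differ between the original and the updated file.
changed_lines = sum(
    before != after
    for before, after in zip(source.splitlines(), updated.splitlines())
)
```

Here a single short action rewrites three of the five lines, which is the kind of saving the paragraph above describes at a larger scale.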

An ML peer programmer

DIDACT does a good job on individual assistive tasks. For example, below we show DIDACT doing code clean-up after functionality is mostly done. It looks at the code along with some final comments by the code reviewer (marked with “human” in the animation), and predicts edits to address those comments (rendered as a diff).

The multimodal nature of DIDACT also gives rise to some surprising capabilities, reminiscent of behaviors emerging with scale. One such capability is history augmentation, which can be enabled via prompting. Knowing what the developer did recently enables the model to make a better guess about what the developer should do next.

Figure: An illustration of history-augmented code completion in action.

A powerful task exemplifying this capability is history-augmented code completion. In the accompanying figure, the developer adds a new function parameter (1), and moves the cursor into the documentation (2). Conditioned on the history of developer edits and the cursor position, the model completes the line (3) by correctly predicting the docstring entry for the new parameter.
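One way to picture history augmentation is as extra context placed in front of the completion request. The sketch below renders recent file snapshots as unified diffs with Python’s standard `difflib` and prepends them to the current file and cursor position. The prompt layout, the function names, and the `scale` example are assumptions for illustration; the post does not describe how DIDACT actually encodes history.

```python
import difflib


def render_history(snapshots, path):
    """Render consecutive file snapshots as a sequence of unified diffs."""
    chunks = []
    for before, after in zip(snapshots, snapshots[1:]):
        diff = difflib.unified_diff(
            before.splitlines(),
            after.splitlines(),
            fromfile=path,
            tofile=path,
            lineterm="",
            n=0,  # no context lines: keep only what changed
        )
        chunks.append("\n".join(diff))
    return "\n".join(chunks)


def build_prompt(snapshots, path, cursor_line):
    """Combine edit history, current file, and cursor into one prompt (made-up layout)."""
    return (
        "HISTORY:\n" + render_history(snapshots, path) + "\n"
        "FILE:\n" + snapshots[-1] + "\n"
        f"CURSOR: line {cursor_line}\n"
        "COMPLETE:"
    )


before = "\n".join([
    "def scale(x):",
    '    """Scales a value.',
    "",
    "    Args:",
    "      x: The value to scale.",
    '    """',
    "    return x * 2",
])
# The developer adds a parameter, then moves the cursor into the docstring.
after = before.replace("def scale(x):", "def scale(x, factor):")

prompt = build_prompt([before, after], "util.py", cursor_line=6)
```

With the diff in the prompt, a model can see that `factor` was just added and is therefore a likely candidate for the next docstring entry; without it, the prompt would contain only the current file.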

Figure: An illustration of edit prediction, over multiple chained iterations.

In an even more powerful history-augmented task, edit prediction, the model can choose where to edit next in a fashion that is historically consistent. If the developer deletes a function parameter (1), the model can use history to correctly predict an update to the docstring (2) that removes the deleted parameter (without the human developer manually placing the cursor there) and to update a statement in the function (3) in a syntactically (and, arguably, semantically) correct way. With history, the model can unambiguously decide how to continue the “editing video” correctly. Without history, the model wouldn’t know whether the missing function parameter is intentional (because the developer is in the process of a longer edit to remove it) or accidental (in which case the model should re-add it to fix the problem).

The model can go even further. For example, we started with a blank file and asked the model to successively predict what edits would come next until it had written a full code file. The astonishing part is that the model developed code in a step-by-step way that would seem natural to a developer: it started by first creating a fully working skeleton with imports, flags, and a basic main function. It then incrementally added new functionality, like reading from a file and writing results, and added functionality to filter out some lines based on a user-provided regular expression, which required changes across the file, like adding new flags.
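The chaining described above can be sketched as a simple loop: ask for the next edit, apply it, record it in the history, and repeat. In the sketch below the model is replaced by a scripted stand-in, and an edit is reduced to a hypothetical `(line index, text)` insertion; both are assumptions for illustration and say nothing about how DIDACT represents or predicts edits.

```python
def rollout(predict_next, lines, max_steps=10):
    """Repeatedly request the next edit and apply it until the predictor stops."""
    history = []
    for _ in range(max_steps):
        edit = predict_next(lines, history)
        if edit is None:  # the predictor signals that the file is done
            break
        index, text = edit
        lines = lines[:index] + [text] + lines[index:]
        history.append(edit)
    return lines, history


def scripted_predictor(lines, history):
    """Stand-in for a model: replays a fixed script of line insertions."""
    script = [
        (0, "import sys"),                # start with a skeleton
        (1, "def main():"),
        (2, "    print(sys.argv[1:])"),
        (1, "import re"),                 # later, go back and add an import
    ]
    return script[len(history)] if len(history) < len(script) else None


final_lines, trajectory = rollout(scripted_predictor, [])
```

The last scripted step returns to the top of the file to add an import, loosely mirroring the observation that new functionality required changes across the file.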

Conclusion

DIDACT turns Google’s software development process into training demonstrations for ML developer assistants, and uses those demonstrations to train models that construct code in a step-by-step fashion, interactively with tools and code reviewers. These innovations are already powering tools enjoyed by Google developers every day. The DIDACT approach complements the great strides taken by large language models at Google and elsewhere, towards technologies that ease toil, improve productivity, and enhance the quality of work of software engineers.

Acknowledgements

This work is the result of a multi-year collaboration among Google Research, Google Core Systems and Experiences, and DeepMind. We would like to acknowledge our colleagues Jacob Austin, Pascal Lamblin, Pierre-Antoine Manzagol, and Daniel Zheng, who join us as the key drivers of this project. This work could not have happened without the significant and sustained contributions of our partners at Alphabet (Peter Choy, Henryk Michalewski, Subhodeep Moitra, Malgorzata Salawa, Vaibhav Tulsyan, and Manushree Vijayvergiya), as well as the many people who collected data, identified tasks, built products, strategized, evangelized, and helped us execute on the many facets of this agenda (Ankur Agarwal, Paige Bailey, Marc Brockschmidt, Rodrigo Damazio Bovendorp, Satish Chandra, Savinee Dancs, Denis Davydenko, Matt Frazier, Alexander Frömmgen, Nimesh Ghelani, Chris Gorgolewski, Chenjie Gu, Vincent Hellendoorn, Franjo Ivančić, Marko Ivanković, Emily Johnston, Luka Kalinovcic, Lera Kharatyan, Jessica Ko, Markus Kusano, Kathy Nix, Christian Perez, Sara Qu, Marc Rasi, Marcus Revaj, Ballie Sandhu, Michael Sloan, Tom Small, Gabriela Surita, Maxim Tabachnyk, David Tattersall, Sara Toth, Kevin Villela, Sara Wiltberger, and Donald Duo Zhao) and our extremely supportive leadership (Martín Abadi, Joelle Barral, Jeff Dean, Madhura Dudhgaonkar, Douglas Eck, Zoubin Ghahramani, Hugo Larochelle, Chandu Thekkath, and Niranjan Tulpule). Thank you!
