
Modern neural networks have achieved impressive performance across a variety of applications, such as language, mathematical reasoning, and vision. However, these networks often use large architectures that require lots of computational resources. This can make it impractical to serve such models to users, especially in resource-constrained environments like wearables and smartphones. A widely used approach to mitigate the inference costs of pre-trained networks is to prune them by removing some of their weights, in a way that doesn’t significantly affect utility. In standard neural networks, each weight defines a connection between two neurons. So after weights are pruned, the input propagates through a smaller set of connections and thus requires fewer computational resources.

Figure: Original network vs. a pruned network.

Pruning methods can be applied at different stages of the network’s training process: post, during, or before training (i.e., immediately after weight initialization). In this post, we focus on the post-training setting: given a pre-trained network, how can we determine which weights should be pruned? One popular method is magnitude pruning, which removes weights with the smallest magnitude. While efficient, this method doesn’t directly consider the effect of removing weights on the network’s performance. Another popular paradigm is optimization-based pruning, which removes weights based on how much their removal impacts the loss function. Although conceptually appealing, most existing optimization-based approaches seem to face a serious tradeoff between performance and computational requirements. Methods that make crude approximations (e.g., assuming a diagonal Hessian matrix) can scale well, but have relatively low performance. On the other hand, while methods that make fewer approximations tend to perform better, they appear to be much less scalable.
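To make magnitude pruning concrete, here is a minimal NumPy sketch. The function name `magnitude_prune` and the parameter `k` (the number of weights to keep) are illustrative assumptions, not part of any particular library.

```python
import numpy as np

def magnitude_prune(weights: np.ndarray, k: int) -> np.ndarray:
    """Keep the k largest-magnitude entries of `weights`; set the rest to zero."""
    flat = weights.ravel()
    if k <= 0:
        return np.zeros_like(weights)
    if k >= flat.size:
        return weights.copy()
    # Indices of the k entries with the largest absolute value.
    keep = np.argpartition(np.abs(flat), flat.size - k)[flat.size - k:]
    pruned = np.zeros_like(flat)
    pruned[keep] = flat[keep]
    return pruned.reshape(weights.shape)

# Toy weight matrix (hypothetical values).
w = np.array([[0.9, -0.05, 0.3],
              [-1.2, 0.01, 0.4]])
w_pruned = magnitude_prune(w, k=3)
```

Note that the rule looks only at the weights themselves and never evaluates the loss, which is exactly the limitation described above.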

In “Fast as CHITA: Neural Network Pruning with Combinatorial Optimization”, presented at ICML 2023, we describe how we developed an optimization-based approach for pruning pre-trained neural networks at scale. CHITA (which stands for “Combinatorial Hessian-free Iterative Thresholding Algorithm”) outperforms existing pruning methods in terms of scalability and performance tradeoffs, and it does so by leveraging advances from several fields, including high-dimensional statistics, combinatorial optimization, and neural network pruning. For example, CHITA can be 20x to 1000x faster than state-of-the-art methods for pruning ResNet and improves accuracy by over 10% in many settings.

Overview of contributions

CHITA has two notable technical improvements over popular methods:

- Efficient use of second-order information: Pruning methods that use second-order information (i.e., relating to second derivatives) achieve the state of the art in many settings. In the literature, this information is typically used by computing the Hessian matrix or its inverse, an operation that is very difficult to scale because the Hessian size is quadratic with respect to the number of weights. Through careful reformulation, CHITA uses second-order information without having to compute or store the Hessian matrix explicitly, thus allowing for more scalability.

- Combinatorial optimization: Popular optimization-based methods use a simple optimization technique that prunes weights in isolation, i.e., when deciding to prune a certain weight they don’t take into account whether other weights have been pruned. This can lead to pruning important weights, because weights deemed unimportant in isolation may become important when other weights are pruned. CHITA avoids this issue by using a more advanced, combinatorial optimization algorithm that takes into account how pruning one weight impacts others.

In the sections below, we discuss CHITA’s pruning formulation and algorithms.

A computation-friendly pruning formulation

There are many possible pruning candidates, which are obtained by retaining only a subset of the weights from the original network. Let k be a user-specified parameter that denotes the number of weights to retain. Pruning can be naturally formulated as a best-subset selection (BSS) problem: among all possible pruning candidates (i.e., subsets of weights) with only k weights retained, the candidate that has the smallest loss is selected.
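In symbols, the BSS problem can be sketched as follows. The notation is an assumption made for illustration: w is the vector of the network's p weights, L is the training loss, and the ℓ0 "norm" counts the nonzero entries of w, so the constraint allows at most k retained weights.

```latex
\min_{w \in \mathbb{R}^p} \; L(w)
\quad \text{subject to} \quad \|w\|_0 \le k
```

The number of candidate subsets grows combinatorially in p and k, which is why exhaustive search over subsets is out of reach for networks of practical size.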

Solving the pruning BSS problem on the original loss function is generally computationally intractable. Thus, similar to previous work, such as OBD and OBS, we approximate the loss with a quadratic function by using a second-order Taylor series, where the Hessian is estimated with the empirical Fisher information matrix. While gradients can typically be computed efficiently, computing and storing the Hessian matrix is prohibitively expensive due to its sheer size. In the literature, it is common to deal with this challenge by making restrictive assumptions on the Hessian (e.g., diagonal matrix) and also on the algorithm (e.g., pruning weights in isolation).
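Written out, the quadratic approximation takes the standard second-order Taylor form below. The symbols are assumptions for illustration: \bar{w} denotes the pre-trained weights, \ell_i is the loss on the i-th of n samples used for the estimate, and H is the empirical Fisher estimate of the Hessian.

```latex
L(w) \approx L(\bar{w}) + \nabla L(\bar{w})^\top (w - \bar{w})
      + \tfrac{1}{2} (w - \bar{w})^\top H \, (w - \bar{w}),
\qquad
H \approx \frac{1}{n} \sum_{i=1}^{n} \nabla \ell_i(\bar{w}) \, \nabla \ell_i(\bar{w})^\top
```

Because H is a sum of n rank-one terms, its rank is at most n, regardless of how many weights the network has.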

CHITA uses an efficient reformulation of the pruning problem (BSS using the quadratic loss) that avoids explicitly computing the Hessian matrix, while still using all the information from this matrix. This is made possible by exploiting the low-rank structure of the empirical Fisher information matrix. This reformulation can be viewed as a sparse linear regression problem, where each regression coefficient corresponds to a certain weight in the neural network. After obtaining a solution to this regression problem, coefficients set to zero correspond to weights that should be pruned. Our regression data matrix is (n x p), where n is the batch (sub-sample) size and p is the number of weights in the original network. Typically n << p, so storing and operating with this data matrix is much more scalable than common pruning approaches that operate with the (p x p) Hessian.
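The following sketch illustrates why the low-rank structure helps. It assumes the empirical Fisher has the form H = AᵀA / n, where each row of the (n x p) matrix A is a per-sample gradient; the random matrix and the helper name `fisher_matvec` are stand-ins for illustration, not CHITA's actual code.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 8, 200                      # n samples, p weights; typically n << p
A = rng.standard_normal((n, p))    # stand-in for per-sample gradients, one row per sample
v = rng.standard_normal(p)         # stand-in for a weight perturbation

def fisher_matvec(A: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Compute H @ v for H = A.T @ A / n without forming the (p x p) matrix H."""
    return A.T @ (A @ v) / A.shape[0]

# Reference computation with the explicit Hessian estimate (feasible only for tiny p).
H = A.T @ A / n
hv_explicit = H @ v
hv_free = fisher_matvec(A, v)

# The quadratic term equals a least-squares term in A, which is the regression view.
quad_explicit = 0.5 * v @ H @ v
quad_regression = 0.5 * np.sum((A @ v) ** 2) / n
```

The Hessian-free product needs O(np) memory and time, whereas forming H explicitly needs O(p²) memory.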

Scalable optimization algorithms

CHITA reduces pruning to a linear regression problem under the following sparsity constraint: at most k regression coefficients can be nonzero. To obtain a solution to this problem, we consider a modification of the well-known iterative hard thresholding (IHT) algorithm. IHT performs gradient descent where, after each update, the following post-processing step is performed: all regression coefficients outside the Top-k (i.e., the k coefficients with the largest magnitude) are set to zero. IHT typically delivers a good solution to the problem, and it does so by iteratively exploring different pruning candidates and jointly optimizing over the weights.
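For reference, here is a minimal sketch of plain IHT for sparse least squares. It is the textbook algorithm with a constant step size, not CHITA's modified version; the function names, the step-size choice (one over the squared spectral norm of A), and the synthetic data are all assumptions for illustration.

```python
import numpy as np

def top_k(b: np.ndarray, k: int) -> np.ndarray:
    """Keep the k largest-magnitude coefficients of b; zero out the rest."""
    out = np.zeros_like(b)
    k = min(k, b.size)
    if k > 0:
        idx = np.argpartition(np.abs(b), b.size - k)[b.size - k:]
        out[idx] = b[idx]
    return out

def iht(A: np.ndarray, y: np.ndarray, k: int, num_iters: int = 200) -> np.ndarray:
    """Approximately minimize 0.5 * ||A b - y||^2 subject to at most k nonzeros in b."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2   # constant step: 1 / (largest singular value)^2
    b = np.zeros(A.shape[1])
    for _ in range(num_iters):
        grad = A.T @ (A @ b - y)             # gradient of the least-squares loss
        b = top_k(b - step * grad, k)        # gradient step, then hard thresholding
    return b

# Synthetic problem with a 5-sparse ground truth (hypothetical data).
rng = np.random.default_rng(0)
A = rng.standard_normal((40, 100))
b_true = np.zeros(100)
b_true[:5] = 1.0
y = A @ b_true
b_hat = iht(A, y, k=5)
```

Each iteration may change which coefficients survive the thresholding, which is how the method explores different pruning candidates while also updating the retained values.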

Due to the scale of the problem, standard IHT with a constant learning rate can suffer from very slow convergence. For faster convergence, we developed a new line-search method that exploits the problem structure to find a suitable learning rate, i.e., one that leads to a sufficiently large decrease in the loss. We also employed several computational schemes to improve CHITA’s efficiency and the quality of the second-order approximation, leading to an improved version that we call CHITA++.
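To illustrate the idea of choosing the learning rate by line search, the sketch below uses generic backtracking: start from a large step and shrink it until the thresholded update decreases the loss sufficiently. This is a stand-in, not CHITA's specialized line search, and the function names, the sufficient-decrease constant `c`, and the synthetic data are assumptions.

```python
import numpy as np

def top_k(b: np.ndarray, k: int) -> np.ndarray:
    """Keep the k largest-magnitude coefficients of b; zero out the rest."""
    out = np.zeros_like(b)
    k = min(k, b.size)
    if k > 0:
        idx = np.argpartition(np.abs(b), b.size - k)[b.size - k:]
        out[idx] = b[idx]
    return out

def loss(A: np.ndarray, y: np.ndarray, b: np.ndarray) -> float:
    r = A @ b - y
    return 0.5 * float(r @ r)

def iht_step_with_backtracking(A, y, b, k, step=1.0, shrink=0.5, c=1e-4, max_tries=50):
    """One IHT update whose step size is found by backtracking.

    Accepts the first step satisfying
        loss(b_new) <= loss(b) - (c / step) * ||b_new - b||^2.
    Returns (b, step) unchanged in b if no tried step qualifies.
    """
    f_old = loss(A, y, b)
    grad = A.T @ (A @ b - y)
    for _ in range(max_tries):
        b_new = top_k(b - step * grad, k)
        d = b_new - b
        if loss(A, y, b_new) <= f_old - (c / step) * float(d @ d):
            return b_new, step
        step *= shrink                      # step too large: shrink and retry
    return b, step

# Synthetic problem (hypothetical data).
rng = np.random.default_rng(0)
A = rng.standard_normal((30, 60))
b_true = np.zeros(60)
b_true[:5] = 1.0
y = A @ b_true
b0 = np.zeros(60)
b1, used_step = iht_step_with_backtracking(A, y, b0, k=5)
```

Compared with a fixed conservative step, a search of this kind can take larger steps when the local geometry allows it, at the cost of a few extra loss evaluations per iteration.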

Experiments

We compare CHITA’s run time and accuracy with several state-of-the-art pruning methods using different architectures, including ResNet and MobileNet.

Run time: CHITA is much more scalable than comparable methods that perform joint optimization (as opposed to pruning weights in isolation). For example, CHITA’s speed-up can reach over 1000x when pruning ResNet.

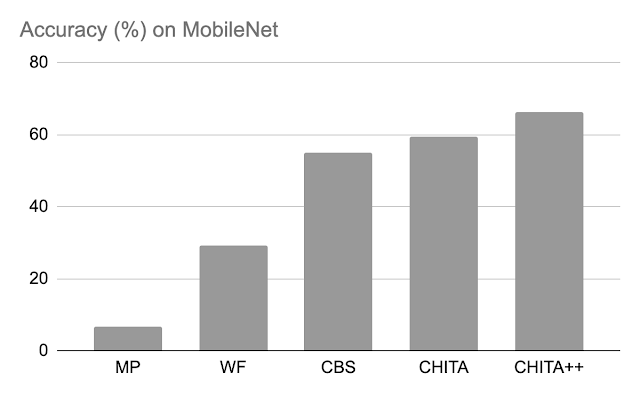

Post-pruning accuracy: Below, we compare the performance of CHITA and CHITA++ with magnitude pruning (MP), WoodFisher (WF), and Combinatorial Brain Surgeon (CBS), for pruning 70% of the model weights. Overall, we see good improvements from CHITA and CHITA++.

Figure: Post-pruning accuracy of various methods on ResNet20. Results are reported for pruning 70% of the model weights.

Figure: Post-pruning accuracy of various methods on MobileNet. Results are reported for pruning 70% of the model weights.

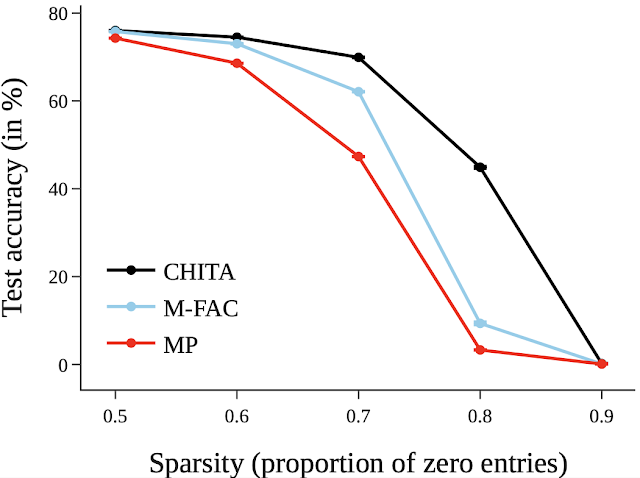

Next, we report results for pruning a larger network: ResNet50 (on this network, some of the methods listed in the ResNet20 figure couldn’t scale). Here we compare with magnitude pruning and M-FAC. The figure below shows that CHITA achieves better test accuracy for a wide range of sparsity levels.

Figure: Test accuracy of pruned networks, obtained using different methods.

Conclusion, limitations, and future work

We presented CHITA, an optimization-based approach for pruning pre-trained neural networks. CHITA offers scalability and competitive performance by efficiently using second-order information and drawing on ideas from combinatorial optimization and high-dimensional statistics.

CHITA is designed for unstructured pruning, in which any weight can be removed. In theory, unstructured pruning can significantly reduce computational requirements. However, realizing these reductions in practice requires special software (and possibly hardware) that supports sparse computations. In contrast, structured pruning, which removes whole structures like neurons, may offer improvements that are easier to attain on general-purpose software and hardware. It would be interesting to extend CHITA to structured pruning.

Acknowledgements

This work is part of a research collaboration between Google and MIT. Thanks to Rahul Mazumder, Natalia Ponomareva, Wenyu Chen, Xiang Meng, Zhe Zhao, and Sergei Vassilvitskii for their help in preparing this post and the paper. Also thanks to John Guilyard for creating the graphics in this post.
