[ad_1]

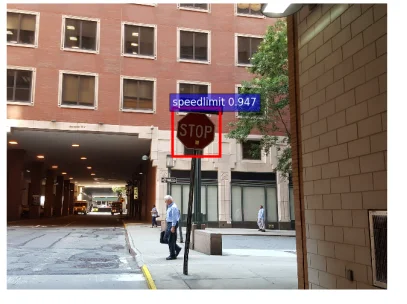

Think about driving to work in your self-driving automotive. As you method a cease signal, as an alternative of stopping, the automotive accelerates and goes by way of the cease signal as a result of it interprets the cease signal as a pace restrict signal. How did this occur? Though the automotive’s machine studying (ML) system was skilled to acknowledge cease indicators, somebody added stickers to the cease signal, which fooled the automotive into considering it was a 45-mph pace restrict signal. This straightforward act of placing stickers on a cease signal is one instance of an adversarial assault on ML programs.

On this SEI Weblog put up, I study how ML programs may be subverted and, on this context, clarify the idea of adversarial machine studying. I additionally study the motivations of adversaries and what researchers are doing to mitigate their assaults. Lastly, I introduce a fundamental taxonomy delineating the methods by which an ML mannequin may be influenced and present how this taxonomy can be utilized to tell fashions which can be sturdy towards adversarial actions.

What’s Adversarial Machine Studying?

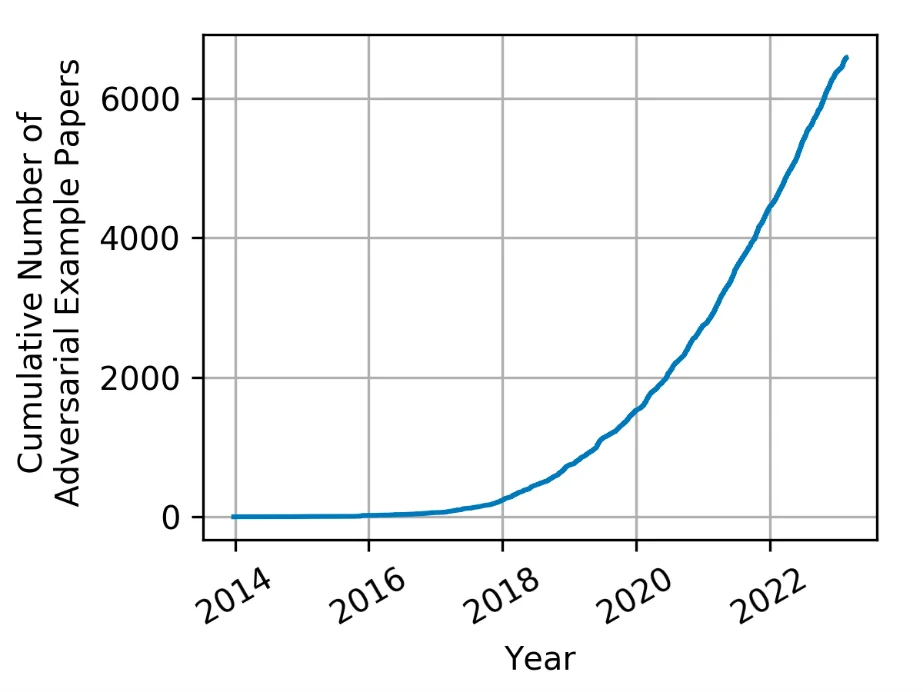

The idea of adversarial machine studying has been round for a very long time, however the time period has solely just lately come into use. With the explosive progress of ML and synthetic intelligence (AI), adversarial ways, methods, and procedures have generated loads of curiosity and have grown considerably.

When ML algorithms are used to construct a prediction mannequin after which built-in into AI programs, the main focus is usually on maximizing efficiency and making certain the mannequin’s potential to make correct predictions (that’s, inference). This deal with functionality typically makes safety a secondary concern to different priorities, equivalent to correctly curated datasets for coaching fashions, the usage of correct ML algorithms acceptable to the area, and tuning the parameters and configurations to get the most effective outcomes and chances. However analysis has proven that an adversary can exert an affect on an ML system by manipulating the mannequin, information, or each. By doing so, an adversary can then pressure an ML system to

- study the mistaken factor

- do the mistaken factor

- reveal the mistaken factor

To counter these actions, researchers categorize the spheres of affect an adversary can have on a mannequin right into a easy taxonomy of what an adversary can accomplish or what a defender must defend towards.

How Adversaries Search to Affect Fashions

To make an ML mannequin study the mistaken factor, adversaries take goal on the mannequin’s coaching information, any foundational fashions, or each. Adversaries exploit this class of vulnerabilities to affect fashions utilizing strategies, equivalent to information and parameter manipulation, which practitioners time period poisoning. Poisoning assaults trigger a mannequin to incorrectly study one thing that the adversary can exploit at a future time. For instance, an attacker may use information poisoning methods to deprave a provide chain for a mannequin designed to categorise site visitors indicators. The attacker may exploit threats to the info by inserting triggers into coaching information that may affect future mannequin habits in order that the mannequin misclassifies a cease signal as a pace restrict signal when the set off is current (Determine 2). A provide chain assault is efficient when a foundational mannequin is poisoned after which posted for others to obtain. Fashions which can be poisoned from provide chain kind of assaults can nonetheless be inclined to the embedded triggers ensuing from poisoning the info.

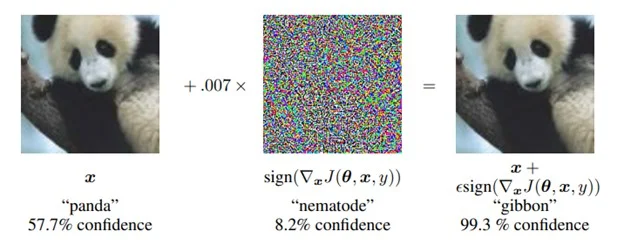

Attackers can even manipulate ML programs into doing the mistaken factor. This class of vulnerabilities causes a mannequin to carry out in an surprising method. As an illustration, assaults may be designed to trigger a classification mannequin to misclassify through the use of an adversarial sample that implements an evasion assault. Ian Goodfellow, Jonathon Shlens, and Christian Szegedy produced one of many seminal works of analysis on this space. They added an imperceptible-to-humans adversarial noise sample to a picture, which forces an ML mannequin to misclassify the picture. The researchers took a picture of a panda that the ML mannequin categorised correctly, then generated and utilized a particular noise sample to the picture. The ensuing picture gave the impression to be the identical Panda to a human observer (Determine 3). Nevertheless, when this picture was categorised by the ML mannequin, it produced a prediction results of gibbon, thus inflicting the mannequin to do the mistaken factor.

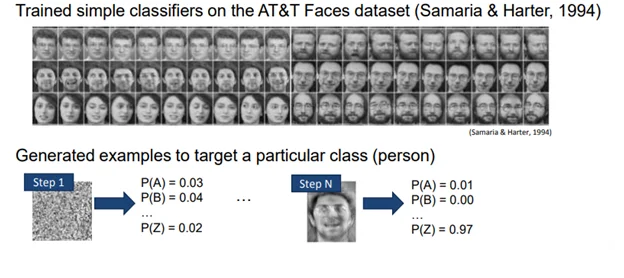

Lastly, adversaries may cause ML to reveal the mistaken factor. On this class of vulnerabilities, an adversary makes use of an ML mannequin to disclose some side of the mannequin, or the coaching dataset, that the mannequin’s creator didn’t intend to disclose. Adversaries can execute these assaults in a number of methods. In a mannequin extraction assault, an adversary can create a reproduction of a mannequin that the creator needs to maintain non-public. To execute this assault, the adversary solely wants to question a mannequin and observe the outputs. This class of assault is regarding to ML-enabled software programming interface (API) suppliers since it may possibly allow a buyer to steal the mannequin that allows the API.

Adversaries use mannequin inversion assaults to disclose details about the dataset that was used to coach a mannequin. If the adversaries can achieve a greater understanding of the lessons and the non-public dataset used, they will use this data to open a door for a follow-on assault or to compromise the privateness of coaching information. The idea of mannequin inversion was illustrated by Matt Fredrikson et al. of their paper Mannequin Inversion Assaults that Exploit Confidence Data and Primary Countermeasures, which examined a mannequin skilled with a dataset of faces.

On this paper the authors demonstrated how an adversary makes use of a mannequin inversion assault to show an preliminary random noise sample right into a face from the ML system. The adversary does so through the use of a generated noise sample as an enter to a skilled mannequin after which utilizing conventional ML mechanisms to repetitively information the refinement of the sample till the arrogance degree will increase. Utilizing the outcomes of the mannequin as a information, the noise sample finally begins trying like a face. When this face was offered to human observers, they have been capable of hyperlink it again to the unique individual with higher than 80 % accuracy (Determine 4).

Defending Towards Adversarial AI

Defending a machine studying system towards an adversary is a tough drawback and an space of energetic analysis with few confirmed generalizable options. Whereas generalized and confirmed defenses are uncommon, the adversarial ML analysis neighborhood is tough at work producing particular defenses that may be utilized to guard towards particular assaults. Growing check and analysis pointers will assist practitioners establish flaws in programs and consider potential defenses. This space of analysis has developed right into a race within the adversarial ML analysis neighborhood by which defenses are proposed by one group after which disproved by others utilizing current or newly developed strategies. Nevertheless, the plethora of things influencing the effectiveness of any defensive technique preclude articulating a easy menu of defensive methods geared to the assorted strategies of assault. Fairly, now we have centered on robustness testing.

ML fashions that efficiently defend towards assaults are sometimes assumed to be sturdy, however the robustness of ML fashions should be proved by way of check and analysis. The ML neighborhood has began to define the circumstances and strategies for performing robustness evaluations on ML fashions. The primary consideration is to outline the circumstances underneath which the protection or adversarial analysis will function. These circumstances ought to have a said aim, a practical set of capabilities your adversary has at its disposal, and a top level view of how a lot data the adversary has of the system.

Subsequent, it is best to guarantee your evaluations are adaptive. Particularly, each analysis ought to construct upon prior evaluations but in addition be impartial and signify a motivated adversary. This method permits a holistic analysis that takes all data under consideration and isn’t overly centered on one error occasion or set of analysis circumstances.

Lastly, scientific requirements of reproducibility ought to apply to your analysis. For instance, you need to be skeptical of any outcomes obtained and vigilant in proving the outcomes are right and true. The outcomes obtained must be repeatable, reproducible, and never depending on any particular circumstances or environmental variables that prohibit impartial copy.

The Adversarial Machine Studying Lab on the SEI’s AI Division is researching the event of defenses towards adversarial assaults. We leverage our experience with adversarial machine studying to enhance mannequin robustness and the testing, measurement, and robustness of ML fashions. We encourage anybody eager about studying extra about how we will assist your machine studying efforts to achieve out to us at information@sei.cmu.edu.

[ad_2]