[ad_1]

Nvidia is using excessive for the time being. The corporate has managed to extend the efficiency of its chips on AI duties a thousandfold over the previous 10 years, it’s raking in cash, and it’s reportedly very arduous to get your fingers on its latest AI-accelerating GPU, the H100.

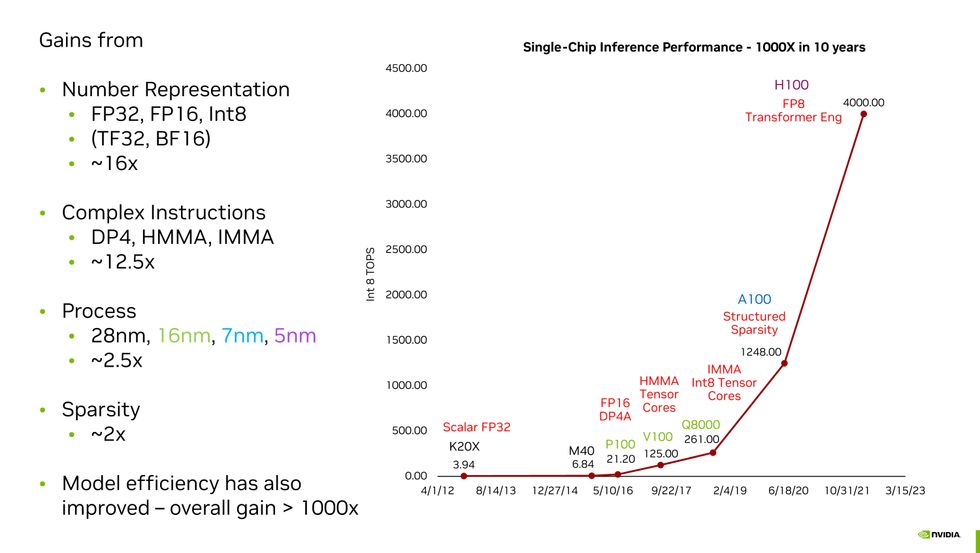

How did Nvidia get right here? The corporate’s chief scientist, Invoice Dally, managed to sum all of it up in a single slide throughout his keynote handle to the IEEE’s Scorching Chips 2023 symposium in Silicon Valley on high-performance microprocessors final week. Moore’s Legislation was a surprisingly small a part of Nvidia’s magic and new quantity codecs a really massive half. Put all of it collectively and also you get what Dally known as Huang’s Legislation (for Nvidia CEO Jensen Huang).

Nvidia chief scientist Invoice Dally summed up how Nvidia has boosted the efficiency of its GPUs on AI duties a thousandfold over 10 years.Nvidia

Nvidia chief scientist Invoice Dally summed up how Nvidia has boosted the efficiency of its GPUs on AI duties a thousandfold over 10 years.Nvidia

Quantity Illustration: 16x

“By and enormous, the most important achieve we obtained was from higher quantity illustration,” Dally informed engineers. These numbers symbolize the important thing parameters of a neural community. One such parameter is weights—the energy of neuron-to-neuron connections in a mannequin—and one other is activations—what you multiply the sum of the weighted enter on the neuron to find out if it prompts, propagating data to the following layer. Earlier than the P100, Nvidia GPUs represented these weights utilizing single precision floating-point numerals. Outlined by the IEEE 754 commonplace, these are 32 bits lengthy, with 23 bits representing a fraction, 8 bits appearing basically as an exponent utilized to the fraction, and one bit for the quantity’s signal.

However machine-learning researchers have been rapidly studying that in lots of calculations, they may use much less exact numbers and their neural community would nonetheless give you solutions that have been simply as correct. The clear benefit of doing that is that the logic that does machine studying’s key computation—multiply and accumulate—may be made quicker, smaller, and extra environment friendly if they should course of fewer bits. (The vitality wanted for multiplication is proportional to the sq. of the variety of bits, Dally defined.) So, with the P100, Nvidia minimize that quantity in half, utilizing FP16. Google even got here up with its personal model known as bfloat16. (The distinction is within the relative variety of fraction bits, which provide you with precision, and exponent bits, which provide you with vary. Bfloat16 has the identical variety of vary bits as FP32, so it’s simpler to change backwards and forwards between the 2 codecs.)

Quick ahead to at the moment, and Nvidia’s main GPU, the H100, can do sure elements of massive-transformer neural networks, like ChatGPT and different massive language fashions, utilizing 8-bit numbers. Nvidia did discover, nonetheless, that it’s not a one-size-fits-all resolution. Nvidia’s Hopper GPU structure, for instance, truly computes utilizing two completely different FP8 codecs, one with barely extra accuracy, the opposite with barely extra vary. Nvidia’s particular sauce is in figuring out when to make use of which format.

Dally and his crew have all types of attention-grabbing concepts for squeezing extra AI out of even fewer bits. And it’s clear the floating-point system isn’t ideally suited. One of many most important issues is that floating-point accuracy is fairly constant regardless of how huge or small the quantity. However the parameters for neural networks don’t make use of huge numbers, they’re clustered proper round zero. So, Nvidia’s R&D focus is discovering environment friendly methods to symbolize numbers so they’re extra correct close to zero.

Complicated Directions: 12.5x

“The overhead of fetching and decoding an instruction is many instances that of doing a easy arithmetic operation,” stated Dally. He identified one kind of multiplication, which had an overhead that consumed a full 20 instances the 1.5 picojoules used to do the mathematics itself. By architecting its GPUs to carry out huge computations in a single instruction relatively than a sequence of them, Nvidia made some enormous positive factors. There’s nonetheless overhead, Dally stated, however with advanced directions, it’s amortized over extra math. For instance, the advanced instruction integer matrix multiply and accumulate (IMMA) has an overhead that’s simply 16 p.c of the vitality value of the mathematics.

Moore’s Legislation: 2.5x

Sustaining the progress of Moore’s Legislation is the topic of billons and billions of {dollars} of funding, some very advanced engineering, and a bunch of worldwide angst. Nevertheless it’s solely answerable for a fraction of Nvidia’s GPU positive factors. The corporate has persistently made use of probably the most superior manufacturing know-how accessible; the H100 is made with TSMC’s N5 (5-nanometer) course of and the chip foundry solely started preliminary manufacturing of its subsequent era N3 in late 2022.

Sparsity: 2x

After coaching, there are various neurons in a neural community that will as properly not have been there within the first place. For some networks, “you possibly can prune out half or extra of the neurons and lose no accuracy,” stated Dally. Their weight values are zero, or actually near it; so they only don’t contribute the output, and together with them in computations is a waste of time and vitality.

Making these networks “sparse“ to scale back the computational load is difficult enterprise. However with the A100, the H100’s predecessor, Nvidia launched what it calls structured sparsity. It’s {hardware} that may pressure two out of each 4 doable pruning occasions to occur, resulting in a brand new smaller matrix computation.

“We’re not performed with sparsity,” Dally stated. “We have to do one thing with activations and might have higher sparsity in weights as properly.”

From Your Website Articles

Associated Articles Across the Internet

[ad_2]